Joystiq isn't scoring reviews anymore, and here's why

Ever since we started publishing reviews here at Joystiq, we've striven to deliver the most timely and definitive critiques we can. The word "definitive" is important in this conversation, because things don't get much more definitive than a review score. The very purpose of a score is to define something entirely nebulous and subjective – fun – as narrowly as possible. The problem is that narrowing down something as broad and fluid as a video game isn't truly useful, especially in today's industry.

Between pre-release reviews, post-release patching, online connectivity, server stability and myriad other unforeseeable possibilities, attaching a concrete score to a new game just isn't practical. More importantly, it's not helpful to our readers.

For that reason, above all others, we've decided that Joystiq will no longer score its reviews. Don't worry, we'll still give you a reason to scroll past the review before actually reading it (it's okay, we all do it), but the information you'll find will be more helpful and meaningful than a handful of stars. I've been mulling over this decision for several months and, after discussing it with the rest of the Joystiq staff, we decided that the new year was the perfect time to flip the switch. For a rundown of why 2014 was the year that broke the critic's back, and exactly how our new system will work, read on.

How did we get here?

If you've been reading Joystiq long enough, you might know that the idea of un-scored reviews is nothing new for us. We didn't really start reviewing games on a regular basis until 2009, and we didn't start adding scores until 2010. (Joystiq trivia time: I dug up what I think is Joystiq's very first review, and it actually was scored. It was Tomb Raider: Legend way back in 2006, and we gave it 7.0/10.) We avoided scores for a long time, thinking our words should speak for themselves. We did, however, finally decide to append scores to our reviews, at least in part because we wanted Joystiq represented on Metacritic. We did our best to make our five-star system worthwhile – more on that later – but obviously the editorial staff also believed that a Metacritic presence gave Joystiq greater legitimacy as an outlet and, of course, a larger potential audience.

Metacritic would later have an unexpected effect on our scoring system. When converted to Metacritic's 100-point scale, each of Joystiq's five stars translated to a whopping 20 points. Games with recognizable flaws but redeemable gameplay – three stars, according to our guidelines – showed up as 60/100. Wonderful games that were just shy of greatness – not quite good enough to get five stars – saw their four-star ratings converted to 80/100. We weren't comfortable with the way some games were represented, and after a few months we decided to add half-stars to the system. It's nice that we were able to reward good ideas that didn't quite come together – we added a half-star to Singularity, for example, which initially scored just three – but the incorporation of half-stars was also clearly a capitulation to the industry. I'm not saying it was the wrong thing to do, but it's illustrative of many of the problems that would follow. (Incidentally, Metacritic's posting of our Singularity review still reflects the original three-star score, in accordance with its own policies, which I'll touch on in a bit.)

The industry has evolved at an incredibly rapid pace over the last few years, and many of its changes have made the job of critiquing games very challenging. The advent of internet-connected consoles brought the once PC-exclusive phenomenon of post-release patching to the masses, and publishers have been taking advantage of it ever since. This enables developers and publishers to fix unforeseen problems but, as I've written recently, it also means that some games aren't truly "finished" until after launch (if they ever are). Many games have a "Day One Patch," which often includes vital fixes or even entire gameplay modes. Even if a game's code is up to snuff on day one, however, outside factors like server stability can still render it unplayable. These issues aren't new, but the last three years in particular seem to have had a rash of rough game launches, including the likes of Battlefield 4, SimCity, Diablo 3, DriveClub, Assassin's Creed Unity and Halo: The Master Chief Collection, to name some of the most prominent examples.

Meanwhile, even perfectly functional games can change dramatically after launch. Diablo 3, initial server problems aside, is a drastically different game now than it was in 2012. Many games, especially competitive ones, are actually designed to change based on community behavior. And of course there's the ever-growing category of "games as a service," which sees developers slowly adding to their games over time, introducing new features and addressing the feedback of a dedicated audience.

Taking all of these factors into account, the notion of a concrete, launch-day review score has become both antiquated and impractical. Take Battlefield 4 as an example. Our experience with Battlefield 4 prior to launch was excellent – we awarded it 4.5 stars – and most critics seemed to agree. Post-launch, the game suffered from numerous online problems for months, and all of those high scores began to look very silly. As a solution, some may suggest holding reviews until after launch, so that any potential problems can be noted. In Joystiq's experience, however, most people want reviews on launch day or before. That is when they are most widely read, according to our own statistics, and that makes sense, given that the majority of a game's lifetime sales usually happen during its launch period.

Others might argue that scores shouldn't be static, that we should change them periodically to reflect a game's current status. An interesting idea, maybe, but it only proves how arbitrary scores really are and, again, most people seek out and read reviews on or near launch day, so there's little point in changing scores after the fact. Also, as I mentioned above, Metacritic will not change an outlet's review score once it has been posted, so late adopters browsing for reviews wouldn't see an updated score anyway.

There's a more fundamental problem than posterity here though, specifically that scores are meaningless without the context of the full review. In general, all a score does is inform the reader whether or not a specific critic liked a game. We've done our best to make Joystiq's scoring system impart a little more information at a glance, as outlined in our review guidelines, but the basic assumption – that a score is a barometer for overall quality – is impossible to shake. I wouldn't be surprised if you didn't even know we had specific score guidelines until now.

Case in point, I recently reviewed Halo: The Master Chief Collection, and I awarded it 4.5 stars, essentially Joystiq's way of saying that fans of "this type of game" definitely don't want to miss it. I acknowledged the game's now infamous online matchmaking problems in the review, noting that it was "barely functional," and that "players who put a lot of priority in matchmaking will be disappointed." In the end, though, I felt that the incredible care that went into remastering Halo 2, and the sheer amount of quality content available in the rest of the package, warranted the high score.

A few weeks later, I wrote an editorial about the rash of "broken" games that have been released over the last few years, citing The Master Chief Collection's terrible matchmaking as one of 2014's most egregious examples. Some of the comments on the article asked what is more or less the same question: If The Master Chief Collection's matchmaking is "broken," then why did it get an almost "perfect" score? It's a valid question, one that I believe is answered by the review itself – and there's the rub.

Regardless of whatever nuance we ascribe to our scores, some will see five stars and immediately assume it means "perfect." It doesn't. Others see three stars and assume it means "awful." It doesn't. These scores don't really translate to the 90/100 or 60/100 you see on Metacritic either. We always intended our system to help people decide whether a certain game was worth their time, based on how much they like "this type of game," but what if a game defies a specific type? Who decides what defines a genre? What genre is Noby Noby Boy? The Stanley Parable? Device 6? Is Destiny an MMO, a shooter, or just a dungeon crawler?

A score can't answer these questions. A score can't tell you what a critic liked or disliked about a game, or why. It can't tell you what qualities are most valued by the review's author. Without the full context of a review to explain it, about the only thing a score is good for is deciding whether you want to take the time to read the review in the first place. There's nothing wrong with that impulse (goodness knows I've done it) but it serves to identify a problem. If a score is meaningless without context – context that can easily be ignored – then there's no reason to have a score at all.

What now?

"But I like scores," you say? We understand that, and we realize this move might not be popular, but we're also not going to leave all you score-lovers hanging. We know that you want to be able to get the gist of a game at a glance, and we're going to make sure that you still can – but you'll get much more information than a score could ever have provided.

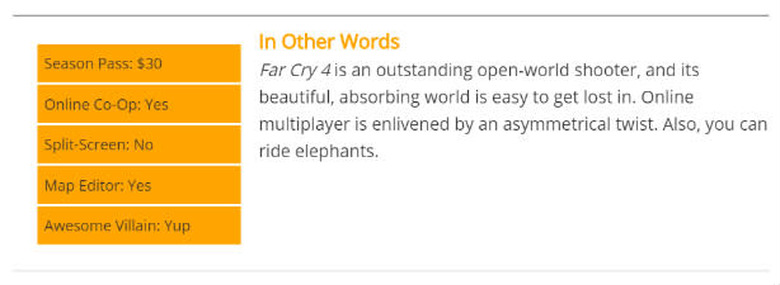

Going forward, at the bottom of every review, you'll find a quick summary of its important points, which we're calling "In Other Words." In a few sentences (a paragraph at most), we'll tell you what you need to know. Furthermore, we'll give you the Breakdown to help answer common questions like "Does it have a season pass?" and "Is there split-screen?" It should look a little something like this:

If that piques your interest, you'll probably want to read the whole review for a more detailed critique. And, of course, we'll still communicate the conditions under which a review was conducted, including what platform version was reviewed, whether we played a retail copy or "review code," whether we were able to play on public servers, and any other pertinent information. In the event that something unforeseen happens after publication – server problems, pervasive bugs, gameplay improvements, etc. – we'll append a link to the review directing readers to relevant news articles here on Joystiq.

Oh, and one more thing.

The Joystiq Excellence Award

Introducing the Joystiq Excellence Award. We know that nothing will ever replace the cold, mathematical decisiveness of a score, but we still wanted a way of recognizing the best of the best. The Excellence Award isn't something we'll hand out often. Whether they have innovative new concepts, tell a heart-wrenching story or simply execute established ideas in a brilliant way, Excellence Award winners are games we believe you absolutely must play.

It's the highest recommendation we can give, and I'm pleased to announce that our first Excellence Award winner is The Talos Principle. If you'd like to learn why we think it deserves our highest honor, you can read the review right here.

You can scroll right to the bottom, if you want, but we hope you'll stay for the whole thing.