3D audio is the secret to HoloLens' convincing holograms

Microsoft's spatial sound system is essential to the mixed reality experience.

The streets of Microsoft's campus are lined with tall fir trees. A drive through lush, green urban woods reveals dozens of nondescript buildings. Minibuses shuttle employees across the company's 500-acre headquarters in Redmond, Washington. Inside Building 99, a concrete and glass structure that houses Microsoft Research, Ivan Tashev walked through the quiet halls toward his lab, where he devised the spatial sound system for HoloLens.

Tashev leads the audio group at Microsoft Research, which is the second-largest computer science organization in the world. For HoloLens, a mixed reality headset that places holograms in your immediate environment, his team worked on a sound system that creates the illusion of 3D audio to bring virtual objects to life.

Mixed reality, like virtual reality, is a medium best known for its visual trickery. When you first try on the HoloLens, the thing that instantly grabs your attention is the holographic display: the aliens crawling out of the walls in RoboRaid or Buzz Aldrin walking on the surface of Mars. The device tricks your brain into seeing things that are only visible through the headset. But what makes the holograms seem realistic is the spatial sound that allows you to engage with the projections. You hear the alien enemies before they break out of the walls, and you can find the astronaut talking to you as he walks across the red planet.

"Spatial sound roots holograms in your world," says Matthew Lee Johnston, audio innovation director at Microsoft. "The more realistic we can make that hologram sound in your environment, the more your brain is going to interpret that hologram as being in your environment."

The HoloLens audio system replicates the way the human brain processes sounds. "[Spatial sound] is what we experience on a daily basis," says Johnston. "We're always listening and locating sounds around us; our brains are constantly interpreting and processing sounds through our ears and positioning those sounds in the world around us."

The brain relies on a set of aural cues to locate a sound source with precision. If you're standing on the street, for instance, you would know that an oncoming bus is on your right from the way its sound reaches your ears. The sound would reach the ear closer to the vehicle, on the right, slightly sooner than the one farther from it, on the left. It would also be louder in the nearer ear. These cues help you pinpoint the object's location. But there's another physical factor that affects the way sounds are perceived.
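The timing cue in the bus example can be estimated with a textbook model. The sketch below uses Woodworth's spherical-head approximation, which treats the head as a rigid sphere; the function name and the 8.75 cm head radius are assumptions chosen for illustration, not details of the HoloLens system. For a source directly to one side it gives an interaural time difference of roughly 0.66 milliseconds, which is the scale of delay the brain works with.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at roughly 20 degrees C
HEAD_RADIUS = 0.0875    # m; a commonly used average head radius (assumed)


def woodworth_itd(azimuth_deg: float, head_radius: float = HEAD_RADIUS) -> float:
    """Interaural time difference, in seconds, for a distant source.

    Woodworth's spherical-head approximation:
        ITD = (a / c) * (theta + sin(theta))
    where theta is the azimuth measured from straight ahead. This simple
    form only covers frontal azimuths (|theta| <= 90 degrees).
    Positive azimuth = source on the listener's right, so the right ear leads;
    a negative result means the left ear leads.
    """
    if abs(azimuth_deg) > 90:
        raise ValueError("this simple form covers frontal azimuths only")
    theta = math.radians(azimuth_deg)
    return (head_radius / SPEED_OF_SOUND) * (theta + math.sin(theta))


# A bus straight ahead, slightly to the right, and directly to the right.
for az in (0, 30, 90):
    print(f"{az:>3} deg -> {woodworth_itd(az) * 1e6:6.1f} microseconds")
```

The level cue is harder to model this simply, since it depends strongly on frequency and on how the head shadows the far ear, so it is left out of this sketch.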

Before a sound wave enters a person's ear canals, it interacts with the outer ears, the head and even the neck. The shape, size and position of these body parts add a unique imprint to each sound. That imprint is described by the head-related transfer function (HRTF), and because anatomy varies from person to person, everyone hears sounds a little differently.
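For readers who want the formal version: in the acoustics literature the HRTF is commonly defined, separately for each ear, as a ratio of frequency spectra. The notation below is the standard textbook form, not something taken from Microsoft's work. For a source at azimuth $\theta$ and elevation $\varphi$,

$$
H_{L}(\theta,\varphi,f) = \frac{P_{L}(\theta,\varphi,f)}{P_{0}(f)}, \qquad
H_{R}(\theta,\varphi,f) = \frac{P_{R}(\theta,\varphi,f)}{P_{0}(f)}
$$

where $P_{L}$ and $P_{R}$ are the sound pressures at the left and right ear canals at frequency $f$, and $P_{0}$ is the pressure that would be measured at the center of the head with the listener absent. The time-domain counterpart of each $H$ is the head-related impulse response (HRIR), which is the form that gets convolved with audio in practice.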

These subtle differences make up the most crucial part of a spatial-sound experience. For the aural illusion to work, all the cues need to be generated with precision. "A one-size-fits-all [solution] or some kind of generic filter does not satisfy around one-half of the population of the Earth," says Tashev. "For the [mixed reality experience to work], we had to find a way to generate your personal hearing."

His team started by collecting reams of data in the Microsoft Research lab. They captured the HRTFs of hundreds of people to build their aural profiles. The acoustic measurements, coupled with precise 3D scans of the subjects' heads, collectively built a wide range of options for HoloLens. A quick and discreet calibration matches the spatial hearing of the device user to the profile that comes closest to his or hers.

Drone concept for RoboRaid. Image: Microsoft

Back at the Microsoft campus, on a bright, sunny morning in late August, Tashev walked into his lab in Building 99. Dressed in black pants and a platinum-gray shirt that matched his hair, he pulled open the heavy doors to a concealed room where he carries out the acoustic measurements. The walls, covered with large foam wedges, insulate the space from the rest of the building. The floor is made up of a wire mesh that sits atop another layer of sound absorbers at the bottom. The structure soaks up all sounds and vibrations to create an anechoic chamber, or a space that is devoid of echoes.

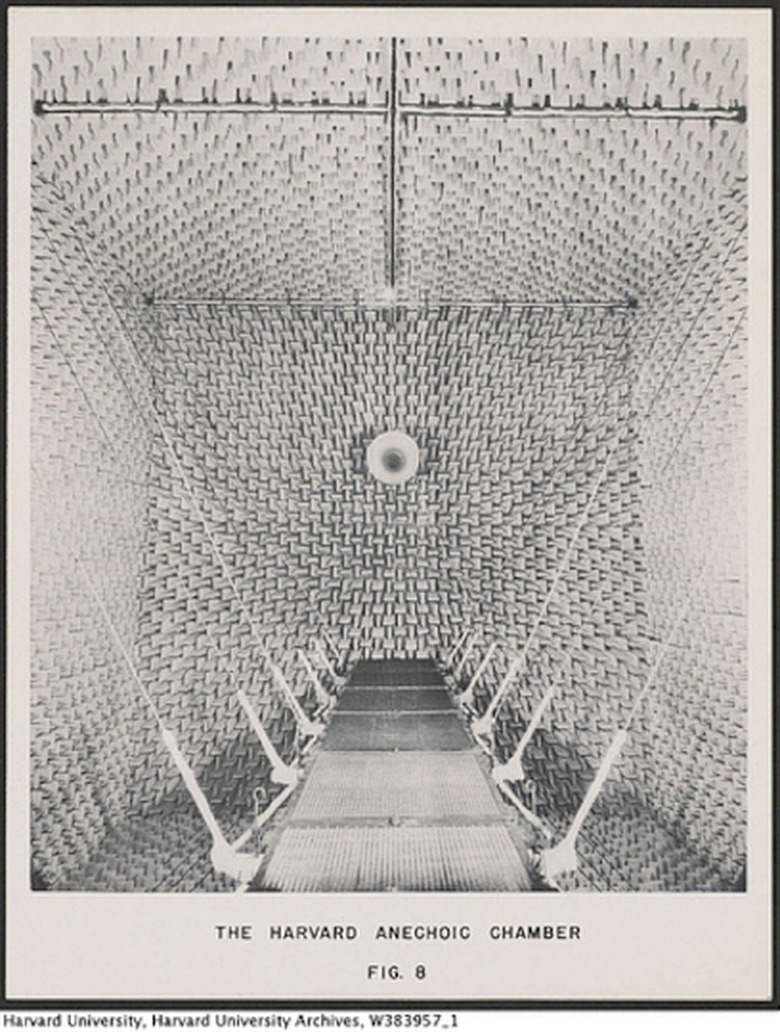

The Harvard anechoic chamber built in 1943. Image: Harvard University Archives

After a few minutes, the echoless chamber starts to feel uncomfortable, even unnatural. The blood pumping through the heart becomes more audible. The ebb and flow of the air in the lungs comes into focus. It's a feeling that is often experienced inside anechoic rooms, which have been around for many decades. Dr. Leo Beranek, the director of Harvard's electroacoustic lab, built the first one in 1943 to test broadcasting systems and loudspeakers and to improve noise control during WWII. Since then, similar spaces have been designed to test microphones and to measure HRTFs for multi-directional audio systems.

At Microsoft, Tashev's chamber has a black leather chair at the center of the room where the HRTFs of 350 people have been measured. After a pair of small, orange microphones has been placed inside the ears of a subject, a black rig equipped with 60 speakers slowly rises from the back. As the contraption moves in an arc over the person, it stops at brief intervals to play sharp, successive, laser-like sounds. The microphones capture the sound waves as they enter the ear canals of the participant.

By playing sounds all around the listener, the team is able to capture the precise audio cues for both right and left ears in relation to 400 directions in the room. These measurements give them a pair of HRTF filters for each sound source. "If we know these filters for all possible directions, then we own your spatial hearing," says Tashev. "We can trick your brain and make you perceive that the sound comes from any desired direction."


To place a hologram at a particular location, the filter pair corresponding to that direction is applied to its sound. When the HoloLens plays those filtered sounds, the HRTF cues trick the human brain into locating the source almost instantly.
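The filtering step can be sketched in a few lines. This is a minimal illustration under stated assumptions: the lookup table, its three directions and its 3-tap impulse responses are hypothetical toy values (a real measured set would cover hundreds of directions, such as the 400 described above, with much longer responses), the function names are made up, and picking the single nearest measured direction is a simplification. Production renderers typically interpolate between directions and use FFT-based convolution.

```python
import math
from typing import Dict, List, Tuple

# (azimuth_deg, elevation_deg); positive azimuth = listener's right
Direction = Tuple[float, float]
# (left-ear impulse response, right-ear impulse response)
HrirPair = Tuple[List[float], List[float]]


def angular_distance(a: Direction, b: Direction) -> float:
    """Great-circle angle, in radians, between two directions."""
    az1, el1 = map(math.radians, a)
    az2, el2 = map(math.radians, b)
    cos_angle = (math.sin(el1) * math.sin(el2)
                 + math.cos(el1) * math.cos(el2) * math.cos(az1 - az2))
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, cos_angle)))


def convolve(signal: List[float], kernel: List[float]) -> List[float]:
    """Direct-form linear convolution (adequate for short toy filters)."""
    out = [0.0] * (len(signal) + len(kernel) - 1)
    for i, s in enumerate(signal):
        for j, k in enumerate(kernel):
            out[i + j] += s * k
    return out


def spatialize(mono: List[float], target: Direction,
               hrir_table: Dict[Direction, HrirPair]) -> Tuple[List[float], List[float]]:
    """Filter a mono signal with the HRIR pair measured nearest to `target`."""
    nearest = min(hrir_table, key=lambda d: angular_distance(d, target))
    left_ir, right_ir = hrir_table[nearest]
    return convolve(mono, left_ir), convolve(mono, right_ir)


# Hypothetical three-direction table with made-up 3-tap responses:
# for a source on the right, the left ear hears it later and quieter.
TOY_TABLE: Dict[Direction, HrirPair] = {
    (90.0, 0.0): ([0.0, 0.0, 0.5], [1.0, 0.0, 0.0]),
    (-90.0, 0.0): ([1.0, 0.0, 0.0], [0.0, 0.0, 0.5]),
    (0.0, 0.0): ([0.8, 0.0, 0.0], [0.8, 0.0, 0.0]),
}

# A sound placed slightly in front of and above the listener's right side.
left, right = spatialize([1.0, 0.5], (80.0, 5.0), TOY_TABLE)
```

Here the target direction is closest to the entry at 90 degrees, so the right channel carries the sound immediately while the left channel receives a delayed, halved copy: the same timing and level cues discussed earlier, produced purely by filtering.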

Despite the realism, the paraphernalia required to generate spatial sound has kept it from replacing stereo and surround systems for the masses. Apart from the precise acoustic measurements, it also requires constant head-tracking. The orientation of the head has a direct impact on the way sounds reach the ears. If you're looking away from the bus on the street, for instance, it will sound different than if you're looking straight at it.

For HoloLens, however, the team did not need to tackle the head-tracking problem from scratch. The holographic visuals work in part because one of the six cameras in the device monitors the user's head movements at all times. The audio system simply taps into that information.
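The reason head tracking matters can be shown with a yaw-only sketch: a hologram stays fixed in the room, so every time the head turns, the direction used to pick its HRTF filters has to be recomputed relative to the head. The function name and angle conventions here are assumptions for illustration; a real system would use the full 3D head orientation reported by the tracker rather than a single yaw angle.

```python
def head_relative_azimuth(source_azimuth_deg: float, head_yaw_deg: float) -> float:
    """Azimuth of a world-fixed sound source relative to where the head points.

    Both angles are in degrees in the same world frame, positive to the right.
    The result is wrapped to the range [-180, 180).
    """
    relative = source_azimuth_deg - head_yaw_deg
    return (relative + 180.0) % 360.0 - 180.0


# The bus from the street example sits at 90 degrees (to the right) in the world.
bus = 90.0
for yaw in (0.0, 90.0, -90.0):
    # Looking ahead, facing the bus, and facing away from it.
    print(f"head yaw {yaw:+.0f} deg -> bus heard at {head_relative_azimuth(bus, yaw):+.0f} deg")
```

Looking straight ahead, the bus is rendered at 90 degrees to the right; turning to face it moves it to 0 degrees; turning away puts it directly behind. Feeding the recomputed angle into the filter lookup on every tracking update is what keeps the sound anchored in place.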

Microsoft is not the first or only company with the ability to create personalized audio. For most 3D audio experiences in VR, creators have been relying on HRTF databases that are publicly available or turning to research labs where audio personalization has been possible for a number of years. At Princeton University, Edgar Choueiri, a professor of mechanical and aerospace engineering, has been using the microphone-in-ears technique for the past few years. And VisiSonics, a company based in the University of Maryland's research lab, has been measuring HRTFs to build its own library.

But Microsoft's audio system stands apart for its engineering, which makes the audio calibration invisible to the HoloLens user. While the personalization isn't as perfect as it tends to be inside a controlled lab, it is a lot less tedious.

The first time you wear the device, you start with a wizard that guides you through a calibration for the eyes. For the holographic effect to work, the computer around your head needs to measure the distance between your pupils. It asks you to close one eye, hold your finger up and tap down on a projected image in front of you. You repeat the process with the other eye so the system can calculate the interpupillary distance. But that's not all the system is doing. Baked into this process is an algorithm that correlates the eye measurements with data from Tashev's research, in which the eyes and ears of hundreds of subjects were scanned and measured to build a generic average. Essentially, the distance between the eyes becomes an indicator of the distance between the two ear canals of the person using the device.

The idea is to make the information-gathering process as inconspicuous as possible. "I think we have succeeded," says Tashev. "Today the final user doesn't even know when or how the personalization of the HRTFs happens."

The same push for unobtrusiveness extends to the hardware. While the dynamism of spatial sound is best maintained and experienced over headphones, the HoloLens team needed to avoid covering the user's ears to keep the mixed reality effects intact. "We quickly realized that the user would like to hear the environment around them in addition to the sound from the holograms," says Håkon Strande, senior program manager at Microsoft. "So we needed something that was outside the ear but close enough to make sure the sound reached the ear at a certain level of loudness."

Strande describes one early iteration of the HoloLens that had small tubes folding down from the band to direct sound into the ear canals. Another concept swapped the tubes for earbuds that popped into the user's ears. But the team eventually engineered a pair of thin, red speakers that sit on a band right above the user's ears.

"Most people don't realize that [the speakers] are there," says Strande. "The first time they try the device and when they hear the sound in the space around them they think there are speakers located in the room around them that are playing the sounds. That's how convincing the effect and simulation is at this point."

Microsoft's spatial audio, while active and effective in HoloLens, isn't limited to the device. It's essentially baked into the operating system so it can work across devices that rely on Windows 10. With new VR headsets announced for the Windows ecosystem at the Surface event in October, perhaps the spatial audio technique will translate from a holographic mixed reality to a fully immersive virtual space.

"Audio is important in mixed reality and in VR because it ties the experience together," says Strande. "It is often the second thing that game and app developers think about, but without audio you don't suspend disbelief. To bring something to life, it has to have a sound aspect to it — especially if they're holograms that are moving around you."