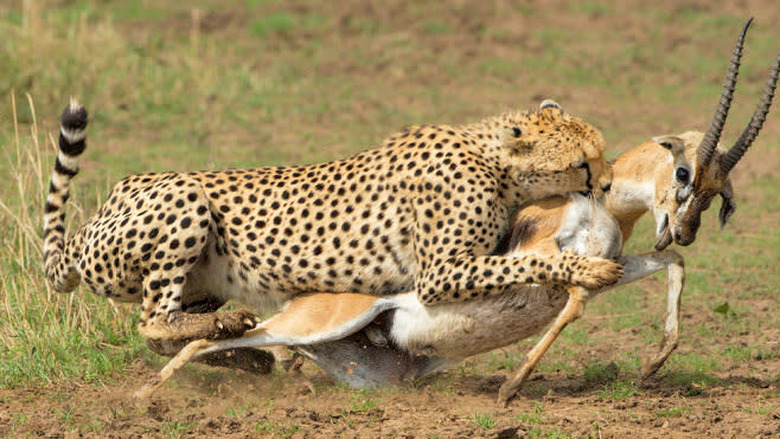

Scientists are teaching robots how to hunt down prey

One day this could be a very important skill for robots across the world.

Intelligent robots are all well and good until they start learning how to hunt prey. That's exactly what a team of scientists at the Institute of Neuroinformatics at the University of Zurich in Switzerland taught one to do. They trained a robot to behave like a predator and hunt its "prey," another robot controlled by a human, using special software that helps the predator mark its target and pounce.

The applications of these lessons are a lot less terrifying than the idea of robots hunting the human race. The goal is software that could allow robots to take a look at their environments and pick out a target in real time.

For instance, as Tobi Delbruck, professor at the Institute of Neuroinformatics, explained, "one could imagine future luggage or shopping carts that follow you." That takes the software beyond the labels of "predator and prey" to something closer to "parent and child," though the underlying principles stay the same.

The predator robot's hardware is modeled directly on the animal kingdom: the robot uses a special "silicon retina" that mimics the human eye. Delbruck is its inventor, and it was created as part of the VISUALISE project. Its pixels detect changes in illumination and transmit that information in real time, instead of capturing the slower series of frames that a regular camera uses.
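To get a feel for the difference between "changes" and "frames," here is a rough Python sketch. It is not Delbruck's sensor or the team's software, just an illustration: the function name `brightness_events`, the `threshold` value, and the use of log brightness are all assumptions made for the example. A real silicon retina also does this per pixel in hardware as the light changes, rather than by comparing two stored snapshots as this toy version does.

```python
import math

def brightness_events(previous, current, threshold=0.2):
    """Hypothetical helper: list (row, col, polarity) for pixels whose brightness changed."""
    events = []
    for r, (prev_row, cur_row) in enumerate(zip(previous, current)):
        for c, (before_px, after_px) in enumerate(zip(prev_row, cur_row)):
            # Compare log brightness (an assumption); the +1 avoids log(0).
            delta = math.log(after_px + 1) - math.log(before_px + 1)
            if abs(delta) > threshold:
                # Polarity is +1 for brighter, -1 for darker.
                events.append((r, c, 1 if delta > 0 else -1))
    return events

# Two tiny 2x3 "scenes": only the middle pixel of the bottom row gets brighter.
before = [[10, 10, 10],
          [10, 10, 10]]
after = [[10, 10, 10],
         [10, 80, 10]]

events = brightness_events(before, after)
print(events)
```

In this toy case only one pixel out of six reports anything, which is the appeal of the approach: unchanged parts of the scene produce no data at all, so there is far less to process than with a full frame.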

That data is processed by a neural network that helps the robot learn and adapt the actions it should take when it "sees" something similar, which allows it to track prey better the next time it's asked to.

It's a wild world out there, and no doubt robots are going to be a huge part of it going forward. Just don't be surprised when you start seeing them taking point on newer operations just like these in the future.