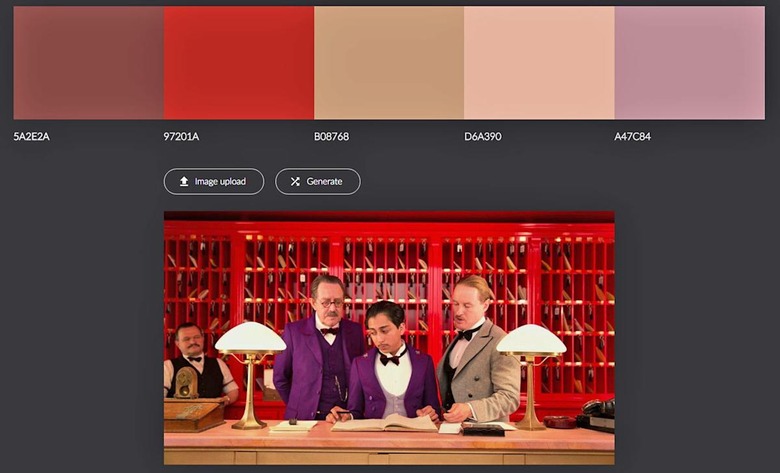

Use AI to turn your favorite film into a color palette

Make your website look like "Total Recall" or a Michael Bay film.

If you're seeking color inspiration from a distinctive-looking film like The Grand Budapest Hotel, you could just "eyedrop" it in Photoshop or try an app like Adobe Color CC. Thanks to Vancouver-based developer Jack Qiao, though, there's now a slightly easier way. He came up with Colormind, an AI algorithm that uses films, video games, fashion and art to "generate color suggestions that fit the distinct visual style of those mediums," he says.

In coming up with his system, Qiao writes that he first looked at color quantization, specifically modified median cut quantization (MMCQ), in which an algorithm extracts representative colors from an image. However, those colors are often "haphazard" and not very useful for design, unlike human-made palettes that feature "similar hues grouped together ... and some minimum amount of contrast between each other," he says.
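To give a rough idea of what "extracting representative colors" means, here is a minimal sketch of plain median cut in Python. It is not Qiao's code and it is simpler than MMCQ proper; the function name `median_cut` and the pixel format (a list of RGB tuples) are assumptions made for illustration.

```python
from typing import List, Tuple

Color = Tuple[int, int, int]


def channel_range(box: List[Color], channel: int) -> int:
    """Spread of one RGB channel (0=R, 1=G, 2=B) across a box of pixels."""
    values = [p[channel] for p in box]
    return max(values) - min(values)


def widest_range(box: List[Color]) -> int:
    """Largest single-channel spread in a box."""
    return max(channel_range(box, c) for c in range(3))


def median_cut(pixels: List[Color], n_colors: int) -> List[Color]:
    """Plain median cut (illustrative, not MMCQ): keep splitting the box
    with the widest channel spread at its median until n_colors boxes exist,
    then return the average color of each box."""
    boxes = [list(pixels)]
    while len(boxes) < n_colors:
        idx = max(range(len(boxes)), key=lambda i: widest_range(boxes[i]))
        box = boxes[idx]
        if widest_range(box) == 0:
            break  # every remaining box holds a single color
        channel = max(range(3), key=lambda c: channel_range(box, c))
        ordered = sorted(box, key=lambda p: p[channel])
        mid = len(ordered) // 2
        boxes[idx:idx + 1] = [ordered[:mid], ordered[mid:]]
    return [
        tuple(sum(p[c] for p in box) // len(box) for c in range(3))
        for box in boxes
    ]
```

Feeding it the pixels of a film still and asking for, say, eight colors gives the kind of raw candidate list that Qiao describes as haphazard: accurate to the image, but not arranged with a designer's eye.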

To find a balance between the two, Qiao thought about using an adversarial-network deep-learning system, but instead "settled on a brute-force technique that I call generative-MMCQ." Basically, it selects representative colors using quantization, shuffles them randomly, and runs the resulting candidates through a classifier, "the ultimate judge of a 'good looking' color palette," Qiao writes. He trained that classifier on hand-picked examples, with the aim of favoring palettes with decent color contrast and a solid theme over random-looking ones with poor contrast.
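The shuffle-and-judge loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: Qiao's judge is a trained classifier, whereas `toy_score` here is a hand-written, hypothetical stand-in that simply rewards brightness contrast between neighboring colors. The names `best_palette`, `toy_score` and `brightness` are invented for this example.

```python
import random
from typing import Callable, Optional, Sequence, Tuple

Color = Tuple[int, int, int]
Palette = Tuple[Color, ...]


def brightness(color: Color) -> float:
    """Rough perceived brightness of an RGB color, on a 0-255 scale."""
    r, g, b = color
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def toy_score(palette: Palette) -> float:
    """Hypothetical stand-in for the trained classifier: the smallest
    brightness gap between neighboring colors (bigger is better)."""
    return min(
        abs(brightness(a) - brightness(b))
        for a, b in zip(palette, palette[1:])
    )


def best_palette(
    candidates: Sequence[Color],
    size: int = 5,
    tries: int = 200,
    score: Callable[[Palette], float] = toy_score,
    seed: Optional[int] = None,
) -> Palette:
    """Brute force: draw random orderings of the quantized colors and
    keep whichever one the judge scores highest."""
    rng = random.Random(seed)
    best: Palette = ()
    best_score = float("-inf")
    for _ in range(tries):
        palette = tuple(rng.sample(list(candidates), size))
        current = score(palette)
        if current > best_score:
            best, best_score = palette, current
    return best
```

Calling `best_palette` on a list of quantized colors with a different `seed` (or none) each time yields a different pick, which is roughly what happens when you keep clicking "generate." Swapping `toy_score` for a trained model would bring the sketch closer to the approach the article describes.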

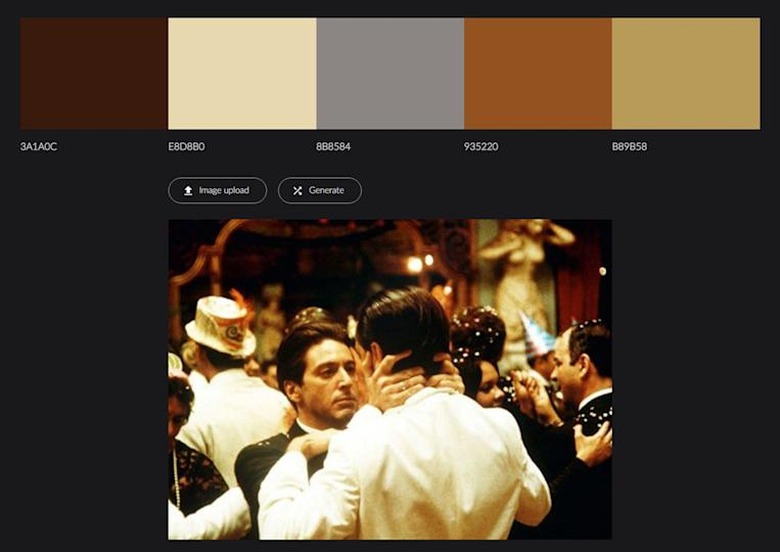

After testing it on films like The Godfather Part II, Jaws, Total Recall and random Michael Bay films (for that teal-and-orange look), I found the results to be mixed. Sometimes it doesn't choose representative colors to my liking, and sometimes the range of hues is too narrow for a design. Since it's pseudo-random, however, you can just keep clicking until you get a palette that you like. Try it yourself by uploading an image or video and clicking "generate"; it's pretty fun.