Apple's Portrait Lighting uses AI to color our memories

Its talent for altering a photo's setting and lighting will bother purists.

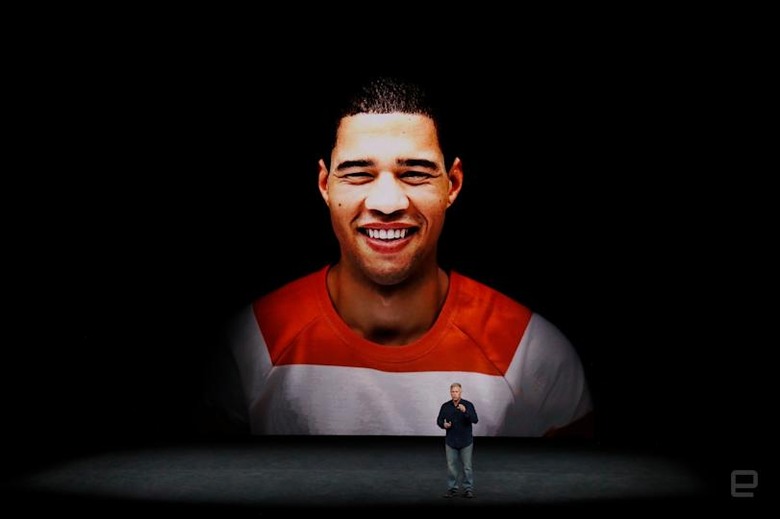

People already hate inane Snapchat-like AI photo filters, but a new trick called Portrait Lighting on Apple's iPhone 8 Plus and X might cause even more dismay. Here's how Apple VP Phil Schiller describes it: "You compose a photo, the dual cameras and the ISP sense the scene, they create a depth map, and they actually change the lighting contours over the face." At first glance, that sounds like a nice, innocent feature, but it might one day create much more consternation than puking rainbows.

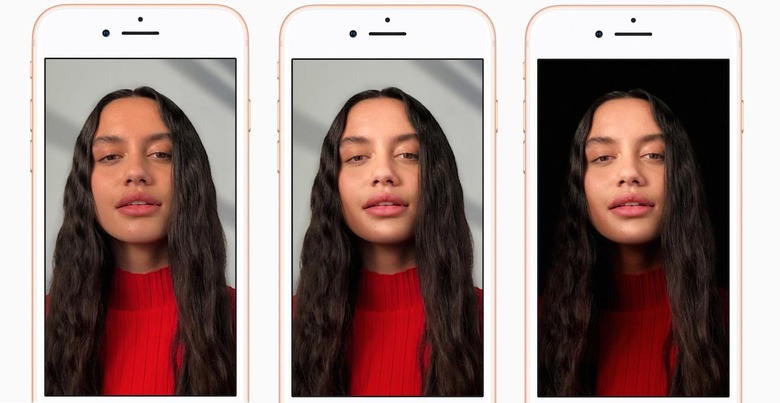

In the two photos on the right (above), there's distinctive shading on the model's right jaw that's simply not there on the original, no matter how much you crank the contrast (I tried). It helps contour the face better, giving it a more three-dimensional look, much as a portrait photographer would by using lights.

The tool, available on Apple's iPhone 8 Plus and X, creates that shading artificially (using the "Contour Light" setting, natch) by adding a virtual "lowlight" and casting it on her jawline with an assist from the depth map. It does all of this in real time, before you take the shot. It can also replace the background completely in the "Stage Light" setting, among other tricks. All of that is done with machine learning running on Apple's new A11 Bionic chip, and it can add up to a more professional, flattering or dramatic portrait.

What's wrong with that? "Light field" camera tech from Lytro and others can already defocus the background of a shot after it's taken. Photoshop processing is commonplace for studio work, and photographers have been altering finished prints since the beginning of photography by "dodging and burning" regions in the darkroom.

But there is a slight difference: The AI is adding something that wasn't there before, and most folks viewing the photo will be unaware of that. Instead of being a representation of a moment in time, you could argue, the result is more like a visual effect.

Photo purists, many of whom hate the idea of using Photoshop at all, will really hate this. "Digital is for people that create things in post. Photography is for people that get it right at capture. Problems occur when digital folks mistakenly believe that they are actually photographers," said one bitter commenter in an Fstoppers article about Photoshop.

Smartphones in particular have been held up as a paragon of photographic virtue. Without large sensors and pricey lenses, you're forced to focus on basics like subject matter, composition and color. For the iPhone Photography Awards, for instance, "photos should not be altered in Photoshop or any desktop image processing program," the rules state.

The new Portrait Lighting modes work on both the rear camera and the front camera for selfies. Who doesn't want studio-quality selfies? But as with all things AI, the slope can get slippery. What's to stop someone from creating even more dramatic filters that seamlessly and invisibly change locations, facial features or the people in a scene?

In effect, that would change photos from a sort of historical record into a record of how we want to be seen. That trend is already happening, thanks to social media, which often shows laughably upbeat representations of our friends' and families' lives.

In one way, AI is taking us back in time to when people had highly idealized depictions of themselves painted in oil. Have you ever looked at a painting from the pre-photography era and wondered, "What did this person really look like?"

Don't get me wrong: Apple's machine learning tricks are nothing like that. Quite the opposite; they give the average user a nice way to create sweet portraits and selfies, and other photographers are sanguine about Apple's post-processing. "I'm a bit open minded to Apple's new 'Portrait Mode,'" cinematographer Phil Holland tells Engadget. "In reality it's a very advanced photo editor and I think it's going to be fun for the target audience for sure. I'm cool with anything to make cell phone snaps more fun for all."

However, Portrait Lighting relies on machine learning, and as Elon Musk, Bill Gates, Stephen Hawking and others have warned us, with AI it's important to consider where the technology is going, not just where it is now. If Apple's AI is just the start, we can expect far more radical photo transformations that won't just be for laughs but will be used to flatter the user and trick viewers. At some point, will your descendants look at old digital shots of you and say, "Gosh, I wonder what Grandma really looked like?"