Modern copyright law can't keep pace with thinking machines

Who owns the work of an artificial intelligence?

This past April, engineer Alex Reben developed and posted to YouTube "Deeply Artificial Trees," an art piece powered by machine learning that leveraged old Joy of Painting videos. It generates gibberish audio in the speaking style and tone of Bob Ross, the show's host. Bob Ross' estate was not amused, subsequently issuing a DMCA takedown request and having the video knocked offline until very recently. Much like Naruto, the famous selfie-snapping black crested macaque, the Trees debacle raises a number of questions about how the Copyright Act of 1976, its fair use doctrine and the DMCA should be applied to a rapidly evolving technological culture, especially as AI and machine learning techniques approach ubiquity.

Questions like, "If a human can learn from a copyrighted book, can a machine learn from [it] as well?" as Reben recently put it to Engadget. Much of Reben's art, supported by non-profit Stochastic Labs, seeks to raise such conundrums. "Doing something that's provocative and doing something that's public, I think, starts the conversation and gets them going in a place where the general public can start thinking about them," he said.

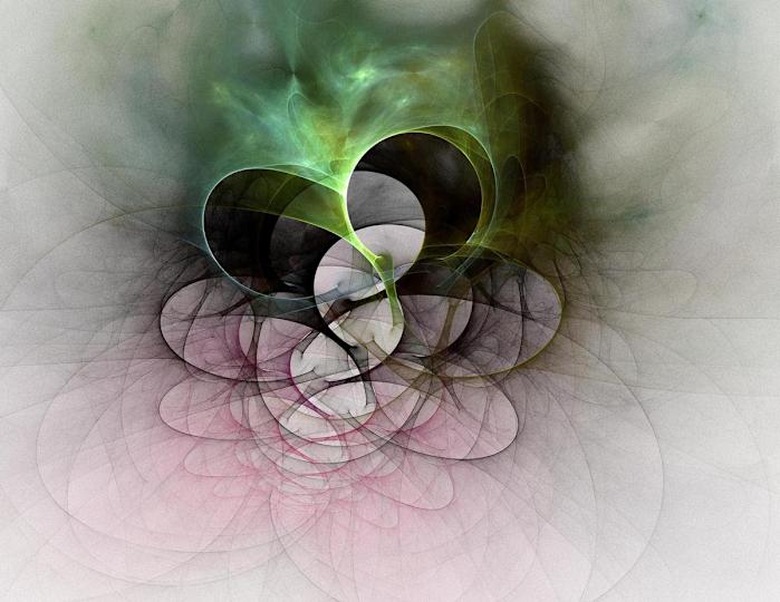

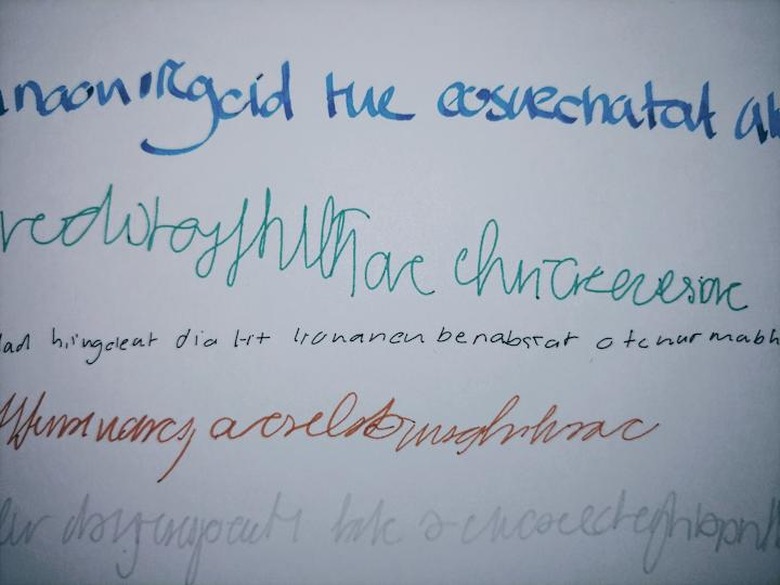

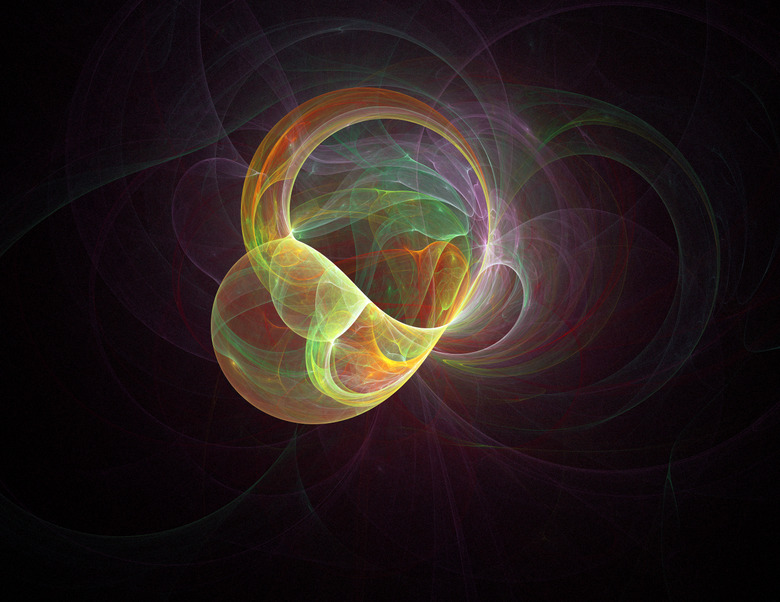

To that end, Reben creates projects like Let Us Exaggerate, "an algorithm which creates gobbly-gook art-speak from learning Artforum articles"; Synthetic Penmanship, which accurately mimics a person's handwriting; Korible Bibloran, an algorithm that generates new scripture based on its understanding of the Bible and Koran; and Algorithmic Collaboration: Fractal Flame, which blurs the line of creatorship between human and machine.

"I start with a program which generates phrases for me to think about, for example 'obtrusive grass,'" Reben explained of Algorithmic Collaboration. He then thinks about the phrase while an EEG and other sensors record his reactions. That data is then fed into an art-generating algorithm to create an image. "The digital version uses IFS fractal generation where the color palette is chosen by the computer from the phrase used in Google image search results," he said, "then displays different versions for me to choose from by measuring my reactions to the images."

New technology running afoul of existing copyright law is nothing new, mind you. "In the 1980s, US Courts of Appeals evaluated who 'authors' images of a videogame that are generated by software in response to a player's input," Ben Sobel, an affiliate at Harvard University's Berkman Klein Center for Internet and Society, told Intellectual Property Watch in August. "IP scholars have been writing about how to treat output generated by an artificial intelligence for at least 30 years."

One of the big sticking points between AI and copyright law centers around how these systems are trained, specifically the process of machine learning. Most such systems rely on vast quantities of data (images, text or audio) that enable the computer to discover patterns within them. "Well-designed AI systems can automatically tweak their analyses of patterns in response to new data," Amanda Levendowski, a clinical teaching fellow at New York University Law School, argues in her forthcoming Washington Law Review study. "Which is why these systems are particularly useful for tasks that rely on principles that are difficult to explain, such as the organization of adverbs in English, or when coding the program would be impossibly complicated."

Problems arise, however, when the datasets used to train AIs include copyrighted works without the permission of the rightsholder. "This is presumptively copyright infringement unless it's excused by something like fair use," Sobel explained. This is precisely the issue that Google ran into when it launched the Google Books initiative in 2005 and was promptly sued for copyright infringement.

In Authors Guild v. Google, the plaintiff argued that by digitizing and annotating some 20 million titles, the search company had violated the Guild's copyrights. Google countered that its actions were protected under fair use. The case was finally resolved last year when the Supreme Court declined to hear the Guild's appeal, leaving a lower court's ruling in favor of Google standing. Courts often excuse this kind of large-scale copying as fair use. "This is often because the uses are what some scholars call 'non-expressive,'" Sobel told IPW. "They analyze facts about works instead of using authors' copyrightable expression."

Things get even stickier when AI is trained to create expressive works, as when Google fed its system 11,000 romance novels to improve the AI's conversational tone. The fear, Sobel explains, is that the subsequent, AI-generated work will supplant the market for the original. "We're concerned about the ways in which particular works are used, how it would affect demand for that work," he said.

"It's not inconceivable to imagine that we would see the rise of the technology that could threaten not just the individual work on which it is trained," Sobel continued. "But also, looking forward, could generate stuff that threatens the authors of those works." Therefore, he argued to IPW, "If expressive machine learning threatens to displace human authors, it seems unfair to train AI on copyrighted works without compensating the authors of those works."

It's part of what Sobel calls the "fair use dilemma." On one hand, if expressive use of machine learning isn't protected by the fair use doctrine, any author whose work was used as even a single part of a massive training data set would be able to sue. This would create a major impediment to the further development of AI technology. On the other, Sobel asserted to IPW, "a hyper-literate AI would be more likely to displace humans in creative jobs, and that could exacerbate the income inequalities that many people fear in the AI age."

These legal ramifications affect more than just the defendant's pocketbook. The rules around copyright influence the AI itself. If you can't train your literary AI on copyrighted materials, you've got to look elsewhere, such as the public domain. The problem is that many of those titles, having been written prior to the 1920s largely by white, Western, male authors, are themselves inherently biased.

Another example is the Enron emails, which were released to the public domain by the Federal Energy Regulatory Commission in 2003. This dataset contains 1.6 million emails and offers very low legal risk, as Enron and its ex-employees aren't in a position to sue anyone. However, the dataset is typically only used to train spam filters, namely because the emails are full of lies.

"If you think there might be significant biases embedded in emails sent among employees of [a] Texas oil-and-gas company that collapsed under federal investigation for fraud stemming from systemic, institutionalized unethical culture, you'd be right," Levendowski wrote. "Researchers have used the Enron emails specifically to analyze gender bias and power."

Even more recent sources, like those shared under Creative Commons licenses, are not without at least a small degree of bias. Wikipedia, for example, is a massive trove of information, all of which is reproducible under its Creative Commons licensing, which makes it a desirable dataset for machine learning. However, as Levendowski points out, 91.5 percent of the site's editors identify as male, which, intentionally or not, may influence how information relating to women and women's issues is presented. This, in turn, could influence the AI's algorithmic output.

Frustratingly, solutions for these issues are hard to come by. Just as with the "timeshifting" fair use issues presented by DVRs or Naruto the macaque's selfie, each new platform presents unique technological wrinkles and legal ramifications which must slowly wind their way through the court system for interpretation and guidance.

Sobel points to a number of competing proposals for who should hold the copyright in a machine-generated work, such as the person who operated the computer or the people who developed the program. "Perhaps there will be no copyright whatsoever," he said, which was the case with Naruto's selfie. "The more broad ranging proposals involve giving rights to the computer, which I think would require more dramatic reform in order to recognize an algorithm as a rights holding entity. I think that that's a bit further afield. But to be honest, yeah, there's no great answer."