Robots can learn tasks by watching and mimicking humans

Researchers at NVIDIA unveiled a new deep learning system.

This week, NVIDIA revealed that its researchers have developed a deep learning system that allows a robot to learn a task by watching a human perform it. The focus is on communication between robot and human: the robot observes the human's actions and then mimics them.

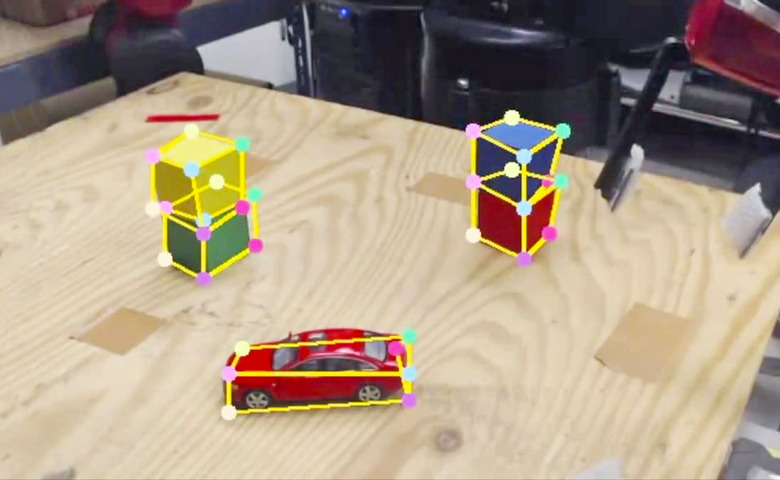

After a robot witnesses a task being performed, it comes up with a list of the steps needed to reproduce that task. A human can then review the list to confirm it is correct before the robot carries out the steps. In a video demonstration, the researchers showed the robot a pair of stacked cubes, which it then had to place in the correct order.
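The observe, list, review, execute flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not NVIDIA's implementation: the `Step` type, the `place_on` action name, and every function here are hypothetical, and the "observation" is simply a list of cube colors from bottom to top rather than anything inferred from camera images.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One human-readable plan step (hypothetical structure)."""
    action: str
    block: str
    target: str


def plan_from_observation(stack_bottom_to_top):
    """Turn an observed stack, listed bottom to top, into ordered steps."""
    pairs = zip(stack_bottom_to_top, stack_bottom_to_top[1:])
    return [Step("place_on", upper, lower) for lower, upper in pairs]


def describe(steps):
    """Render the plan as numbered lines a human reviewer can check."""
    return [
        f"{i}. {s.action}: put {s.block} on {s.target}"
        for i, s in enumerate(steps, 1)
    ]


def execute(steps, approved):
    """Apply the steps only after a human has approved the plan.

    Returns a mapping from each placed block to the block beneath it.
    """
    if not approved:
        raise PermissionError("plan was not approved by a human reviewer")
    on_top_of = {}
    for s in steps:
        on_top_of[s.block] = s.target
    return on_top_of


if __name__ == "__main__":
    plan = plan_from_observation(["red", "blue"])
    print("\n".join(describe(plan)))
    print(execute(plan, approved=True))
```

The design point is that the plan stays a plain, readable list between perception and execution, so a person can catch a mistake before the robot moves.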

A major advantage of this approach is that researchers don't have to feed the system a large amount of hand-labeled training data. Instead, the system generates its data synthetically, producing the labeled examples the robot needs to learn and complete tasks. Additionally, by using an image-centric domain randomization approach (the first time this has been tried with robots), the synthetic data is highly diverse and is not tied to a particular environment or camera.
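To make the idea of domain randomization concrete, here is a minimal sketch in Python. It is a generic illustration, not the researchers' pipeline: a real system would render an image from each sampled scene, while this sketch only samples the scene parameters. All field names and value ranges below are assumptions chosen for the example.

```python
import random


def random_scene(rng, cube_colors=("red", "green", "blue")):
    """Sample one synthetic scene; every field name here is illustrative."""
    cubes = [
        {
            "color": color,
            # Ground-truth pose: known exactly because we generated it,
            # so no human has to label anything.
            "x": rng.uniform(-0.5, 0.5),
            "y": rng.uniform(-0.5, 0.5),
            "yaw_deg": rng.uniform(0.0, 360.0),
        }
        for color in cube_colors
    ]
    return {
        "cubes": cubes,
        # Nuisance factors are randomized so that a model trained on the
        # data cannot rely on one lighting setup, background, or camera.
        "light_intensity": rng.uniform(0.2, 2.0),
        "background_id": rng.randrange(1000),
        "camera_height_m": rng.uniform(0.5, 1.5),
        "camera_pitch_deg": rng.uniform(30.0, 80.0),
    }


def make_dataset(n, seed=0):
    """Generate n randomized, automatically labeled scenes."""
    rng = random.Random(seed)
    return [random_scene(rng) for _ in range(n)]


if __name__ == "__main__":
    for scene in make_dataset(3):
        print(scene["camera_height_m"], scene["cubes"][0])
```

Because the labels come from the generator itself, the dataset can be made as large and as varied as needed without manual annotation.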

Researchers Stan Birchfield and Jonathan Tremblay will present their research this week at the International Conference on Robotics and Automation. Next, they plan to keep exploring how synthetic data can extend their methods to other scenarios.