Google explains the Pixel 3's improved AI portraits

A little machine learning goes a long way.

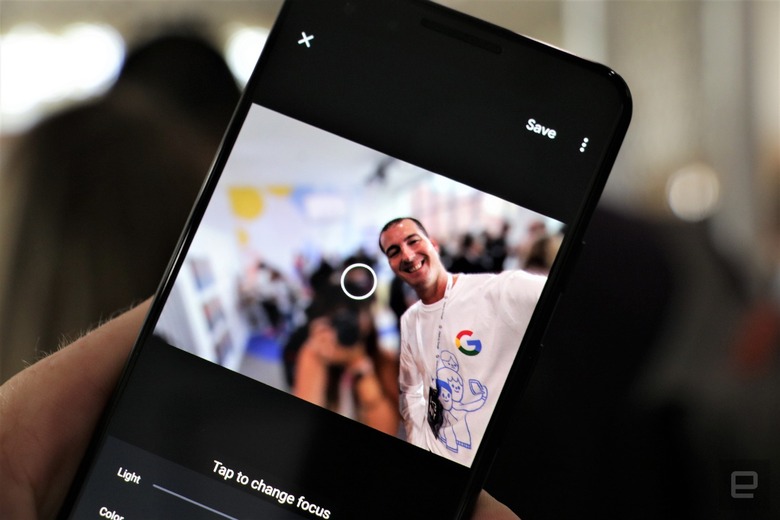

Google's Pixel 3 takes more accurate portrait photos than its predecessor did at launch, which is no mean feat when you realize that the upgrade comes solely through software. But just what is Google doing, exactly? The company is happy to explain. It just posted a look into the Pixel 3's (or really, the Google Camera app's) Portrait Mode that illustrates how its AI changes produce portraits with fewer visual glitches.

When the Pixel 2 launched, Google used a neural network and the camera's phase-detect autofocus (namely, the parallax effect it offers) to determine what's in the foreground. This doesn't always work when you either have a scene that doesn't change much or are shooting through a small aperture, though. Google tackled this with the Pixel 3 by teaching a neural network to predict depth using a myriad of factors, such as the typical size of objects and the sharpness of various points in the scene. You should see fewer of the glitches that tend to come up in portrait photos, such as background objects that are still sharp.
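To see why parallax alone can struggle, it helps to look at the textbook stereo relationship between depth and parallax shift (disparity). The sketch below is not Google's code, and the focal length and baseline values are made-up illustrative numbers rather than Pixel specifications. It only shows that when the two viewpoints are very close together, as they are with views taken through a single small lens, the shift between a near and a far object shrinks to a fraction of a pixel.

```python
def disparity_from_depth(focal_px: float, baseline_m: float, depth_m: float) -> float:
    """Textbook stereo triangulation: disparity = focal * baseline / depth."""
    if depth_m <= 0:
        raise ValueError("depth must be positive")
    return focal_px * baseline_m / depth_m


def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Inverse of the relation above: depth = focal * baseline / disparity."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


# Hypothetical values chosen only for illustration (not real Pixel numbers).
FOCAL_PX = 3000.0          # focal length expressed in pixels
WIDE_BASELINE_M = 0.001    # 1 mm between the two viewpoints
NARROW_BASELINE_M = 0.0005 # a smaller aperture means an even shorter baseline

for baseline in (WIDE_BASELINE_M, NARROW_BASELINE_M):
    near = disparity_from_depth(FOCAL_PX, baseline, 2.0)  # subject at 2 m
    far = disparity_from_depth(FOCAL_PX, baseline, 4.0)   # background at 4 m
    print(f"baseline={baseline * 1000:.1f} mm: "
          f"near={near:.3f} px, far={far:.3f} px, gap={near - far:.3f} px")
```

With these assumed numbers, halving the baseline also halves the gap between subject and background, leaving less signal to separate them. That is the kind of gap the extra cues described above (object size, sharpness) are meant to fill.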

It required some creative technology to train this neural network, Google said. The company created a "Frankenphone" with five Pixel 3s to capture synchronized depth data, eliminating the issues with aperture and parallax.

While you might not be happy that Pixels still rely on single cameras for photos (thus limiting your photographic options), this does illustrate the advantage of using AI. Google can improve the image quality without tying it to hardware upgrades.