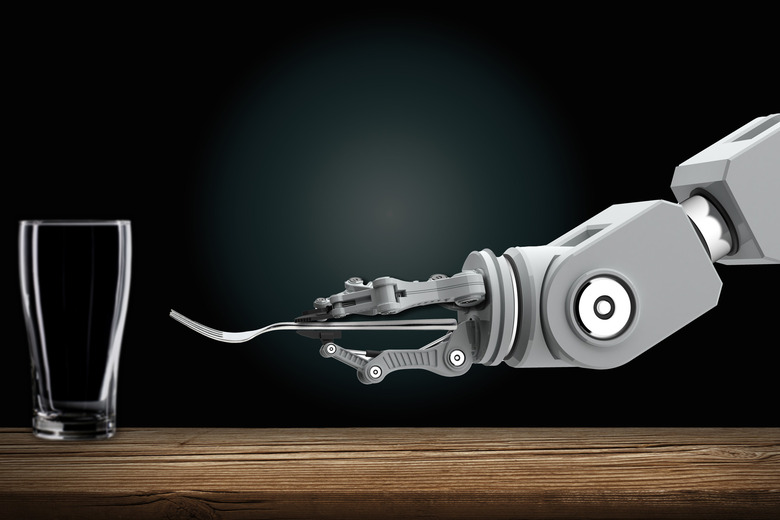

Robot learns to set the dinner table by watching humans

You could one day teach a robot just by performing a task yourself.

To date, teaching a robot to perform a task has usually involved direct coding, trial-and-error tests or handholding the machine. Soon, though, you might just have to perform that task as you would on any other day. MIT scientists have developed a system, Planning with Uncertain Specifications (PUnS), that helps robots learn complicated tasks they would otherwise stumble over, such as setting the dinner table. Instead of the usual approach, in which the robot receives rewards for performing the right actions, PUnS has the robot hold "beliefs" over a range of candidate specifications written in linear temporal logic, a formal language that lets it reason about what it has to do right now and in the future.
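To make "beliefs over specifications" a little more concrete, here is a minimal Python sketch. It is not the PUnS implementation: it assumes a simplified flavor of linear temporal logic evaluated over finite traces, where a trace is a list of the facts that hold at each step. The table-setting propositions, the candidate formulas and their probabilities are all hypothetical, invented for illustration.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Tuple, Union

Trace = List[FrozenSet[str]]  # the set of facts that hold at each time step

@dataclass(frozen=True)
class Atom:
    name: str

@dataclass(frozen=True)
class Not:
    arg: "Formula"

@dataclass(frozen=True)
class And:
    left: "Formula"
    right: "Formula"

@dataclass(frozen=True)
class Eventually:  # F phi: phi holds now or at some later step
    arg: "Formula"

@dataclass(frozen=True)
class Until:  # phi U psi: psi holds at some step, and phi holds at every step before it
    left: "Formula"
    right: "Formula"

Formula = Union[Atom, Not, And, Eventually, Until]

def holds(f: Formula, trace: Trace, i: int = 0) -> bool:
    """Evaluate formula f at step i of a finite trace."""
    if isinstance(f, Atom):
        return i < len(trace) and f.name in trace[i]
    if isinstance(f, Not):
        return not holds(f.arg, trace, i)
    if isinstance(f, And):
        return holds(f.left, trace, i) and holds(f.right, trace, i)
    if isinstance(f, Eventually):
        return any(holds(f.arg, trace, j) for j in range(i, len(trace)))
    if isinstance(f, Until):
        for j in range(i, len(trace)):
            if holds(f.right, trace, j):
                return True
            if not holds(f.left, trace, j):
                return False
        return False
    raise TypeError(f"unknown formula: {f!r}")

# Hypothetical beliefs: each candidate specification paired with the
# probability that it is what the human demonstrator intended.
plate, fork, knife = Atom("plate"), Atom("fork"), Atom("knife")
BELIEFS: Dict[str, Tuple[Formula, float]] = {
    "set everything": (And(Eventually(plate), And(Eventually(fork), Eventually(knife))), 0.5),
    "plate before fork": (Until(Not(fork), plate), 0.3),
    "fork before plate": (Until(Not(plate), fork), 0.2),
}

def satisfied_mass(trace: Trace) -> float:
    """Total belief in the candidate specifications that this trace satisfies."""
    return sum(p for f, p in BELIEFS.values() if holds(f, trace))

# Each step records what is on the table so far.
TRACE: Trace = [frozenset(), frozenset({"plate"}), frozenset({"plate", "fork"}),
                frozenset({"plate", "fork", "knife"})]
print(satisfied_mass(TRACE))
```

In this toy trace the plate goes down before the fork, so it satisfies "set everything" and "plate before fork" but not "fork before plate", covering 0.8 of the robot's belief.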

To nudge the robot toward the right outcome, the team set criteria that help the robot act on its overall beliefs. Depending on the criterion, the robot can aim to satisfy the single most probable formula, the greatest number of formulas, or the formulas with the least chance of failure. A designer could optimize a robot for safety if it's working with hazardous materials, or for consistent quality if it's a factory model.
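The sketch below shows how such criteria can steer a robot toward different plans from the same beliefs. It is a hypothetical illustration, not MIT's code: specifications are simplified to Python predicates over an ordering of three items, the probabilities are made up, and "least chance of failure" is interpreted here as minimizing the total belief assigned to specifications the plan violates.

```python
from itertools import permutations
from typing import Callable, Dict, Tuple

Plan = Tuple[str, ...]
Spec = Tuple[Callable[[Plan], bool], float]  # (check, belief probability)

def before(a: str, b: str) -> Callable[[Plan], bool]:
    """Hypothetical ordering constraint: item a is placed before item b."""
    return lambda plan: plan.index(a) < plan.index(b)

# Hypothetical beliefs learned from demonstrations (probabilities sum to 1).
SPECS: Dict[str, Spec] = {
    "plate before fork": (before("plate", "fork"), 0.60),
    "fork before plate": (before("fork", "plate"), 0.15),
    "fork before knife": (before("fork", "knife"), 0.15),
    "knife before plate": (before("knife", "plate"), 0.10),
}

def most_likely(plan: Plan) -> float:
    """Only care about the single most probable specification."""
    check, _ = max(SPECS.values(), key=lambda s: s[1])
    return 1.0 if check(plan) else 0.0

def max_coverage(plan: Plan) -> float:
    """Satisfy as many candidate specifications as possible."""
    return float(sum(check(plan) for check, _ in SPECS.values()))

def least_failure(plan: Plan) -> float:
    """Minimize the belief mass of the specifications the plan violates."""
    return -sum(p for check, p in SPECS.values() if not check(plan))

def choose(criterion: Callable[[Plan], float]) -> Plan:
    """Pick the highest-scoring ordering among all possible orderings."""
    candidates = list(permutations(("plate", "fork", "knife")))
    return max(candidates, key=criterion)

for criterion in (most_likely, max_coverage, least_failure):
    print(criterion.__name__, choose(criterion))
```

With these made-up numbers, maximum coverage picks fork, knife, plate, which satisfies three of the four specifications but breaks the most probable one. The least-failure criterion instead picks plate, fork, knife, and the most-likely criterion accepts any plan that puts the plate before the fork.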

In early testing, MIT's system proved much more effective than traditional approaches. A PUnS-based robot made only six mistakes in 20,000 attempts at setting the table, even when the researchers threw in complications like hiding a fork: the robot simply finished the rest of the task and came back to the fork once it reappeared. In that way, it demonstrated a human-like ability to hold onto a clear overall goal and improvise.

The developers ultimately want the system not only to learn by watching but also to react to feedback, such as verbal corrections or a critique of its performance. That will take much more work, but it hints at a future where your household robots could adapt to new duties just by watching you set an example.