Google I/O 2025: Live updates on Gemini, Android XR, Android 16 updates and more

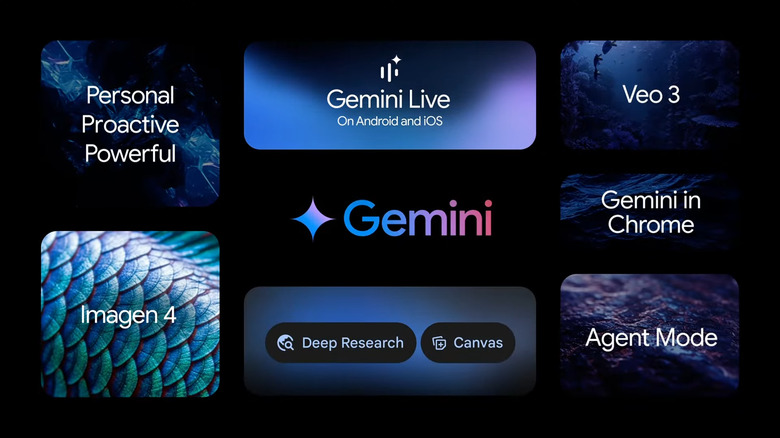

A cavalcade of new Gemini AI services and enhancements has already been announced.

Google I/O has kicked off in Mountain View, California, and the initial keynote provided a laundry list of big AI announcements. A parade of Google execs starting with CEO Sundar Pichai took the stage to detail how the search giant is weaving its Gemini artificial intelligence model throughout its entire catalog of online services, as the company continues to vie for supremacy in the AI space with rivals like OpenAI, Microsoft and a host of others. Among the big announcements today: a new AI-powered movie creator called Flow; virtual clothing try-ons enabled by photo uploads; real-time AI-powered translation coming to Google Meet; Project Astra's computer vision features getting considerably more knowledgeable; and a big upgrade to Google's Veo image and video generation options. Google shared a live demo of real-time translation via its XR glasses, too. See everything announced at Google I/O for a full recap.

The initial keynote has ended, but there will be a developer-centric keynote thereafter (4:30PM ET / 1:30PM PT). If you want a recap of the event as it happened, scroll down to read our full liveblog, anchored by our on-the-ground reporter Karissa Bell and backed up by off-site members of the Engadget staff.

You can also rewatch Google's keynote in the embedded video above or on the company's YouTube channel. Note that the company plans to hold breakout sessions through May 21 on a variety of topics relevant to developers.


-

Stick around for Karissa's hands-on impressions of some of the things Google announced today, by the way, including those new XR Glasses!

-

Thanks to everyone who tuned in for this wild AI ride with us.

We have more stories about everything Google announced today on Engadget, so please check us out there.

-

Google's AI Mode lets you virtually try clothes on by uploading a single photo

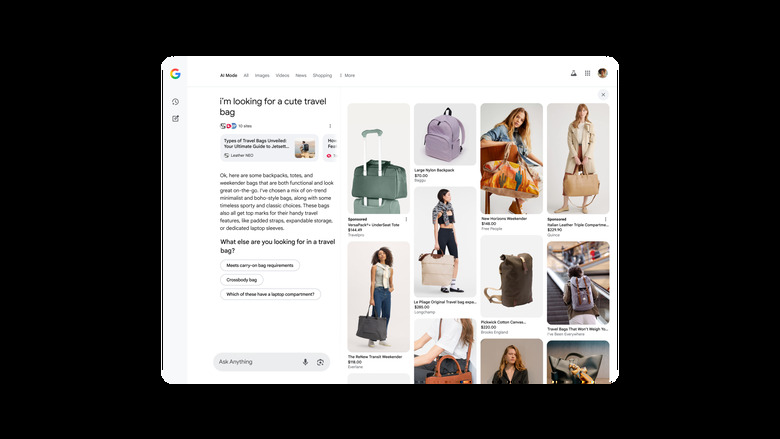

A screenshot of the Shopping interface in AI Mode in Search, showing the results in response to a prompt

As part of its announcements for I/O 2025 today, Google shared details on some new features that would make shopping in AI Mode more novel. It's describing the three new tools as being part of its new shopping experience in AI Mode, and they cover the discovery, trying on and checkout parts of the process. These will be available "in the coming months" for online shoppers in the US.

The first update is when you're looking for a specific thing to buy. The examples Google shared were searches for travel bags or a rug that matches the other furniture in a room. By combining Gemini's reasoning capabilities with its shopping graph database of products, Google AI will determine from your query that you'd like lots of pictures to look at and pull up a new image-laden panel.

Read more: Google's AI Mode lets you virtually try clothes on by uploading a single photo

-

Ok. Now that the AI deluge has slowed down, I can see why people are excited for this technology. But at the same time, it almost feels like Google is throwing features against a wall to see what sticks. There is A LOT going on, and trying to cover a million different applications in a two-hour presentation gets messy fast.

-

Aaaaaand it looks like that's it. Whew!

-

Wifi has been slipping here since that AR glasses demo so I'm a bit late here, but it's worth lingering on that for a moment. It's been more than a decade since Google first tried augmented reality glasses with Google Glass. The tech (and our perception of it) has advanced so much since then, it's great to see Google trying something so ambitious. So far this demo feels on par with what Meta showed with its Orion prototype last year (with a few bumps.)

-

Now Pichai is recounting a story about going in a driverless Waymo with his father and remembering how powerful this technology will be.

-

With the show wrapping, what did we think of the announcements Google made today?

-

FireSat was just announced. It can detect fires as small as 270 square feet.

-

Pichai says Google is building something new called FireSat. It's designed to more closely monitor fire breakouts, which is especially important to folks in places like California.

-

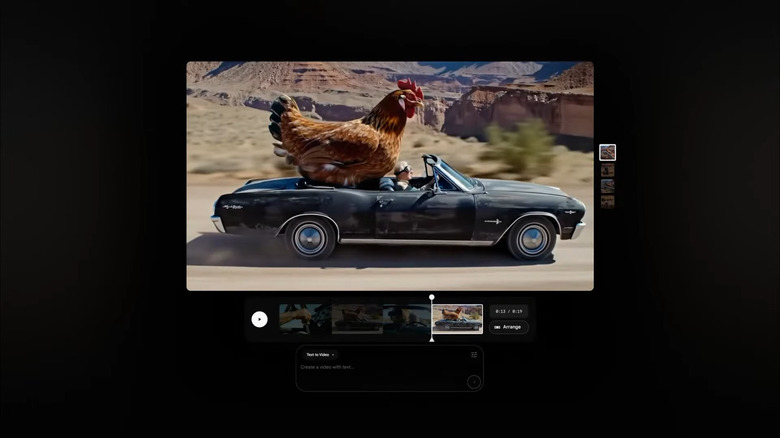

Google's Veo 3 AI model can generate videos with sound

Google

As part of this year's announcements at its I/O developer conference, Google has revealed its latest media generation models. Most notable, perhaps, is Veo 3, the first iteration of the model that can generate videos with sound. It can, for instance, create a video of birds with audio of their singing, or a city street with the sounds of traffic in the background. Google says Veo 3 also excels in real-world physics and in lip syncing. At the moment, the model is primarily available for Gemini Ultra subscribers in the US within the Gemini app and for enterprise users on Vertex AI. (It's also available in Flow, Google's new AI filmmaking tool.)

Read more: Google's Veo 3 AI model can generate videos with sound

-

Everything announced at Google I/O today.

-

Sundar Pichai is back, ostensibly to wrap up the show.

Apparently Gemini got mentioned more times than AI itself during the keynote.

-

Google says it's working hard to create a platform for these types of glasses, with retail devices available as early as later this year.

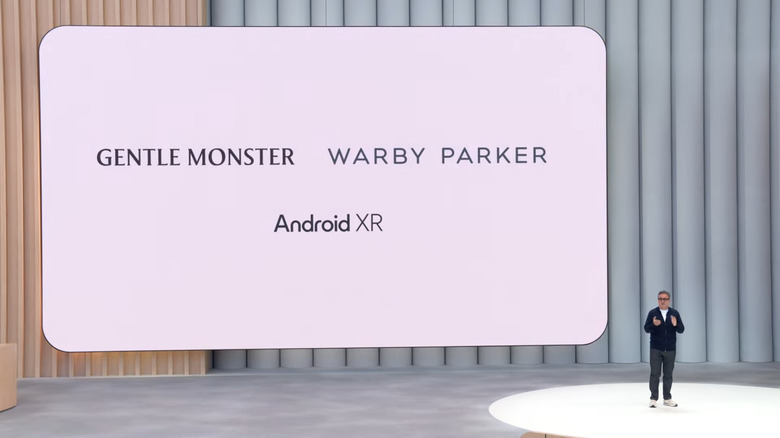

Gentle Monster and Warby Parker are slated to be the first glasses makers to build something on the Android XR platform.

-

Gentle Monster and Warby Parker will be the first brands to build with Android XR.

-

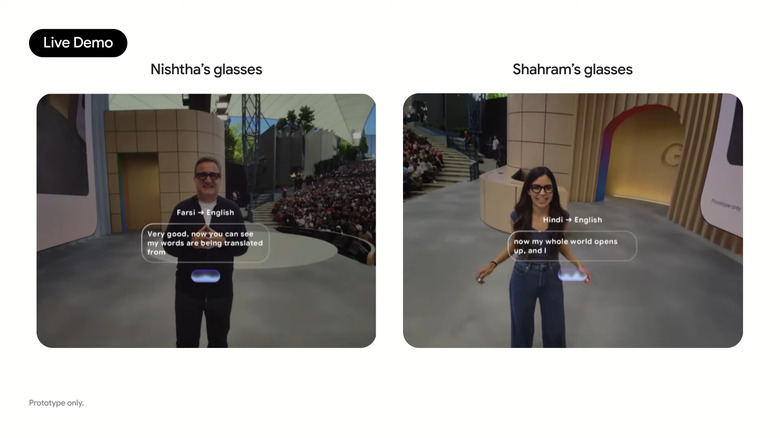

It seems Google has tempted the live demo gods one too many times, because one pair of the glasses got confused and thought Izadi was speaking Hindi.

-

Live chat translation using the glasses.

-

Google Search Live will let you ask questions about what your camera sees

Google I/O 2025

One of the new AI features that Google has announced for Search at I/O 2025 will let you discuss what it's seeing through your camera in real time. Google says more than 1.5 billion people use visual search on Google Lens, and it's now taking the next step in multimodality by bringing Project Astra's live capabilities into Search. With the new feature called Search Live, you can have a back-and-forth conversation with Search about what's in front of you. For instance, you can simply point your camera at a difficult math problem and ask it to help you solve it or to explain a concept you're having difficulty grasping.

Read more: Google Search Live will let you ask questions about what your camera sees

-

Now Izadi is doing what he calls a risky demo by attempting to speak in Farsi to another presenter in real time with translation.

-

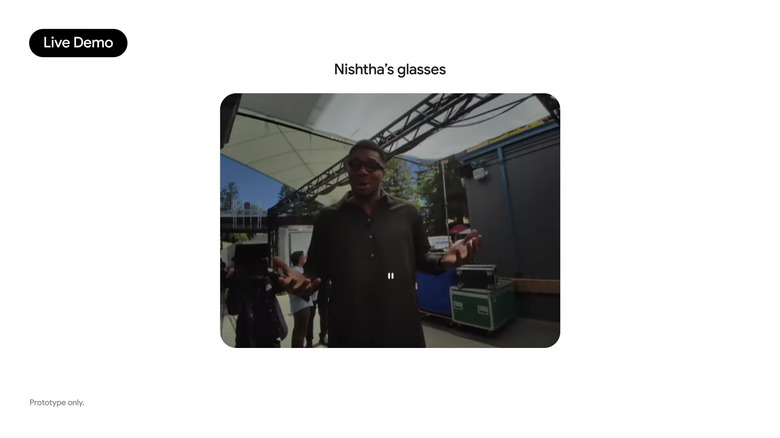

Of course, the glasses can take photos too.

-

Izadi reveals that he's even using his glasses as a personal teleprompter during the keynote.

-

We first saw these glasses in a demo Google showed off last year during the Project Astra showcase.

-

The glasses can respond to voice commands while also highlighting notifications for things like reminders. They incorporate some Project Astra-like features to remember things that you've seen. They can even display heads-up turn-by-turn directions to specific locations.

-

A close up of Bhatia's glasses.

-

Live demo of Nishtha Bhatia, Product Manager at Google, Glasses & AI, wearing the new Android XR glasses.

-

Google is showing how an unnamed pair of Android XR glasses can respond to voice requests to mute sounds.

Apparently Giannis of the Milwaukee Bucks is backstage wearing these glasses too.

-

Google is showing some AR glasses that look pretty similar to Meta's Orion (maybe a tad less chunky). Pretty unclear from this demo so far what the field of view might be, it looks like it might be fairly small.

-

Of course, dating back to the old Google Glass, Android XR will be available on lightweight smart glasses as well.

Izadi says "It's a natural form factor for Android XR."

-

Glasses with Android XR.

-

Made by Samsung, Project Moohan is set to be the first Android XR device.

It allows you to talk to Gemini about anything you can see, either in the real world or on a virtual screen.

Project Moohan is slated to go on sale later this year.

-

Project Moohan on VR headsets.

-

Google introduces Deep Think reasoning for Gemini 2.5 Pro

The Gemini logo beside the words Gemini 2.5 on a black background

Google has started testing a reasoning model called Deep Think for Gemini 2.5 Pro, the company has revealed at its I/O developer conference. According to DeepMind CEO Demis Hassabis, Gemini Deep Think uses "cutting-edge research" that gives the model the capability to consider multiple hypotheses before responding to queries.

Google says it got an "impressive score" when evaluated using questions from the 2025 United States of America Mathematical Olympiad competition. However, Google wants to take more time to conduct safety evaluations and get further input from safety experts before releasing it widely. That's why it's making Deep Think initially available to trusted testers via the Gemini API in order to get their feedback first.

Read more: Google introduces the Deep Think reasoning model for Gemini 2.5 Pro and a better 2.5 Flash

-

Google says it's reimagining all of its most popular apps for XR.

-

Android XR is the first Android platform built in the Gemini era.

-

But the most exciting one is Android XR.

It's meant to work on both headsets and smart glasses.

-

And finally we're getting to Android XR! Interesting that Google is specifically calling out AR Glasses and smart glasses w/ AI capabilities (a la Meta). IMO glasses have been the best thing for XR, headsets are just so limited.

-

Izadi is reiterating how many of these new Gemini features are coming soon to a wide range of devices including smartwatches, phones and cars.

-

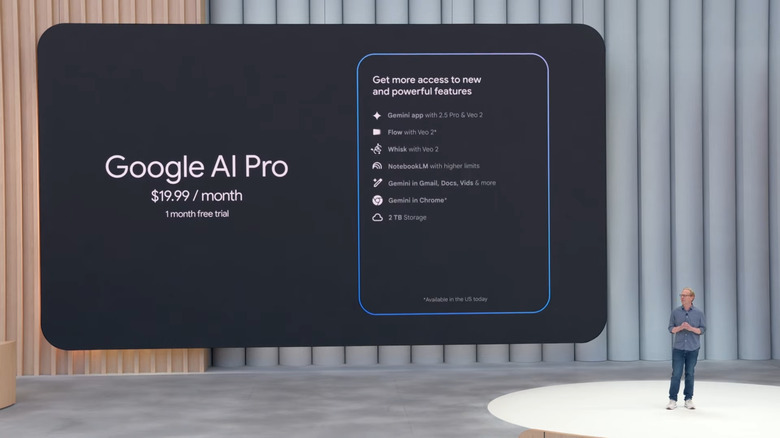

This is a good reminder that all this AI is very resource-intensive (and expensive) so Google has some new plans for everyone who wants access to more prompts. Notably, not a word yet today about the environmental impact of all this.

-

Next up is Shahram Izadi.

-

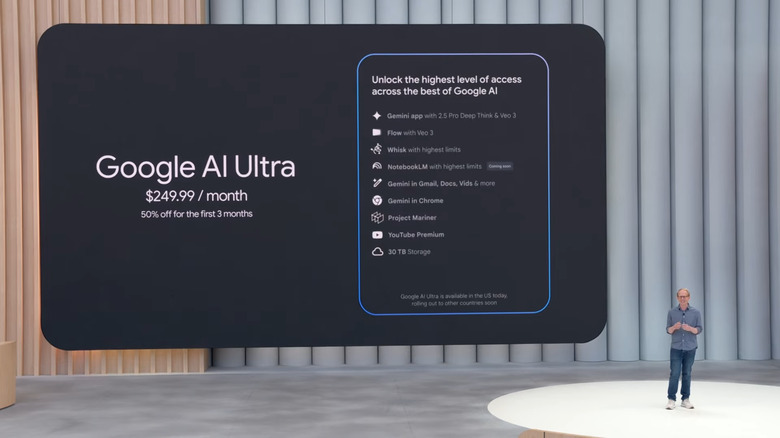

For context, $250 is $50 more than either OpenAI or Anthropic charge for their respective top-tier plans.

-

Google AI Ultra price.

-

What do we think of the $250 price for the Ultra plan?

-

$250 a month for Google AI Ultra is A LOT.

-

Google AI Pro price.

-

Now comes pricing for some of Google's various AI plans including a new Ultra tier.

-

Google's new text-to-speech can switch languages on the fly

Google I/O keynote

Google is enhancing Gemini's text-to-speech (TTS). On Tuesday at Google I/O 2025, the company previewed a new TTS feature, built on native audio output, that can "converse in more expressive ways."

Google's Tulsee Doshi showed a quick demo of the Gemini 2.5 TTS models onstage in Mountain View. It showed an AI-powered voice that sounds more natural and less robotic, with subtler nuances.

The TTS can converse in over 24 languages, switching between them seamlessly.

Read more: Google's new text-to-speech can switch languages on the fly

-

If I remember, didn't we see some AI music video making thing last year? Don't think we ever got an update on that.

-

Now Google is presenting testimonies from some AI filmmakers highlighting the potential of its tools.

-

I'm curious to know what the generation limit of Flow is; most video generation models are limited to about 60 seconds of footage at most.

-

Flow allows you to describe a scene and have it come to life.

-

In essence, you can use specific prompts in Flow to create multiple scenes across a video timeline.

-

Woodward is back on stage to talk about a new AI-powered filmmaking tool that combines the best of Imagen and Veo called Flow.

-

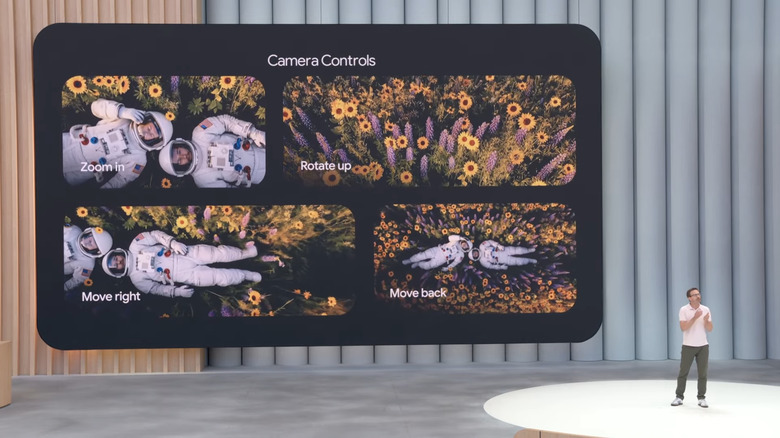

Veo offers tools that are akin to directing a virtual movie set.

-

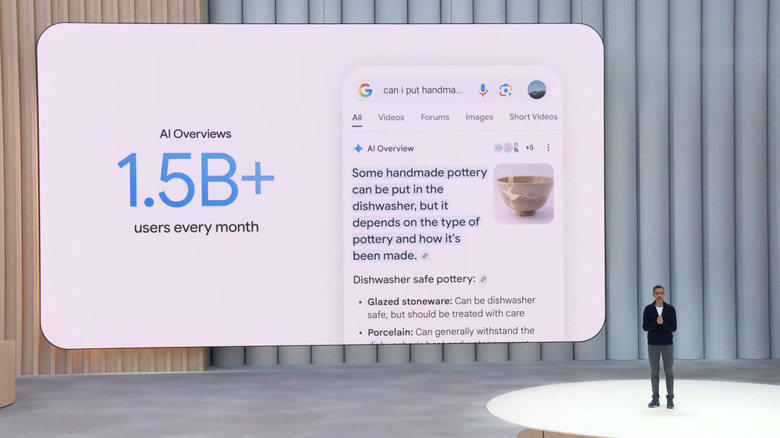

1.5 billion people see Google's AI Overviews each month

Google touted some big numbers regarding its AI Overviews feature during its I/O conference. On the stage, company CEO Sundar Pichai said that the service has 1.5 billion monthly users. That's around 18 percent of the total human population of planet Earth, give or take.

For the remaining 82 percent of humanity, AI Overviews are those little recaps that appear at the top of Google queries, like this helpful example:

Screenshot of a Google AI Overview definition of a nonsensical idiom.

Read more: 1.5 billion people see Google's AI Overviews each month

-

Veo now supports reference-powered video so you can have more control about how a specific scene or shot looks.

-

Google is partnering with Hollywood filmmakers to highlight Veo as a creative tool.

-

Google has done a lot of work on watermarking AI-generated content and now it's making those easier to detect (though it's still a manual process from what I could see). This is important as AI-generated content becomes even more prevalent, but most people who encounter this stuff aren't looking for watermarks.

-

To help protect and make people aware of what art is AI generated, Google is expanding its SynthID detector, which includes better detection for watermarks and the ability to identify specific sections of a piece.

The updated SynthID is available now.

-

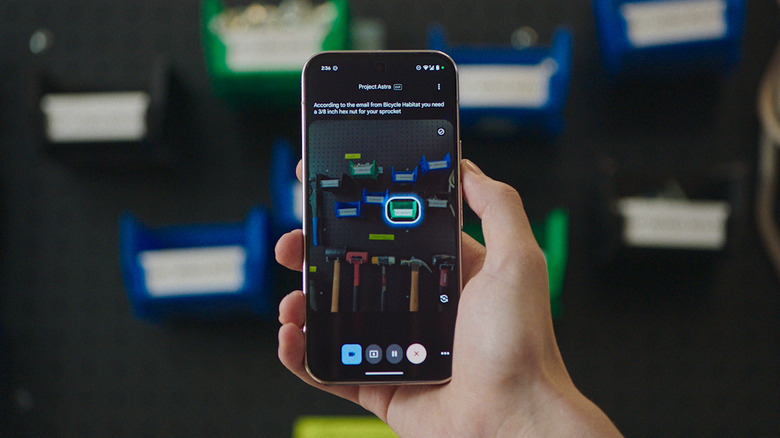

Project Astra, Google's vision for a universal AI assistant, is pulling into focus

Google's Project Astra.

Last year at Google I/O, one of the most interesting demos was Project Astra, an early version of a multimodal AI that could recognize your surroundings in real-time and answer questions about them conversationally. While the demo offered a glimpse into Google's plans for more powerful AI assistants, the company was careful to note that what we saw was a "research preview."

One year later though, Google is laying out its vision for Project Astra to one day power a version of Gemini that can act as a "universal AI assistant." And Project Astra has gotten some important upgrades to help the company accomplish this. Google has been working on upgrading Astra's memory — the version we saw last year could only "remember" for 30 seconds at a time — and added computer control so Astra can now take on more complex tasks.

In its latest video showcasing Astra, Google shows the assistant browsing the web and pulling out specific pieces of information necessary to complete a task (in this example, fixing a mountain bike). Astra is also able to look through past emails to find specific specs of the bike in question and call a local bike shop to inquire about a replacement part.

Read more: Project Astra, Google's vision for a universal AI assistant, is pulling into focus

-

Google Lyria 2 is available starting today.

-

Jason Baldridge, natural language research scientist, discussing Music AI Sandbox.

-

There's a video showing how artists are using AI to create a base melody for a song before applying their own touches.

-

Google is working with big name musicians to improve its generative audio capabilities.

-

Now on stage is Jason Baldridge.

-

Gemini AI assistant.

-

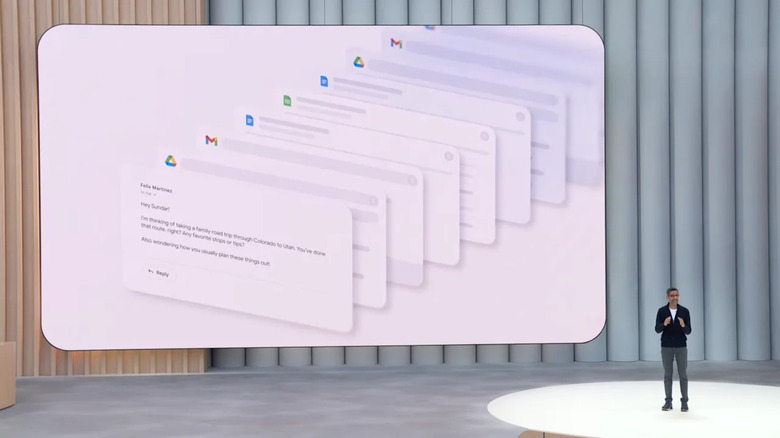

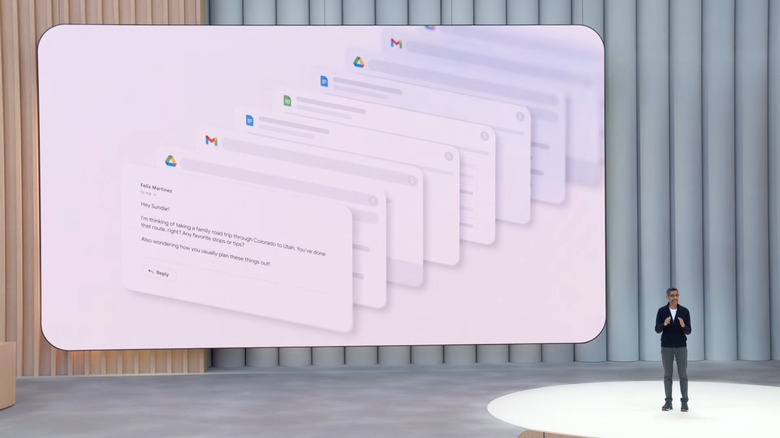

Personalized AI-powered Smart Replies are coming to Gmail

Google I/O 2025

Like it or not, Google's Gemini AI is worming its way into more of your Workspace apps. At I/O 2025, CEO Sundar Pichai announced that its upcoming Gmail app will have a new feature called Personalized Smart Replies. The idea is that with your permission, Gemini can examine your past emails and Google docs, then help you craft responses to personal- or business-oriented emails.

Onstage at I/O, Pichai used the example of a friend taking a road trip through Colorado to Utah, a trip the friend knew Pichai had taken before. Gemini is able to look through all the notes in Drive, search email for related reservations and find an itinerary in Google Docs. It'll pull all that stuff together and then draft an email that matches the typical tone, style and even words that Pichai commonly uses.

Read more: Personalized AI-powered Smart Replies are coming to Gmail

-

"We're entering a new era of creation."

-

Video created with Veo 3

-

Next up is Veo 3, which is an AI-powered video creation engine.

It's available today and features improved visual quality and the ability to generate audio natively. This includes sound effects, background sounds and dialogue.

-

Veo 3 is available today.

-

Woodward claims Imagen 4 is 10x faster than the previous model.

-

Woodward says Imagen 4 features improved ability to create text with proper spelling and more creative flexibility.

-

Imagen 4 images.

-

Coming to the Gemini app is Imagen 4, which is Google's latest image creation model.

-

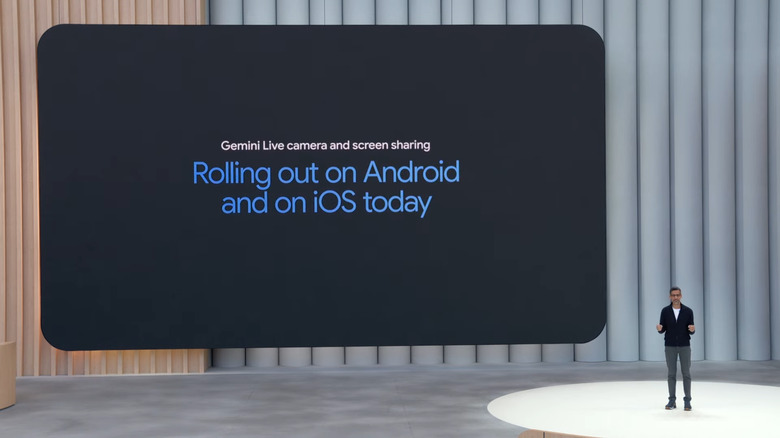

Screen and camera sharing in Gemini Live is heading to all Android and iOS devices

An example of camera sharing using Google's Gemini Live AI.

Last month, Gemini Live camera and screen sharing became available on Pixel phones as part of Google's April feature drop. But today, at Google I/O 2025, the company announced that it's bringing that capability to all compatible Android and iOS devices as part of a free update.

Available inside the Gemini app, the ability to share your phone's camera or content from your screen with Google's AI is meant to make it easier and more natural to get answers about complex topics. For example, instead of describing a situation only using your voice, you could simply point your handset's camera at something while Gemini uses object recognition to analyze the scene and do things like identify a particular species of animal or tell you what kind of screw or bolt you might need to perform a repair.

Read more: Screen and camera sharing in Gemini Live is heading to all Android and iOS devices

-

Gemini in Chrome is rolling out this week in the US.

-

Gemini is also coming to Chrome, as a personal AI that understands the context of the website you're currently looking at.

The rollout is starting this week for folks in the US.

-

Woodward says Google Canvas has the ability to transform research into various new forms like a podcast or a simulation.

-

In a nice update to Google's Deep Research tool, you can now upload files and documents to make them a part of the reports Gemini generates.

-

Deep Research will now let you upload your own files, and will soon let you research across Google Drive and Gmail.

-

Camera and screen sharing in Gemini Live is rolling out today for Android and iOS.

-

Woodward says conversations in Gemini Live are 5x longer than traditional questions.

-

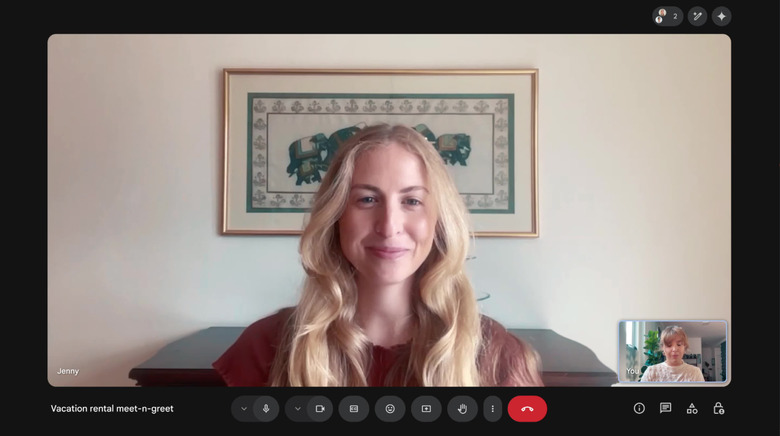

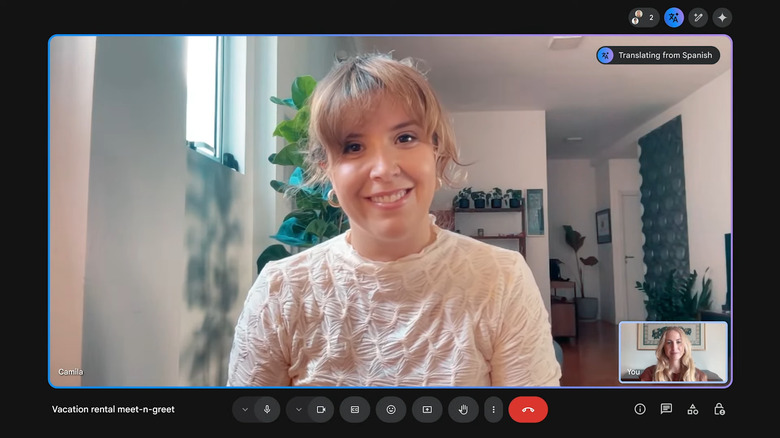

Google brings live translation to Meet, starting with Spanish

Live translation is coming to Google Meet, starting with Spanish.

If you find Google's live translation tools useful, you'll soon be able to use them more naturally during video calls and meetings. The company announced today that it's bringing the feature to Google Meet. Starting this week, AI-powered, real-time Spanish translation will be available in the app. Google says more languages are on the way and that the technology is "very, very close to having a natural and free-flowing conversation."

During a brief demo, live translation in Meet matched the speaker's tone and cadence, and was even able to channel expressions. There's no doubt this will be useful for many people, especially on work calls with colleagues in other countries. Live translation will allow everyone to speak in the language they're most comfortable with, and the technology will do the heavy lifting. Before now, you had to rely on live captions in Google Meet to do any translation, so not having to read those will allow users to be more in-tune with the conversation.

Read more: Google brings live translation to Meet, starting with Spanish

-

When it comes to being proactive, Woodward says Google wants Gemini not just to remind you about upcoming events, but to provide you with helpful tools so that you can be better prepared for a project or task.

-

Google wants to connect your search history with Gemini, to be able to deliver results with more personal context.

-

Now Josh Woodward is here to talk about how Gemini is going to be the most personal, powerful and proactive AI.

-

For what it's worth, as the person who had to write about the new Shopping tools in AI Mode, I'm here with some context. Virtual try-on has been available in Google Search for a while, but in past versions, you were only given the option to select from a variety of models to pick the one most closely resembling your body. Today's news around try-on in AI Mode is that you can see the clothing on yourself thanks to the fashion-centric image generation models, and that you can do so by uploading a single photo of yourself.

-

Goodbye Project Starline, hello Google Beam 3D video conferencing

A banner image with the Google Beam logo on the left and a person sitting in front of the Beam screen talking to another person, who appears to pop slightly out of the screen.

Google first teased Project Starline in 2021, billing it at the time as a "magic window" that uses special hardware, computer vision and machine learning to create an almost holographic video call experience. Since then, we've checked out an early version of the experiment for ourselves and learned last year that the company is teaming up with HP to bring a scaled-down version of the product to enterprise clients. At I/O 2025 today, Google announced that Project Starline "is evolving into an AI-first 3D video communication platform" called Beam. CEO Sundar Pichai said onstage that the first devices will be available later this year to "select customers," though there's no word on pricing just yet.

Read more: Goodbye Project Starline, hello Google Beam 3D video conferencing

-

I also like that this "try-on" doesn't rely on camera-based AR like what a lot of marketers use. I've found that AR can only approximate what something looks like on; this seems like it could be more accurate.

-

Visual shopping and agentic try on are slated to be available in the coming months.

-

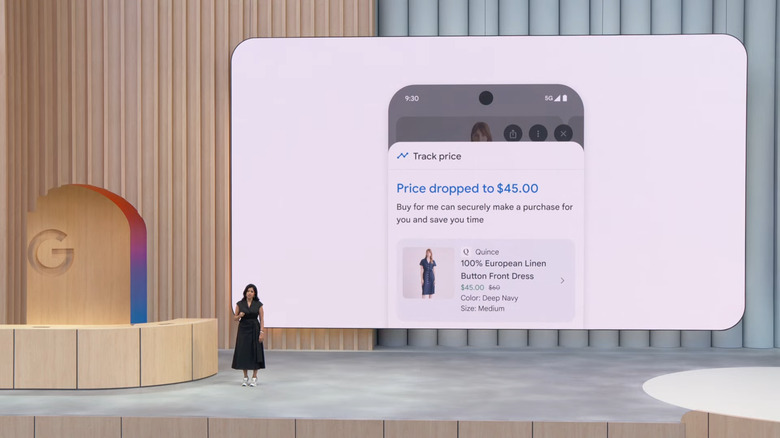

The new tool can also track the price of items for you and buy when you're ready.

-

Of course, if you want to buy something, AI Mode can help with that too, with the ability to notify users if an item has a price drop. Or even buy it automatically.

-

I think this is the most the Shoreline audience has clapped for a demo, which says something about how niche some of the products and updates Google has announced today are.

-

So many companies have been trying to crack the "virtual try-on" experience for years now with ... varied results. I have to say, this demo of being able to see an article of clothing actually on you is compelling, but I wonder how well this will work in practice. She's saying it's advanced enough to visualize details like how the material will fall on your body, which would be pretty cool if it works. There's nothing worse than having to return something you thought you'd love because it doesn't work on your body.

-

The try on experience is able to simulate depth, shape and even how a piece might move and stretch in real life.

-

Google announced a new feature that lets you try on clothes virtually.

-

Users can select a specific piece of clothing and supply a picture of themselves to create an AI-generated preview.

-

This also works for clothes, with a new "try on" feature that will simulate how clothes online might look on your body.

-

Now Google is doing a demo about how Agent Mode can help you find the right rug for your home based on specific metrics like color and durability.

-

(Also yes, I've been covering I/O for a long time! My first-ever was a full decade ago in 2015.)

-

Search Live is coming to AI Mode this summer.

-

Patel notes that Google first launched camera-based search here in 2017 with Google Lens (this was long before we described this kind of thing as "multimodal") and I will say I remember that moment well. It definitely felt like the future of search in a lot of ways.

-

Now Patel is moving on to share how Google is using multi-modal search to make it easier to identify things in pictures.

Similar to camera sharing in Gemini Live, Search Live is a new tool that uses a device's camera to view and recognize things or even entire scenes.

-

Search can see what you see and give you live feedback.

-

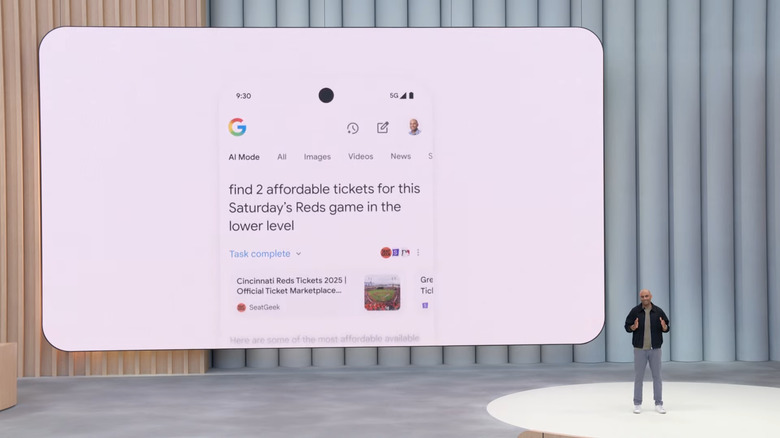

An example of how AI Mode works in search... in this case, buying ball game tickets.

-

Now Patel is using Agent Mode to help someone find and purchase tickets with real-time pricing and inventory information.

-

Patel says complex analysis is coming to financial and sports categories later this summer.

-

Deep Search is an offshoot of Deep Research, which Google has offered since the end of last year. However, I imagine most people haven't had a chance to try it since it was limited to the Gemini app.

-

Now on stage is Rajan Patel, who is here to talk about how AI Mode can help explain current events.

As a sports fan, he is demoing Agent Mode's ability to analyze how torpedo bats are helping players in baseball.

-

For anyone keeping track, we're approaching the one hour mark, or about halfway through this keynote. Lots more to come.

-

Google Deep Search will be available this summer.

-

Reid says AI mode will soon get more personalized suggestions.

It can learn things like your preference for outdoor seating at restaurants.

It will also look at your inbox to get the specific times and dates you'll be visiting a certain place.

Of course, you will be able to manage these app integrations at will.

-

AI Mode will help provide more personalized suggestions for your Google searches.

-

I don't know, some of these example queries also feel like the kind of thing I would just add "Reddit" to a normal Google search to find. (And Google has a partnership with Reddit so it could very well pull from there in some cases.)

-

It works by creating a number of simultaneous queries across a range of subjects related to your search.

-

Reid is doing a demo showing how Agent Mode can create a number of varied suggestions ranging from food to music to events happening in the area.

-

AI Mode will be available right in the search bar and, over time, many of its features will get baked right into Google Search's core functionality.

-

She is highlighting a change in how people use Google Search by asking longer and more complicated questions.

Now, Google is trying to level up that usage with Agent Mode powered by Gemini 2.5 Pro.

-

I've written extensively about AI Mode. For the uninitiated, it's a chatbot built directly into Google Search, and starting today Google is integrating Gemini 2.5 Pro into the product.

-

Gemini 2.5 is also coming to Search.

-

I feel like today is the first time we've heard Google describe its AI as "thinking." Lots of companies talk about "reasoning," which I guess is something different. I can't help wondering about the implications of anthropomorphizing AI even more.

-

Now Hassabis is talking about how Google is getting closer to AGI with something new called World models.

-

Google isn't releasing Deep Think for 2.5 Pro today. Instead, the company plans to carry out additional safety testing before rolling it out to the public.

-

New Gemini 2.5 Pro Deep Think announced today.

-

Demis is back on stage to talk about Deep Think, a new enhanced reasoning mode within Gemini 2.5 Pro.

-

You can sign up for the Jules public beta now.

-

So we basically just saw some more vibe coding with some multimodal features on top. These demos are impressive, but I wonder how devs will use stuff like this IRL. Most devs here probably aren't building cutesy little websites like what we just saw (if there are any devs out there watching who want to disagree though, please correct me!)

-

Jules is an agent inside Gemini that is now available as a public beta.

-

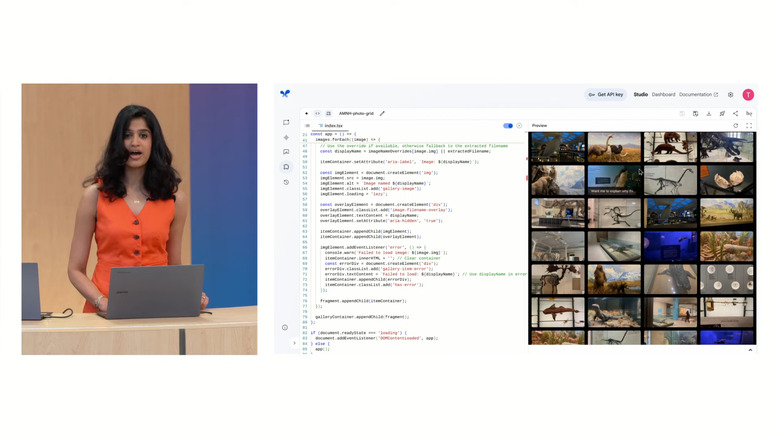

Doshi used Gemini 2.5 Pro to create a 3D sphere of images from a grid of photos, and then used Gemini to add built-in narration for each picture.

-

Doshi is now doing a demo showing how Gemini can improve web design, particularly when working in 3D.

-

Doshi is demoing Gemini 2.5 Pro in real-time.

-

Gemini 2.5 Flash will surface "thoughts" so you can see how it is arriving at answers and working through a query. Google says this provides more transparency (though in my experience with similar features on other models it also slows things down a bit.)

-

Honestly, all this jumping back and forth between different versions of Gemini is hard to follow.

Apparently Gemini 2.5 Flash is more power efficient than before as well.

-

According to Doshi, Gemini 2.5 Flash is 22 percent more efficient than the model it replaces.

-

Doshi says Gemini 2.5 is Google's most secure model yet.

-

Tulsee Doshi, Gemini Product Strategy Lead, is demoing the 2.5 Flash and Pro native audio output.

-

Gemini 2.5 is getting a new text-to-speech feature that sounds more natural and can even do things like whisper. It can also switch between languages using the same voice.

-

Okay, the AI's whisper voice was slightly off-putting if I'm being honest. But the new text to speech voices do sound much less robotic and "AI-like" on the whole.

-

Now on stage is Tulsee Doshi.

-

The tagline seems to be: Imagine an

-

According to Demis, Gemini 2.5 Flash is better in nearly "every dimension" relative to its predecessor.

-

And now Google is showing a quick highlight reel about 30 things you can build with AI.

-

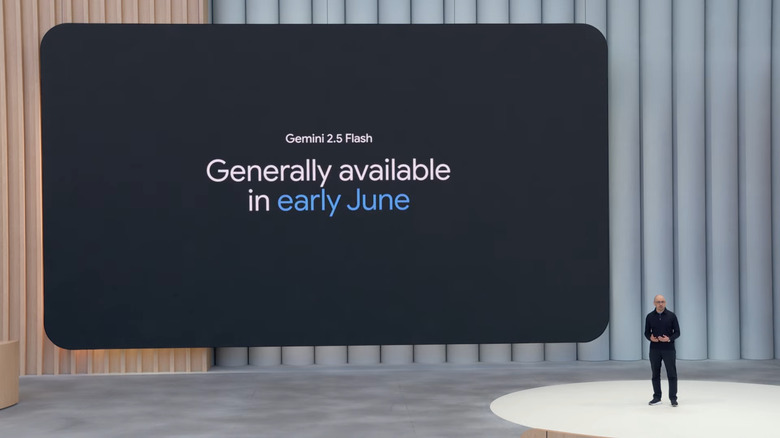

An updated Gemini 2.5 Flash is coming in June, with 2.5 Pro coming soon after.

-

On the flip side is Gemini 2.5 Flash, which is Google's lightweight AI model. It's getting an update and will be generally available in early June.

-

Demis says Gemini 2.5 Pro is the most intelligent AI model in the world. It can simulate entire cities and is also the leading model for learning.

-

Demis Hassabis, CEO of Google DeepMind, is discussing Gemini 2.5 Pro.

-

Lots of cheers for Hassabis (who also won a Nobel Prize since last year's I/O).

-

Next up is Demis Hassabis, from Google's DeepMind team.

-

I don't know about you guys, but if a friend asks me for travel recommendations, I'm usually so excited to offer them, and wouldn't want to offload that task to an algorithm.

-

Gemini can help you customize your Gmail responses by using your style and tone.

-

Meanwhile in Gmail, Pichai is showing off personalized smart replies, which allows AI to respond for you, but in a more natural and familiar way that *might* fool your friends and

-

Is this a safe space to admit I've never used a smart reply? Even if Gemini can train itself to sound exactly like me, it feels weird to let it take on my identity.

-

Agent Mode coming soon to subscribers.

-

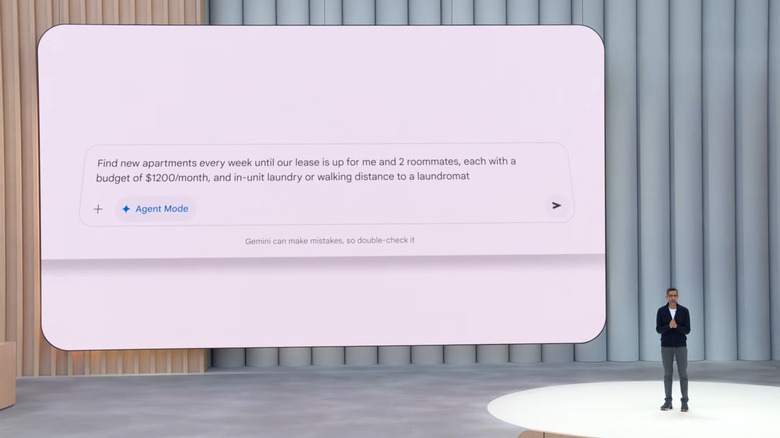

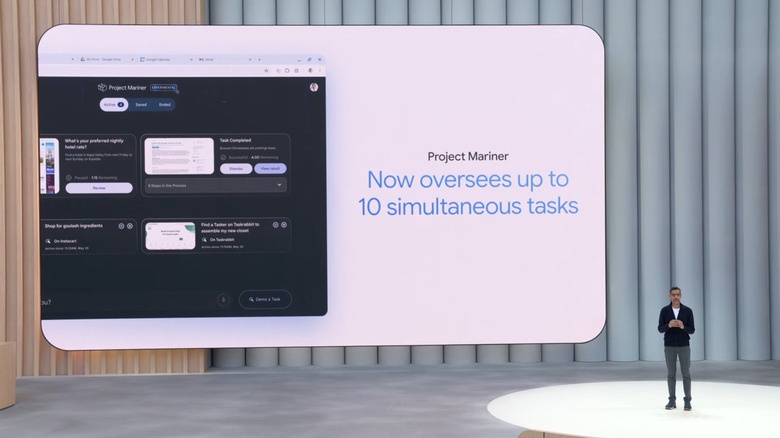

In the Gemini app, there's a new feature called Agent Mode.

It uses Project Mariner to do things like find new apartment listings based on someone's specific parameters.

It will be available "soon" to subscribers in the Gemini app.

-

The idea of off-loading a tedious task like apartment-hunting to an AI agent is definitely appealing, but it's one of those tasks that's also really important to get right. Not sure I'd trust the task to an experimental AI, personally.

-

Project Mariner, an AI agent for the web, is coming this year.

-

Project Mariner is being tuned for developers, so they can teach AI to repeat certain functions in code.

-

Sam and I got a chance to try out a very early version of Astra last year and Google has clearly made a lot of progress since then, including Gemini Live, which incorporates some Astra features.

-

If Project Mariner doesn't sound familiar, it's because it started life as Jarvis before Google began previewing it with "trusted testers" at the end of last year.

-

Gemini Live will roll out for Android and iOS users today.

-

Another announcement: Camera and screen sharing with Gemini Live is being made available to all iOS and Android devices starting today as a free update in the Gemini app.

-

Next up is Project Astra, which uses AI-powered object recognition to identify objects captured by the camera on someone's phone.

-

Right, Project Starline was a child of the pandemic, and it will be interesting to see if companies are willing to spend money on the necessary hardware when so many of them have enacted strict return-to-office policies.

-

Translation is one of the use cases for AI I'm very bullish about — exciting to see real-time translation tech in more places.

-

Google Meet will be able to translate languages in real time.

-

Announcement: English and Spanish translation is now available in Google Meet.

-

The next demo is on-the-fly translation powered by Gemini. Google is showing two people, one speaking English and one speaking Spanish, conversing almost in real time.

-

Alright, the first big update is Google Beam, which until now was called Starline. This is a great example of what I mentioned earlier about how long it can take some of these advancements to become real products. Starline first debuted at I/O in 2021, I believe.

-

Google Beam, a video chat service, will be available later this year.

-

The goal is to use AI to make video meetings and conferencing feel much more natural and realistic.

-

Next he highlights Project Starline as an important AI feature, which is now evolving into what's being called Google Beam.

-

Sundar says that AI Overviews are bringing Gen AI to more people than any other product, which sounds similar to something we hear a lot from Zuckerberg, who wants Meta AI to be the "most used AI Assistant." In both cases, though, I wonder how many of those users are finding their way to these Gen AI products intentionally.

-

AI Overviews has 1.5B+ users each month.

-

Pichai says we're entering a new phase of AI development.

-

Pichai says the Gemini app already has more than 400 million active monthly users.

-

The number of tokens processed each month has apparently increased by 50x in just the last year.

-

I'll note here Gemini Plays Pokémon was inspired by Claude Plays Pokémon, which I wrote about in April.

-

One thing about an AI-heavy keynote is that it makes for some fairly dense visuals. So far we're seeing lots of graphs and charts.

-

Pichai also says Google's various AI models offer some of the best price to performance across the industry.

-

A few weeks ago, Gemini completed a full playthrough of Pokémon Blue!

-

It's interesting to see Google tout its LMArena scores when Meta got caught gaming the leaderboard in recent weeks.

-

Unsurprisingly, Sundar opens by talking about Gemini's recent advancements and claims Gemini 2.5 Pro is the top model by benchmarks. He also says it's the "fastest growing" on Cursor (a popular AI vibe coding platform), which is an interesting way to spin the fact that other models are likely more widely used there.

-

Pichai says Google ranks first across a number of different AI metrics.

"Gemini is the fastest growing model of the year."

-

Pichai says Google is shipping its AI products as fast as possible and that the company has a bunch of new models to show off.

-

The Google I/O event has officially started.

-

First on stage is Google CEO Sundar Pichai. Apparently it's the start of Gemini season.

-

So besides the music (which is "You Get What You Give" by The New Radicals), it seems like everything in the clip was created by AI.

-

First up is a Google I/O intro clip with a notable caption that says it was created by Google Veo and Imagen.

-

Ok, here we go!

-

I had a ham and cheese sandwich and a peanut butter sandwich here at home. I'm ready.

-

Last call for drinks and snacks. If history is any indication, we're expecting the keynote to run about two hours long.

-

Five minutes to go!

-

Hey, I think I was at least partially right. Toro y Moi is saying that the convergence of music and AI is going to happen whether we like it or not, so we might as well embrace it.

-

Don't remind me, I missed that Justice show and I will forever regret it.

-

The amphitheater is filling up.

The vibes are slowly picking up as we get close to the 10 minute mark, and this place is starting to fill up. (Event staff keep trying to herd folks to those empty seats behind the booth but people are, understandably, reluctant.)

-

I'm always curious how Google decides on the artists it wants to perform at I/O. At the end of the 2018 conference, the company had Justice perform, which, let me tell you, was a vibe.

-

I'm just waiting for Toro y Moi to say that all of the sounds used in this set were created by AI.

-

LOL. Toro y Moi just asked the crowd if they are awake. Like come on, this set is super relaxing and you know it.

-

Yea, Igor. As I think about it, sometimes I feel like the bar for AI should be if people would still care if they didn't know a specific tool is powered by machine learning.

-

Opposite vibe from the unhinged Loop Daddy set we got last year, huh?

-

Nice of Google to give Chaz a custom Pataguchi puffer with his stage name engraved on it.

-

I do love Toro y Moi and appreciate the more chill beats.

-

On stage is Toro y Moi, who is playing some very relaxing synth tunes before the show.

-

Sam, to your point, I hope we don't get Google announcing a new AI model and then touting its performance through math and coding benchmarks. At this point, we need something more tangible that speaks to the average person.

-

Oh hey, ask and ye shall receive. It seems like the livestream is actually piping in live footage now!

-

Haha, got it.

Back to your previous point, if we look at the industry as a whole, it feels like there are a lot of different AI services (ChatGPT, Anthropic/Claude, Google, etc.) that can do things like summarize emails, generate images or create reminders and calendar appointments. So now it's really up to companies like Google to further differentiate their products and prove how useful AI can really be.

-

And, fwiw, you're not missing much on the stream right now. The live vibe coding sesh has wrapped up and we're back to tunes and AI facts playing on-screen.

-

Agreed, Sam. That can be one of the more frustrating things about I/O — the most exciting things we see are often so early it can take a few more years before we see them come to fruition. I'd say Project Astra, which we both got a chance to demo last year, definitely falls into that category. So does mixed reality/AR, though we've been seeing some good momentum from companies like Meta and Snap over the last year.

-

For anyone who wants to watch along with us, here's the link to the official Google I/O livestream on YouTube.

-

As I'm tuning in remotely, I kind of wish the pre-show livestream would show footage of the stage instead of a canned soundtrack and colorful graphics.

-

As a quick refresher, here's the hands-on with Project Astra Karissa and I wrote at Google I/O last year.

-

That said, there are some genuinely exciting things like Project Astra and Starline that feel almost like they came out of a sci-fi novel. And as someone who appreciates MR headsets, I'm very curious to see what kind of news Google has related to Android XR.

-

On one hand, I get it. Google has been championing the power of AI before basically everyone. But to me, the issue is that a lot of these updates are hard to parse, especially as there's often a lot of overlap between new features and previous versions.

-

Also, I really hope everyone is prepared for 120 minutes of pure AI info dump. With Google having hosted The Android Show last week, it really feels like they were getting that stuff out of the way so they could hit us over the head with AI, AI and then more AI.

-

Okay, the Taylor Swift remixes have stopped and we're now being treated to a live demo of two Googlers doing ... improvisational vibe coding?

-

Alternatively, you might want to get a stretch in while you can. We're not 100 percent sure, but based on the block Google carved out in its I/O Calendar, the keynote looks like it's going to run for a full two hours.

-

Google I/O keynote countdown

-

And here's your one hour warning! Now's a great time to grab some food or drink before I/O kicks off for real at 1PM ET/10AM PT.

-

Scam prevention is one of those things where I reallyyy hope AI lives up to all the hype. AI technology has supercharged *so many* scams, it would be nice if AI could help undo some of that damage too

-

Speaking of The Android Show, I just checked my phone (a Pixel 9 Pro Fold) and I saw that I got hit with a toll road scam text for the first time, which is something Google said they are using AI to crack down on.

Unfortunately, I don't think that update has rolled out quite yet, so hopefully that arrives soon.

-

No cars yet, but I haven't walked the whole space yet. I made a beeline straight for breakfast and then the keynote line :) But I'd be shocked if there wasn't some Android Auto representation here today.

-

Karissa, have you spotted any fun cars at I/O yet?

I was wondering if they would have anything on display after announcing Gemini was heading to Android Auto and vehicles with Google built-in last week during The Android Show.

-

May or may not be a coincidence????????

Oh hell no. Apple knew exactly what it was doing by trying to steal some of Google's thunder and sending out WWDC invites on the first day of I/O.

-

I've made it into the keynote (and am in the shade.. for now, at least). The DJ is spinning (Taylor Swift at the moment) and the crowd is just starting to come in. Let's hope the wifi holds!

The Google I/O stage.

-

Cherlynn, let me be clear. I'm not putting ketchup on my BECs. But that's pretty common at any NYC bodega.

-

In what may or may not be a coincidence, Apple just sent out invites to the keynote for WWDC 2025 this morning. That's probably tastier than your breakfast sandwich, Karissa.

-

Any idea of what kind of musical performance we can expect this year? Last year's Loop Daddy set was unhinged 😅

-

KETCHUP!?!?!?! SAM!?!??! KETCHUP!?!??! ON A BEC!?!?!?!?

-

Here's hoping you can get a spot underneath the roof, Karissa! There was one year at I/O where I had to sit in the sun for the whole keynote and I got a wicked sunburn.

-

Waiting to get into the Shoreline Amphitheater

Karissa just got in line to grab a seat!

-

As for the sandwich, I have to represent east coast preferences. All I want is a bacon, egg and cheese. Maybe some ketchup if that's what you're into. Everything else is just a distraction.

-

I have always dreamed about reviewing the food that gets put out during meetings at CES, but there's never enough time!

-

You're both tough critics! It was pretty decent (and had some aioli not evident in the pic.) Hopefully enough to keep me going through what will likely be a marathon keynote

-

Hmm... I agree with you Igor. A solid 6.5. It looks dry but delicious with some moisture. If I were there I'd also be rating the bathrooms, but from past experience the restrooms at Shoreline do not measure up to the ones at Apple Park or Google's own campus. One of these days I'll start a blog reviewing Big Tech campuses. Maybe.

-

Not gonna lie, lettuce on a bacon and egg sandwich is a bit weird. But that wouldn't stop me lol.

-

For the curious, here's the breakfast sandwich Google served to Karissa. Chat, how would you rate it? I'd give it a 6 or a 7.

A breakfast sandwich with bacon, egg, tomato and a sad-looking piece of lettuce.

-

I just got to the press area and am waiting for some snacks and coffee ... I think I saw some pastries and bagels but will update.

-

Karissa, what's the breakfast and snack situation like at the press tent?

-

We're still a ways out from the beginning of the keynote, but here's your two hour warning.

Google I/O keynote countdown

-

I'm through security and waiting to be let into Shoreline with the rest of the early birds. When not being used for I/O, this is a concert venue and Google definitely tries to keep that vibe for this event.

-

For one thing, there was already a healthy amount of Android news revealed last week, which you can catch up on by reading our roundup of everything Google announced at the Android Show. In case you don't want to open another tab, just know that Android 16 will have a new design language called Material 3 Expressive, as well as new device-location and anti-scam measures. Google also said it's bringing Gemini to more places, including Wear OS 6, Google TV, Android Auto and cars with Google built in.

-

Glad to see Karissa made it. Traffic on I/O day is always dicey. But I'd recognize that dusty parking lot anywhere.

-

A woman holding up a badge that says "Karissa Bell, Engadget" with a red label above the name saying "Press." Behind her is a tent in a large parking lot.

Karissa has not only arrived safely at Shoreline Amphitheater, but has also acquired her badge! Looks like it's going to be lovely weather for the show, and probably a good idea to lather on sunscreen if you're there!

-

Our senior reporter Karissa Bell will be reporting live from Google I/O, while senior reviewer Sam Rutherford will be leading this liveblog, backed up by AI reporter Igor Bonifacic. I'll be around for support, logistics, vibes and snacks. The show kicks off at 1pm ET, but as you can see, we couldn't wait to start. There's been a lot, honestly.

-

Hello everyone! Welcome to our liveblog of Google's annual I/O developer conference. I feel as if our liveblog tool has gotten more than its fair share of use these last two weeks. If it all feels very familiar to you too, that's likely because we had two liveblogged events just last week, one of which was of the company's Android showcase.

Update, May 19 2025, 1:01PM ET: This story has been updated to include details on the developer keynote taking place later in the day, as well as tweak wording throughout for accuracy with the new timestamp.

Update, May 20 2025, 9:45AM ET: This story has been updated to include a liveblog of the event.

Update, May 20 2025, 2:08PM ET: This story has been updated to include an initial set of headlines coming out of the I/O keynote.

Update, May 20 2025, 4:20PM ET: Added additional headlines and links based on the flow of news from the initial keynote.