Is DALL-E's art borrowed or stolen?

Creative AIs can't be creative without our art.

In 1917, Marcel Duchamp submitted a sculpture to the Society of Independent Artists under a false name. Fountain was a urinal, bought from a toilet supplier, with the signature R. Mutt on its side in black paint. Duchamp wanted to see if the society would abide by its promise to accept submissions without censorship or favor. (It did not.) But Duchamp was also looking to broaden the notion of what art is, saying a ready-made object in the right context would qualify. In 1962, Andy Warhol would twist convention with Campbell's Soup Cans, 32 paintings of soup cans, each one a different flavor. Then, as before, the debate raged about whether something mechanically produced – a urinal, or a soup can (albeit hand-painted by Warhol) – counted as art, and what that meant.

Now, the debate has been turned upon its head, as machines can mass-produce unique pieces of art on their own. Generative Artificial Intelligences (GAIs) are systems which create pieces of work that can equal the old masters in technique, if not in intent. But there is a problem, since these systems are trained on existing material, often using content pulled from the internet, from us. Is it right, then, that the AIs of the future are able to produce something magical on the backs of our labor, potentially without our consent or compensation?

The new frontier

The most famous GAI right now is DALL-E 2, OpenAI's system for creating "realistic images and art from a description in natural language." A user could enter the phrase "teddy bears shopping for groceries in the style of Ukiyo-e," and the model will produce pictures in that style. Similarly, ask for the bears to be shopping in Ancient Egypt and the images will look more like dioramas from a museum depicting life under the Pharaohs. To the untrained eye, some of these pictures look like they were drawn in 17th-century Japan, or shot at a museum in the 1980s. And these results are coming despite the technology still being at a relatively early stage.

OpenAI recently announced that DALL-E 2 would be made available to up to one million users as part of a large-scale beta test. Each user will be able to make 50 generations for free during their first month of use, and then 15 for every subsequent month. (A generation is either the production of four images from a single prompt, or the creation of three more if you choose to edit or vary something that's already been produced.) Additional 115-credit packages can be bought for $15, and the company says more detailed pricing is likely to come as the product evolves. Crucially, users are entitled to commercialize the images produced with DALL-E, letting them print, sell or otherwise license the pictures born from their prompts.
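To put those prices in per-image terms, here is a rough back-of-the-envelope sketch. It assumes that one credit buys one generation, which the pricing above implies but does not state outright; the constant and function names are purely illustrative.

```python
# Figures quoted in the article. The mapping of one credit to one
# generation is an assumption, not something the pricing spells out.
PACK_PRICE_USD = 15.0
PACK_CREDITS = 115
IMAGES_PER_PROMPT = 4      # a fresh prompt returns four images
IMAGES_PER_VARIATION = 3   # an edit or variation returns three more


def cost_per_image(images_per_generation: int) -> float:
    """Return the paid cost, in dollars, of a single image."""
    cost_per_generation = PACK_PRICE_USD / PACK_CREDITS
    return cost_per_generation / images_per_generation


if __name__ == "__main__":
    print(f"image from a new prompt: ${cost_per_image(IMAGES_PER_PROMPT):.3f}")
    print(f"image from a variation:  ${cost_per_image(IMAGES_PER_VARIATION):.3f}")
```

Under that assumption, a paid image works out at roughly three to four cents, which is the figure to keep in mind when weighing the right to sell the results.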

These systems did not, however, develop an eye for a good picture in a vacuum, and each GAI has to be trained. Artificial Intelligence is, after all, a fancy term for what is essentially a way of teaching software how to recognize patterns. "You allow an algorithm to develop that can be improved through experience," said Ben Hagag, head of research at Darrow, an AI startup looking to improve access to justice. "And by experience I mean examining and finding patterns in data." "We say to the [system] 'take a look at this dataset and find patterns,'" he added, and those patterns then go on to form a coherent view of the data at hand. "The model learns as a baby learns," he said, so if a baby looked at 1,000 pictures of a landscape, it would soon understand that the sky – normally oriented across the top of the image – would be blue while land is green.

Hagag cited how Google built its language model by training a system on several gigabytes of text, from the dictionary to examples of the written word. "The model understood the patterns, how the language is built, the syntax and even the hidden structure that even linguists find hard to define," Hagag said. Now that model is sophisticated enough that "once you give it a few words, it can predict the next few words you're going to write." In 2018, Google's Ajit Varma told The Wall Street Journal that its smart reply feature had been trained on "billions of Gmail messages," adding that initial tests saw options like 'I Love You' and 'Sent from my iPhone' offered up since they were so commonly seen in communications.
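The idea of predicting the next word from patterns in earlier text can be sketched with a toy example. This is a deliberately tiny word-pair counter, not how Google's model works, and the sample messages are a made-up stand-in for a real mailbox.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Optional


def train_bigrams(corpus: List[str]) -> Dict[str, Counter]:
    """Count, for every word, which words follow it in the corpus."""
    following: Dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            following[current][nxt] += 1
    return following


def predict_next(model: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical training messages, invented for this illustration.
MESSAGES = [
    "i love you",
    "i love you too",
    "i love this idea",
    "sent from my iphone",
    "sent from my iphone",
]

model = train_bigrams(MESSAGES)

if __name__ == "__main__":
    print(predict_next(model, "love"))  # the follower seen most often
    print(predict_next(model, "my"))
```

Even at this scale the failure mode Varma described is visible: whatever phrase appears most often in the training messages is what the system offers back, whether or not it is a sensible reply.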

Developers who do not have the benefit of access to a data set as vast as Google's need to find data via other means. "Every researcher developing a language model first downloads Wikipedia then adds more," Hagag said. He added that they are likely to pull down any, and every, piece of available data that they can find. The sassy tweet you sent a few years ago, or that sincere Facebook post, may have been used to train someone's language model, somewhere. Even OpenAI uses social media posts with WebText, a dataset which pulls text from outbound Reddit links which received at least three karma, albeit with Wikipedia references removed.
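The selection rule described for WebText (outbound links with at least three karma, minus Wikipedia) can be expressed as a simple filter. The sketch below is illustrative only: the post records, field names and URLs are hypothetical, and it makes no claim about how OpenAI's own pipeline is written.

```python
from typing import Dict, List
from urllib.parse import urlparse

MIN_KARMA = 3  # threshold quoted in the article


def is_wikipedia(url: str) -> bool:
    """True if the link points at wikipedia.org or one of its subdomains."""
    host = urlparse(url).hostname or ""
    return host == "wikipedia.org" or host.endswith(".wikipedia.org")


def select_links(posts: List[Dict]) -> List[str]:
    """Keep outbound links that cleared the karma bar and are not Wikipedia."""
    return [
        post["url"]
        for post in posts
        if post["karma"] >= MIN_KARMA and not is_wikipedia(post["url"])
    ]


# Hypothetical records; a real scrape would supply these.
POSTS = [
    {"url": "https://blog.example.com/essay", "karma": 12},
    {"url": "https://en.wikipedia.org/wiki/Urinal", "karma": 40},
    {"url": "https://news.example.org/story", "karma": 1},
    {"url": "https://forum.example.net/thread", "karma": 3},
]

if __name__ == "__main__":
    for link in select_links(POSTS):
        print(link)
```

Nothing in a rule like this asks who wrote the page at the other end of the link, which is the point the researchers quoted here keep returning to.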

Guan Wang, CTO of Huski, says that the pulling down of data is "very common." "Open internet data is the go-to for the majority of AI model training nowadays," he said, adding that it's the policy of most researchers to get as much data as they can. "When we look for speech data, we will get whatever speech we can get," he said. This more-is-more approach to data is known to produce less than ideal results, and Ben Hagag cited Riley Newman, former head of data science at Airbnb, who said "better data beats more data," but Hagag notes that often, "it's easier to get more data than it is to clean it."

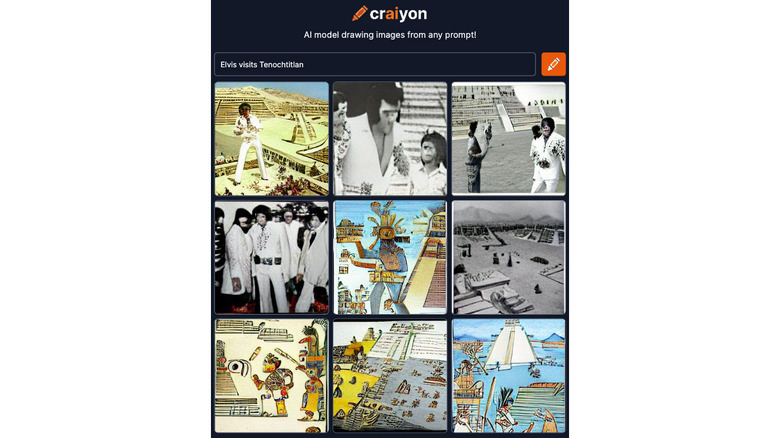

DALL-E may now be available to a million users, but it's likely that people's first experience of a GAI is with its less-fancy sibling. Craiyon, formerly DALL-E Mini, is the brainchild of French developer Boris Dayma, who started work on his model after reading OpenAI's original DALL-E paper. Not long after, Google and the AI development community HuggingFace ran a hackathon for people to build quick-and-dirty machine learning models. "I suggested, 'Hey, let's replicate DALL-E. I have no clue how to do that, but let's do it,'" said Dayma. The team would go on to win the competition, albeit with a rudimentary, rough-around-the-edges version of the system. "The image [it produced] was clear. It wasn't great, but it wasn't horrible," he added. But unlike the full-fat DALL-E, Dayma's team was focused on slimming the model down so that it could work on comparatively low-powered hardware.

Dayma's original model was fairly open about which image sets it would pull from, often with problematic consequences. "In early models, still in some models, you ask for a picture – for example mountains under the snow," he said, "and then on top of it, the Shutterstock or Alamy watermark." It's something many AI researchers have found, with GAIs being trained on those image libraries' public-facing catalogs, which are covered in anti-piracy watermarks.

Dayma said that the model had erroneously learned that high-quality landscape images typically had a watermark from one of those public photo libraries, and that he had since removed those images from his model. He added that some early results also output not-safe-for-work responses, forcing him to make further refinements to his initial training set. Dayma added that he had to do a lot of the sorting through the data himself, and said that "a lot of the images on the internet are bad."

Got sent some moody Russian ruDall-E GAN images last week from my dev piotr, that had shutterstock logos generated in them, oh how we laughed....now looks like the real Dall-E is doing the same... pic.twitter.com/6A2yLFHelw

— @amoebadesign (@amoebadesign) June 8, 2022

But it's not just Dayma who has noticed the regular appearance of a Shutterstock watermark, or something a lot like it, popping up in AI-generated art. Which begs the question, are people just ripping off Shutterstock's public-facing library to train their AI? It appears that one of the causes is Google, which has indexed a whole host of watermarked Shutterstock images as part of its Conceptual Captions framework. Delve into the data, and you'll see a list of image URLs which can be used to train your own AI model, thousands of which are from Shutterstock. Shutterstock declined to comment on the practice for this article.
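A reader who wants to check a URL list like this for themselves could tally the image hosts. The sketch below assumes a tab-separated file with a caption and an image URL on each line; the sample rows and hostnames are invented for illustration, and the two-label domain shortcut is a simplification that would misfire on addresses such as `.co.uk`.

```python
import csv
import io
from collections import Counter
from urllib.parse import urlparse

# Invented sample rows: caption, then image URL, separated by a tab.
SAMPLE_TSV = (
    "snowy mountains at dawn\thttps://image.shutterstock.com/example-1.jpg\n"
    "a horse in a field\thttps://photos.example.org/horse.jpg\n"
    "city skyline at night\thttps://thumb1.shutterstock.com/example-2.jpg\n"
)


def count_sites(tsv_text: str) -> Counter:
    """Tally image URLs by the last two labels of their hostname."""
    counts: Counter = Counter()
    reader = csv.reader(io.StringIO(tsv_text), delimiter="\t",
                        quoting=csv.QUOTE_NONE)
    for row in reader:
        if len(row) < 2:
            continue  # skip malformed lines
        host = urlparse(row[1]).hostname or ""
        # Crude shortcut: "image.shutterstock.com" -> "shutterstock.com".
        site = ".".join(host.split(".")[-2:])
        counts[site] += 1
    return counts


if __name__ == "__main__":
    for site, total in count_sites(SAMPLE_TSV).most_common():
        print(f"{site}\t{total}")
```

Pointed at a real file rather than the sample string, the same tally would show how much of a training list leans on any one photo library.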

Several results from the bigger GAN models, like StyleGAN are even able to recreate the watermark on images from certain websites, namely @Shutterstock It looks like hardly anyone doing ML really cares about privacy or copyright at the moment pic.twitter.com/ADrKzzOzMH

— A Wojcicki (@pretendsmarts) March 16, 2019

A Google spokesperson said that they don't "believe this is an issue for the datasets we're involved with." They also quoted from a Creative Commons report, saying that "the use of works to train AI should be considered non-infringing by default, assuming that access to the copyright works was lawful at the point of input." That is despite the fact that Shutterstock itself expressly forbids visitors to its site from using "any data mining, robots or similar data and/or image gathering and extraction methods in connection with the site or Shutterstock content."

You got to love how the GAN has the shutterstick watermark trained it and tries hard to put it into the image. Also apparently a certain subset of images of horses have all the shutterstock address placed in the same position on the bottom. pic.twitter.com/I7iW1kcuYz

— datenwolf – @datenwolf@chaos.social (@datenwolf) January 9, 2021

Alex Cardinell, CEO at AI startup Article Forge, says that he sees no issue with models being trained on copyrighted texts, "so long as the material itself was lawfully acquired and the model does not plagiarize the material." He compared the situation to a student reading the work of an established author, who may "learn the author's styles or patterns, and later find applicable places to reuse those concepts." He added that so long as a model isn't "copying and pasting from their training data," then it simply repeats a pattern that has appeared since the written word began.

Dayma says that, at present, hundreds of thousands, if not millions of people are playing with his system on a daily basis. That all incurs a cost, both for hosting and processing, which he couldn't sustain from his own pocket for very long, especially since it remains a "hobby." Consequently, the site runs ads at the top and bottom of its page, between which you'll get a grid of nine surreal images. "For people who use the site commercially, we could always charge for it," he suggested. But he admitted his knowledge of US copyright law wasn't detailed enough to be able to discuss the impact of his own model, or others in the space. This is the situation that OpenAI also perhaps finds itself dealing with given that it is now allowing users to sell pictures created by DALL-E.

The law of art

The legal situation is not a particularly clear one, especially not in the US, where there have been few cases covering Text and Data Mining, or TDM. This is the technical term for the training of an AI by plowing through a vast trove of source material looking for patterns. In the US, TDM is broadly covered by Fair Use, which permits various forms of copying and scanning for the purposes of allowing access. This isn't, however, a settled subject, but there is one case that people believe sets enough of a precedent to enable the practice.

Authors Guild v. Google (2015) was brought by a body representing authors, which accused Google of digitizing printed works that were still held under copyright. The initial purpose of the work was, in partnership with several libraries, to catalog and database the texts to make research easier. Authors, however, were concerned that Google was violating copyright, and argued that, even if it wasn't making the text of a still-copyrighted work available publicly, it was prohibited from scanning and storing it in the first place. Eventually, the Second Circuit ruled in favor of Google, saying that digitizing copyright-protected work did not constitute copyright infringement.

Rahul Telang is Professor of Information Systems at Carnegie Mellon University, and an expert in digitization and copyright. He says that the issue is "multi-dimensional," and that the Google Books case offers a "sort of precedent" but not a solid one. "I wish I could tell you there was a clear answer," he said, "but it's a complicated issue," especially around works that may or may not be transformative. And until there is a solid case, it's likely that courts will apply the usual tests for copyright infringement: whether a work supplants the need for the original, and whether it causes economic harm to the original rights holder. Telang believes that countries will look to loosen restrictions on TDM wherever possible in order to boost domestic AI research.

The US Copyright Office says that it will register an "original work of authorship, provided that the work was created by a human being." This is due to the old precedent that the only thing worth copyrighting is "the fruits of intellectual labor," produced by the "creative powers of the mind." In 1991, this principle was affirmed by a case of purloined listings from one phone book company by another. The Supreme Court held that while effort may have gone into the compilation of a phone book, the information contained therein was not an original work, created by a human being, and therefore couldn't be copyrighted. It will be interesting to see if there are any challenges made to users trying to license or sell a DALL-E work for this very reason.

Rob Holmes, a private investigator who works on copyright and trademark infringement with many major tech companies and fashion brands, believes that there is a reticence across the industry to pursue a landmark case that would settle the issue around TDM and copyright. "Legal departments get very little money," he said. "All these different brands, and everyone's waiting for the other brand, or IP owner, to begin the lawsuit. And when they do, it's because some senior VP or somebody at the top decided to spend the money, and once that happens, there's a good year of planning the litigation." That often gives smaller companies plenty of time to either get their house in order, get big enough to be worth a lawsuit or go out of business.

"Setting a precedent as a sole company costs a lot of money," Holmes said, but brands will move fast if there's an immediate risk to profitability. Designer brand Hermès, for instance, is suing an artist named Mason Rothschild, who is producing MetaBirkins NFTs. These are stylized images of a design reminiscent of Hermès' famous Birkin handbag, something the French fashion house says is nothing more than an old-fashioned rip-off. This, too, is likely to have ramifications for the industry as it wrestles with philosophical questions of what work is sufficiently transformational as to prevent an accusation of piracy.

Artists are also able to upload their own work to DALL-E and then generate recreations in their own style. I spoke to one artist, who asked not to be named or otherwise described for fear of being identified and suffering reprisals. They showed me examples of their work alongside recreations made by DALL-E, which, while crude, were still close enough to look like the real thing. They said that, on this evidence alone, their livelihood as a working artist is at risk, and that the creative industries writ large are "doomed."

Article Forge CEO Alex Cardinell says that this situation, again, has historical precedent with the industrial revolution. He says that, unlike then, society has a collective responsibility to "make sure that anyone who is displaced is adequately supported." He added that anyone in the AI space should be backing a "robust safety net," including "universal basic income and free access to education," which he says is the "bare minimum" a society in the midst of such a revolution should offer.

Trained on your data

AIs are already in use. Microsoft, for instance, partnered with OpenAI to harness GPT-3 as a way to build code. In 2021, the company announced that it would integrate the system into its low-code app-development platform to help people build apps and tools for Microsoft products. Duolingo uses the system to improve people's French grammar, while apps like Flowrite employ it to help make writing blog posts and emails easier and faster. Midjourney, a DALL-E 2-esque GAI for art, which has recently opened up its beta, is capable of producing stunning illustrated art – with customers charged between $10 and $50 a month if they wish to produce more images or use those pictures commercially.

For now, that's something Craiyon doesn't necessarily need to worry about, since the resolution is presently so low. "People ask me 'why is the model bad on faces', not realizing that the model is equally good – or bad – at everything," Dayma said. "It's just that, you know, when you draw a tree, if the leaves are messed up you don't care, but when the faces or eyes are, we put more attention on it." This will, however, take time both to improve the model, and to improve the accessibility of computing power capable of producing the work. Dayma believes that despite any notion of low quality, any GAI will need to be respectful of "the applicable laws," and that it shouldn't be used for "harmful purposes."

And artificial intelligence isn't simply a toy, or an interesting research project, but something that has already caused plenty of harm. Take Clearview AI, a company that scraped several billion images, including from social media platforms, to build what it claims is a comprehensive image recognition database. According to The New York Times, this technology was used by billionaire John Catsimatidis to identify his daughter's boyfriend. BuzzFeed News reported that Clearview has offered access not just to law enforcement – its supposed corporate goal – but to a number of figures associated with the far right. The system has also proved less than reliable, with The Times reporting that it has led to a number of wrongful arrests.

Naturally, the ability to synthesize any image without the need for a lot of photoshopping should raise alarm. Deepfake technology, which uses AI to replace someone's face in a video, has already been used to produce adult content featuring celebrities. As quickly as companies making AIs can put in guardrails to prevent adult-content prompts, it's likely that loopholes will be found. And as open-source research and development becomes more prevalent, it's likely that other platforms will be created with less scrupulous aims. Not to mention the risk of this technology being used for political ends, given the ease of creating fake imagery that could be used for propaganda purposes.

Of course, Duchamp and Warhol may have stretched the definitions of what art can be, but they did not destroy art in and of itself. It would be a mistake to suggest that automating image generation will inevitably lead to the collapse of civilization. But it's worth being cautious about the effects on artists, who may find themselves without a living if it's easier to commission a GAI to produce something for you. Not to mention the implication for what, and how, these systems are creating material for sale on the backs of our data. Perhaps it is time that we examined whether it's necessary to implement a way of protecting our material – something equivalent to Do Not Track – to prevent it being chewed up and crunched through the AI sausage machine.