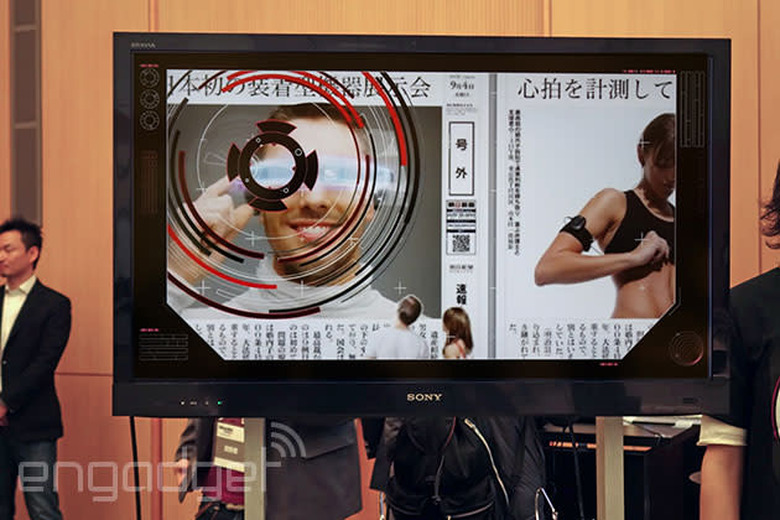

Augmented reality concept uses Google Glass to make reading the newspaper more like... reading a website

As part of the Wearable Tech Expo 2014 in Tokyo, the Asahi Shimbun is looking to offer richer content to readers still picking up its dead-tree editions. The 'AIR' concept uses wearable du jour Google Glass both to detect physical markers and to display any digital companion content. According to Asahi's Media Lab, the concept's aim is to better broadcast and convey "emotional" content: a picture of Winter Olympics figure skater Asada Mao gets picked up, and Google Glass barrels into a slideshow, complete with a stirring soundtrack. (She had announced her retirement, and apparently her many fans were very upset.)

It's about connecting anyone who reads the (actual, physical) newspaper to everything that doesn't make it onto the page, whether that's more photos, related articles or video. There's no release date for AIR (for a start, Google Glass isn't even available in Japan), and to a large degree it mimics what we've already seen from augmented reality apps on smartphones. However, this wearable-based delivery method makes more sense, since there's no need to hover your smartphone camera over points of interest. The team didn't mention who powers the detection software, although the recent news that Layar has brought its APK to Google's wearable could well be connected.

The software can also recognize physical objects and direct users to related video and site content, although it can be a little temperamental. Our model had a thing for the spring rolls positioned at the demo booth, even when we were looking elsewhere. Hey, we all like spring rolls.