Google wants to speed up image recognition in mobile apps

MobileNets can spot castles or dog breeds without using the cloud.

Cloud-based AI is so last year, because now the major push from companies like chip designer ARM, Facebook and Apple is to fit deep learning onto your smartphone. Google wants to bring deep learning to more developers, so it has unveiled MobileNets, a family of mobile AI vision models. The idea is to offer pre-trained, low-power image recognition so that developers can add imaging features without relying on slow, data-hungry and potentially intrusive cloud computing.

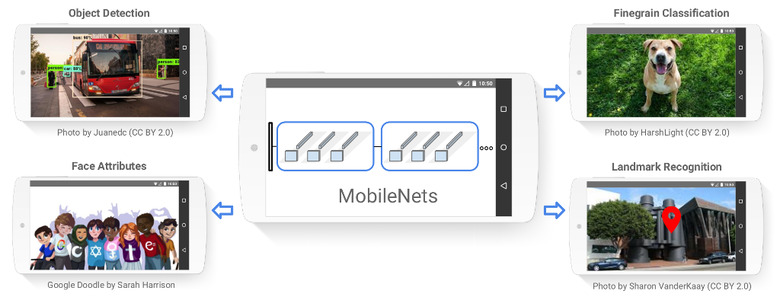

Google has open-sourced the models so any developer can adopt them. They can handle chores like object detection, face attribute recognition, fine-grained classification (recognizing a dog breed, for instance) and landmark recognition. The tech is part of TensorFlow, Google's open-source deep learning framework, which was recently shrunk down to mobile size in a new version called TensorFlow Lite.

MobileNets isn't one-size-fits-all: Google has actually built 16 pre-trained models "for use in mobile projects of all sizes." The larger the model, the better it is at recognizing landmarks, faces or doggos. The largest and most compute-intensive one scores 70.7 percent top-1 accuracy and 89.5 percent top-5 accuracy on the ImageNet benchmark. That isn't far from Google's cloud-based AI, which can recognize and caption objects with around 94 percent accuracy, last we checked.
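Image classifiers are usually scored two ways: top-1 accuracy (the model's single best guess is correct) and top-5 accuracy (the correct label is somewhere among its five best guesses). That's why one model can post two quite different numbers. The short Python sketch below shows how a top-k score is computed; the scores and labels are made-up toy values, not MobileNets output.

```python
from typing import Sequence


def top_k_accuracy(scores: Sequence[Sequence[float]], labels: Sequence[int], k: int) -> float:
    """Fraction of examples whose true label is among the k highest-scoring classes."""
    if not labels:
        raise ValueError("need at least one example")
    hits = 0
    for row, label in zip(scores, labels):
        # Class indices sorted by score, highest first; keep the best k.
        best_k = sorted(range(len(row)), key=lambda i: row[i], reverse=True)[:k]
        if label in best_k:
            hits += 1
    return hits / len(labels)


# Toy example: 2 images, 3 possible classes (numbers are illustrative only).
scores = [[0.1, 0.7, 0.2], [0.5, 0.2, 0.3]]
labels = [1, 2]
print(top_k_accuracy(scores, labels, 1))  # 0.5: only the first image's top guess is right
print(top_k_accuracy(scores, labels, 2))  # 1.0: both true labels are in the top two
```

Because top-5 is a more forgiving test, it will always be at least as high as top-1 for the same model and data.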

With different pre-trained models at their disposal, developers can pick one that best suits the memory and processing requirements for an app. To integrate the new models, developers need to use TensorFlow Mobile, a system designed to ease deployment of AI apps on iOS and Android.
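Picking "the one that best suits" an app boils down to a simple rule: take the most accurate model that still fits the device's memory and compute budget. Here's a minimal Python sketch of that rule. The `ModelVariant` type, the `pick_model` helper and the catalog entries are all hypothetical placeholders made up for illustration; they aren't part of TensorFlow and aren't Google's published figures.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class ModelVariant:
    name: str
    params_millions: float     # rough proxy for memory footprint
    mult_adds_millions: float  # rough proxy for processing cost per image
    top1_accuracy: float


def pick_model(
    variants: Sequence[ModelVariant],
    max_params_millions: float,
    max_mult_adds_millions: float,
) -> Optional[ModelVariant]:
    """Return the most accurate variant within both budgets, or None if none fits."""
    fitting = [
        v
        for v in variants
        if v.params_millions <= max_params_millions
        and v.mult_adds_millions <= max_mult_adds_millions
    ]
    return max(fitting, key=lambda v: v.top1_accuracy, default=None)


# Hypothetical catalog: names and numbers are placeholders, not real MobileNets data.
CATALOG = [
    ModelVariant("small", 0.5, 15.0, 0.40),
    ModelVariant("medium", 1.5, 100.0, 0.55),
    ModelVariant("large", 4.0, 550.0, 0.70),
]

choice = pick_model(CATALOG, max_params_millions=2.0, max_mult_adds_millions=200.0)
print(choice.name if choice else "no model fits")  # prints "medium"
```

A budget-phone app might pass tight limits and get the small model, while a flagship-only app could afford the large one.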

From a consumer standpoint, you'll likely start to see apps that can do basic image identification and other useful functions, with more speed, less data use and better privacy. An example of that could be Google's new Lens product, which can pick out landmarks, products and faces using a combination of smartphone and cloud processing. The tech probably won't hit its stride, though, until we see new chips that support it — and both Google and Apple are already working on that.