AI pioneer Geoffrey Hinton isn't convinced good AI will triumph over bad AI

The 'Godfather of AI' doesn't share the industry's optimism.

University of Toronto professor Geoffrey Hinton, often called the "Godfather of AI" for his pioneering research on neural networks, recently became the industry's unofficial watchdog. He quit working at Google this spring to more freely critique the field he helped build. He saw the recent surge in generative AI tools like ChatGPT and Bing Chat as a sign of unchecked and potentially dangerous acceleration in development. Google, meanwhile, was seemingly giving up its previous restraint as it chased competitors with products like its Bard chatbot.

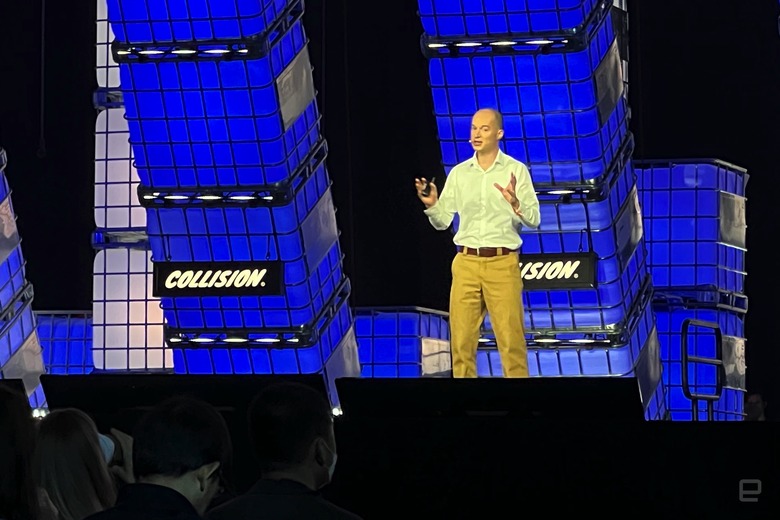

At this week's Collision conference in Toronto, Hinton expanded his concerns. While companies were touting AI as the solution to everything from clinching a lease to shipping goods, Hinton was sounding the alarm. He isn't convinced good AI will emerge victorious over the bad variety, and he believes ethical adoption of AI may come at a steep cost.

A threat to humanity

University of Toronto professor Geoffrey Hinton (left) speaking at Collision 2023.

Hinton contended that AI was only as good as the people who made it, and that bad tech could still win out. "I'm not convinced that a good AI that is trying to stop bad AI can get control," he explained. It might be difficult to stop the military-industrial complex from producing battle robots, for instance, he said. Companies and armies might "love" wars where the casualties are machines that can easily be replaced. And while Hinton believes that large language models (trained AI that produces human-like text, like OpenAI's GPT-4) could lead to huge increases in productivity, he is concerned that the ruling class might simply exploit this to enrich themselves, widening an already large wealth gap. It would "make the rich richer and the poor poorer," Hinton said.

Hinton also reiterated his much-publicized view that AI could pose an existential risk to humanity. If artificial intelligence becomes smarter than humans, there is no guarantee that people will remain in charge. "We're in trouble" if AI decides that taking control is necessary to achieve its goals, Hinton said. To him, the threats are "not just science fiction;" they have to be taken seriously. He worries that society would only rein in killer robots after it had a chance to see "just how awful" they were.

There are plenty of existing problems, Hinton added. He argues that bias and discrimination remain issues, as skewed AI training data can produce unfair results. Algorithms likewise create echo chambers that reinforce misinformation and mental health issues. Hinton also worries about AI spreading misinformation beyond those chambers. He isn't sure if it's possible to catch every bogus claim, even though it's "important to mark everything fake as fake."

This isn't to say that Hinton despairs over AI's impact, although he warns that healthy uses of the technology might come at a high price. Humans might have to conduct "empirical work" to understand how AI could go wrong and to prevent it from wresting control. It's already "doable" to correct biases, he added. A large language model AI might put an end to echo chambers, but Hinton sees changes in company policies as being particularly important.

The professor didn't mince words in his answers to questions about people losing their jobs through automation. He feels that "socialism" is needed to address inequality, and that people could hedge against joblessness by taking up careers that could change with the times, like plumbing (and no, he isn't kidding). Effectively, society might have to make broad changes to adapt to AI.

The industry remains optimistic

Google DeepMind CBO Colin Murdoch at Collision 2023.

Earlier talks at Collision were more hopeful. Google DeepMind business chief Colin Murdoch said in a different discussion that AI was solving some of the world's toughest challenges. There's not much dispute on this front: DeepMind is cataloging every known protein, fighting antibiotic-resistant bacteria and even accelerating work on malaria vaccines. Murdoch envisioned "artificial general intelligence" that could solve multiple problems, and pointed to Google's products as an example. Lookout is useful for describing photos, but the underlying tech also makes YouTube Shorts searchable. He went so far as to call the past six to 12 months a "lightbulb moment" for AI that unlocked its potential.

Roblox Chief Scientist Morgan McGuire largely agrees. He believes the game platform's generative AI tools "closed the gap" between new creators and veterans, making it easier to write code and create in-game materials. Roblox is even releasing an open-source AI model, StarCoder, that it hopes will aid others by making large language models more accessible. While McGuire acknowledged challenges in scaling and moderating content during his discussion, he believes the metaverse holds "unlimited" possibilities thanks to its creative pool.

Both Murdoch and McGuire expressed some of the same concerns as Hinton, but their tone was decidedly less alarmist. Murdoch stressed that DeepMind wanted "safe, ethical and inclusive" AI, and pointed to expert consultations and educational investments as evidence. The executive insists he is open to regulation, but only as long as it allows "amazing breakthroughs." In turn, McGuire said Roblox always launched generative AI tools with content moderation, relied on diverse data sets and practiced transparency.

Some hope for the future

Roblox Chief Scientist Morgan McGuire talks at Collision 2023.

Despite the headlines summarizing his recent comments, Hinton's overall enthusiasm for AI hasn't been dampened after leaving Google. If he hadn't quit, he was certain he would be working on multi-modal AI models where vision, language and other cues help inform decisions. "Small children don't just learn from language alone," he said, suggesting that machines could do the same. As worried as he is about the dangers of AI, he believes it could ultimately do anything a human could and was already demonstrating "little bits of reasoning." GPT-4 can adapt itself to solve more difficult puzzles, for instance.

Hinton acknowledges that his Collision talk didn't say much about the good uses of AI, such as fighting climate change. The advancement of AI technology was likely healthy, even if it was still important to worry about the implications. And Hinton freely admitted that his enthusiasm hasn't dampened despite looming ethical and moral problems. "I love this stuff," he said. "How can you not love making intelligent things?"