The law that predicts computing power turns 50

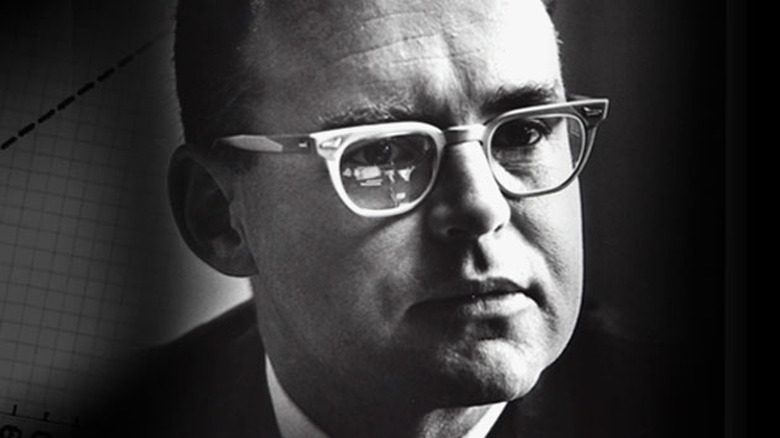

Today represents a historic milestone in technology: It's the 50th anniversary of Moore's Law, the observation that the complexity of computer chips tends to double at a regular rate. On April 19th, 1965, Fairchild Semiconductor's Gordon Moore (who later co-founded Intel) published an article in Electronics magazine. In it, he noted that the number of components on integrated circuits had doubled every year up to that point, and he predicted that it would keep doubling at that pace for at least another decade. He revised that estimate to every two years in 1975, but the idea of an unofficial law of progress stuck. The law not only foresaw the rapid expansion of computing power but also served as a target for the industry; in effect, it became a self-fulfilling prophecy.

The revised law has largely held up over the past five decades, but it might not last much longer. With chips now built on a 14-nanometer process (such as Intel's Broadwell processors), the industry is starting to hit physical limits. Components are now so small and so densely packed that removing heat has become a serious problem. While Moore's Law may survive for another couple of processor generations, chip designers may eventually need new materials or exotic techniques (like quantum tunneling) for the rule to hold true. Semiconductor companies have overcome seemingly impassable barriers before, though, so Moore may be vindicated for a while yet.