Facebook's tool for blind users can describe News Feed photos

It's only available on iOS, at least for now.

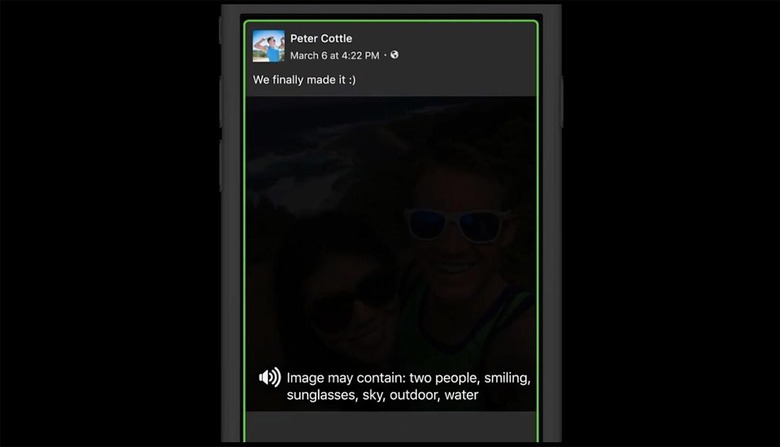

Facebook has launched a new tool for iOS that can help blind, English-speaking users make sense of all the photos people post on the social network. It's called automatic alternative text, and it can give a basic description of a photo's contents to anyone using a screen reader. In the past, screen readers could only tell visually impaired users that there was a photo in the status update they were viewing. With this new tool in place, they can rattle off the elements that the company's object recognition technology detects in the images. For instance, a screen reader can now tell users that they're looking at a friend's photo that "may contain: tree, sky, sea."

The engineers at 1 Hacker Way trained their object recognition tech by feeding it millions of example images. This kind of machine learning relies on neural networks, the same approach Google used to teach AlphaGo the ancient game of Go and one of our editors used to train his computer to write Engadget articles. The team also made sure that alt text describes photos in a specific order: first the number of people, then objects, and finally the scene in the background.

People post so many images on Facebook these days that browsing the News Feed has become a very visual experience. Someone who can't see those images could feel excluded, but this technology could help "the blind community experience Facebook the same way others enjoy it." While the tool is only available on iOS and only in English right now, the social network plans to release it on other platforms and in other languages in the future.