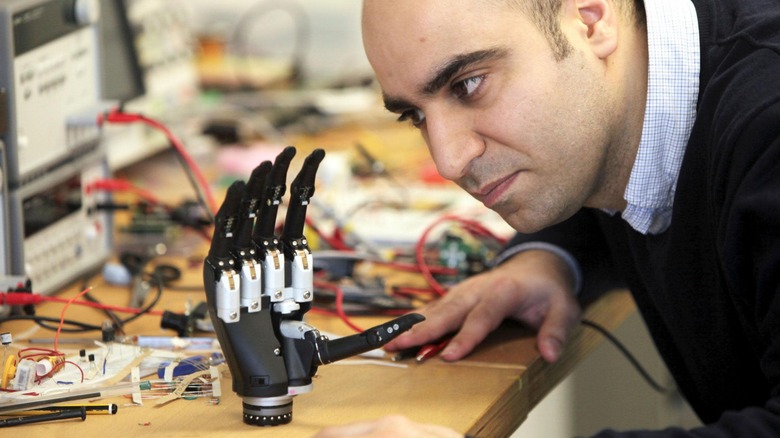

'Intuitive' prosthetic hand sees what it's touching

It reacts in milliseconds with one of four programmed grasps.

Even as we begin to wire prosthetics directly into our peripheral nervous systems and wield Deus Ex appendages with only the power of our minds, many conventional prosthetic arms are still pretty clunky. Their grips are activated through myoelectric signals, the electrical activity read from the surface of the stump. The "Intuitive" hand, developed by Dr. Kianoush Nazarpour, a senior lecturer in biomedical engineering at Newcastle University, offers a third approach. It uses a camera and computer vision to recognize objects within reach and adjust its grasp accordingly.

"Responsiveness has been one of the main barriers to artificial limbs," Dr. Nazarpour wrote in the study, which was published in the Journal of Neural Engineering. Even cutting-edge prosthetics like Dean Kamen's Luke arm move at a glacial pace compared to their biological counterparts. But by integrating computer vision and external processing, the Intuitive hand can respond within milliseconds. "The user can reach out and pick up a cup or a biscuit with nothing more than a quick glance in the right direction," Dr. Nazarpour continued.

The team also used a neural network to train the hand to recognize what's in front of it and how it should change its grasp. Interestingly, the team didn't train the system on specific objects. "The computer isn't just matching an image, it's learning to recognise objects and group them according to the grasp type the hand has to perform to successfully pick it up," said Ghazal Ghazaei, a PhD candidate at Newcastle who helped develop the hand's AI. "It is this which enables it to accurately assess and pick up an object which it has never seen before – a huge step forward in the development of bionic limbs." In the end, the hand settled on four distinct grips: palm wrist neutral (holding a beer), palm wrist pronated (holding a remote), tripod (holding a bowling ball) and pinch (you know what pinching is).
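
To see why labelling by grasp rather than by object lets the hand cope with things it has never seen, here is a rough sketch in Python. It is not the Newcastle team's code: the study used a neural network on camera images, while this example swaps in a much simpler nearest-centroid classifier over two made-up size features. The feature values, the `TRAINING` table and the function names are all assumptions for illustration only.

```python
from collections import defaultdict
from math import dist

# Hypothetical training data: (width_cm, height_cm) -> grasp type.
# The labels are grasps, not object names, so very different objects
# can share a label if they are picked up the same way.
TRAINING = [
    ((7.0, 12.0), "palm wrist neutral"),   # e.g. a can
    ((8.0, 15.0), "palm wrist neutral"),   # e.g. a mug
    ((7.0, 20.0), "palm wrist neutral"),   # e.g. a bottle
    ((18.0, 2.0), "palm wrist pronated"),  # e.g. a remote
    ((15.0, 3.0), "palm wrist pronated"),  # e.g. a phone
    ((20.0, 2.5), "palm wrist pronated"),  # e.g. a thin book
    ((4.0, 4.0), "tripod"),                # e.g. a small ball
    ((5.0, 5.0), "tripod"),                # e.g. a plum
    ((3.5, 3.5), "tripod"),                # e.g. a bottle cap
    ((1.0, 0.5), "pinch"),                 # e.g. a coin
    ((1.5, 1.0), "pinch"),                 # e.g. a key
    ((0.8, 0.8), "pinch"),                 # e.g. a bead
]


def fit_centroids(examples):
    """Average the feature vectors of each grasp type."""
    grouped = defaultdict(list)
    for features, grasp in examples:
        grouped[grasp].append(features)
    return {
        grasp: tuple(sum(axis) / len(rows) for axis in zip(*rows))
        for grasp, rows in grouped.items()
    }


def predict_grasp(centroids, features):
    """Return the grasp whose centroid is closest to the new object."""
    return min(centroids, key=lambda grasp: dist(centroids[grasp], features))


centroids = fit_centroids(TRAINING)

# An object that is not in the training data still maps to a grasp.
print(predict_grasp(centroids, (7.5, 17.0)))  # palm wrist neutral
print(predict_grasp(centroids, (1.2, 0.6)))   # pinch
```

Because the output is one of four grasps rather than an object name, the classifier never needs to know what the new object actually is.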

But this system is only a stopgap solution. From here, the research team hopes to further develop the hand and integrate it directly into the nervous system, potentially via nerve endings in the arm. With that capability, the hand would be able to sense pressure and temperature and transmit that data directly into our brains.