Google Lens is a powerful, AI-driven visual search app

The new algorithm knows what's in your photos.

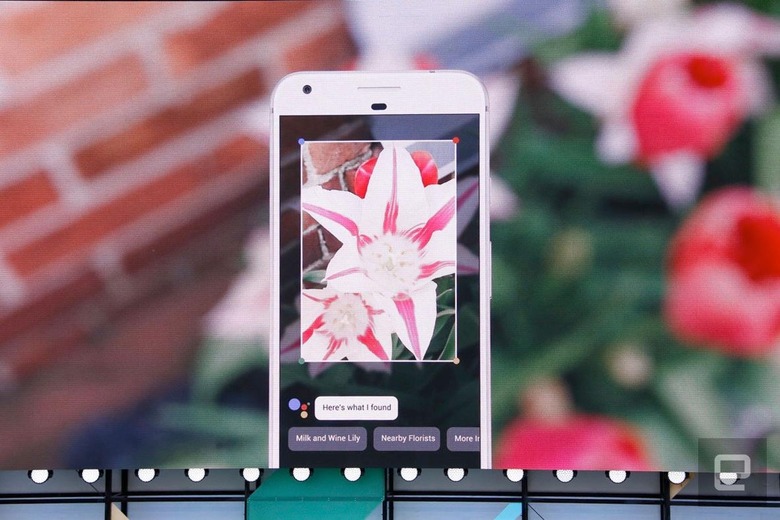

Google Lens is a set of vision-based computing capabilities that allows your smartphone to understand what's going on in a photo, video or live feed. For instance, point your phone at a flower and Google Lens will show you on screen which type of flower it is, or aim the camera at a restaurant sign to see reviews and other information pop up. The new AI system is heading to Google Photos and Assistant first.

With Google Lens, your smartphone camera won't just see what you see, but will also understand what you see to help you take action. #io17 pic.twitter.com/viOmWFjqk1

— Google (@Google) May 17, 2017

Google Lens can also read the settings sticker on a router and automatically connect your phone to that network. In conjunction with Assistant, it lets users point their phone at a sign for a concert and automatically add that event to their calendars, or even purchase tickets right then and there. For your existing pics in Google Photos, activate Lens and it will offer details about what's actually in an image.

Google Lens is part of a larger focus on AI at the company.

"When we started working on search, we wanted to do it at scale," CEO Sundar Pichai said at Google's I/O developer conference today. "That's why we designed our data centers from the ground up and put a lot of effort into them. Now that we're evolving for this machine-learning and AI world, we're building what we think of as AI-first data centers."

With a foundation in Photos and Assistant, Google Lens allows users to more naturally interact with visual and audio cues on their phones. It sounds a lot like Bixby, the virtual assistant for the Galaxy S8 and S8+, though Google Lens appears to be far more advanced than Samsung's system.

Of course, Google has been building up the tech behind Lens for years. Google Goggles, a visual search app, launched in late 2009, and Lens is basically a grown-up version of that program. Lens is due to launch later this year.
