NTSB's preliminary report on Uber crash focuses on emergency braking

These collision avoidance systems are disengaged when the computer is in control.

Today, the NTSB released preliminary findings on the March crash in which a self-driving Uber vehicle struck and killed a pedestrian. "At 1.3 seconds before impact, the self-driving system determined that emergency braking was needed to mitigate a collision," the release says. "According to Uber, emergency braking maneuvers are not enabled while the vehicle is under computer control, to reduce the potential for erratic vehicle behavior. The vehicle operator is relied on to intervene and take action. The system is not designed to alert the operator."

"Over the course of the last two months, we've worked closely with the NTSB," an Uber spokesperson told Engadget. "As their investigation continues, we've initiated our own safety review of our self-driving vehicles program. We've also brought on former NTSB Chair Christopher Hart to advise us on our overall safety culture, and we look forward to sharing more on the changes we'll make in the coming weeks."

It appears that the "emergency braking maneuvers" mentioned in the release are part of the onboard safety systems that Volvo puts into every XC90 vehicle. The Volvo was equipped with collision avoidance features, including automatic emergency braking. However, these systems are disabled when Uber's self-driving system is in control of the car.

That makes sense: if the safety features were engaged, two separate systems would be competing for control of the Volvo. However, it's surprising that Uber's self-driving system doesn't have its own emergency braking feature. According to the report, the computer recognized that emergency braking was necessary, but couldn't engage the system because it was disabled.

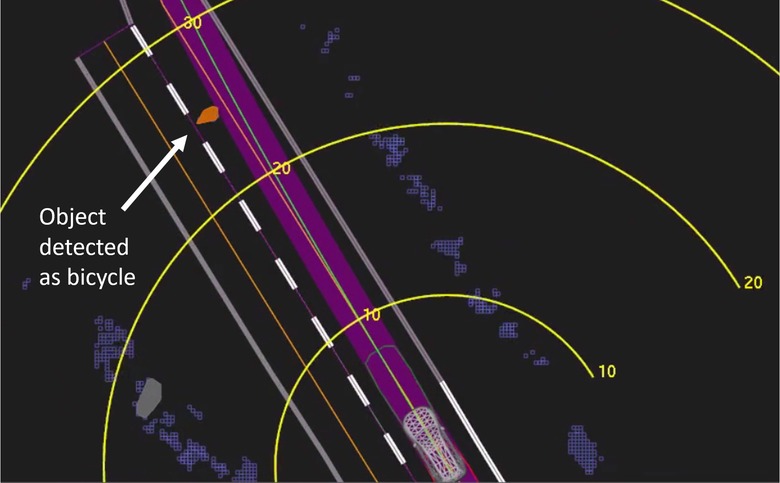

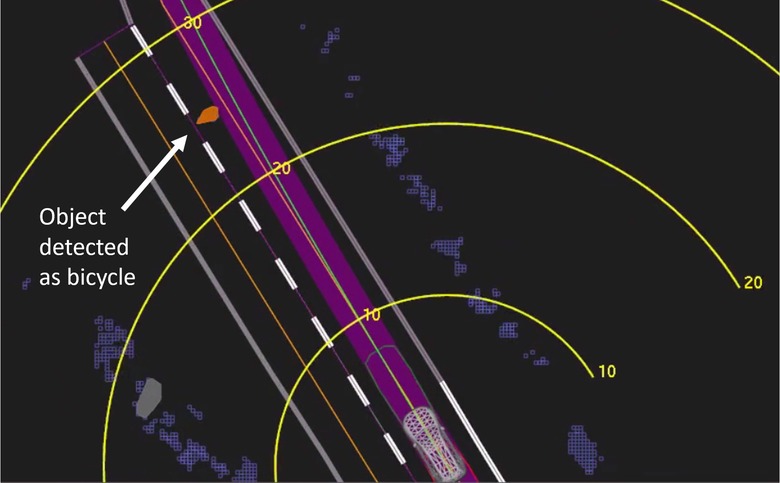

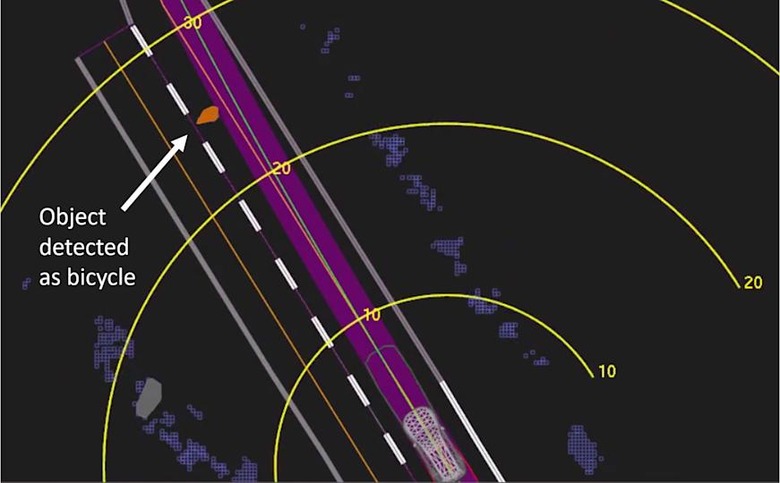

The driver was also not alerted that the self-driving system had detected an object. There wasn't much to be done by the time the system determined emergency braking was necessary, just 1.3 seconds before the collision. Human reaction time isn't that swift. But the pedestrian was detected a full six seconds before the crash, yet no alert was provided to the driver. The pedestrian was first classified as an "unknown object," which may have exacerbated the situation, as the software is designed to ignore objects in the road that the system determines aren't threats.

The driver is another part of this equation. "The inward-facing video shows the vehicle operator glancing down toward the center of the vehicle several times before the crash," the report says. However, the driver maintains she was looking at and monitoring the self-driving interface and was not checking her phone. Uber's computer-controlled systems depend on the driver being alert and monitoring the situation at all times to avoid collisions.

The report also discusses the pedestrian's actions. According to the report, the pedestrian was wearing dark, non-reflective clothing (the crash occurred at night) and did not look in the direction of the approaching vehicle. As was already known, the pedestrian was not crossing at a crosswalk, and the area was not well lit. "The report also notes the pedestrian's post-accident toxicology test results were positive for methamphetamine and marijuana," the release says.