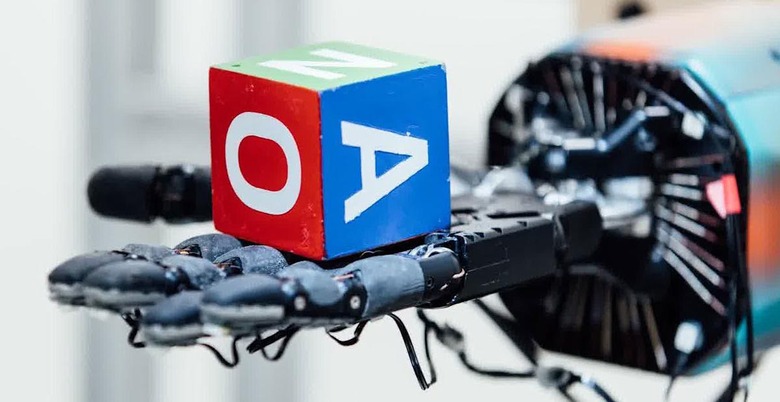

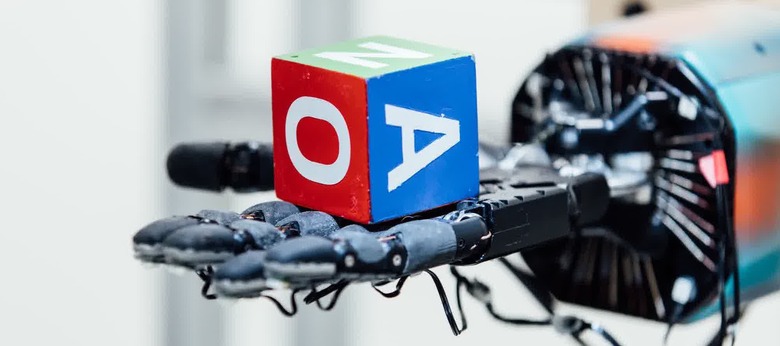

OpenAI's Dactyl system improves the dexterity of robot hands

The team trained it using only abstracted simulations.

It's still early days in creating the kind of human-like androids we see in the movies, but new research brings us ever closer to the idea. Boston Dynamics has become the de facto image of robot locomotion, with machines modeled on both humans and their pets, while LG already has its CLOi porter 'bots and DARPA is working on centaur-like designs for disaster relief. Now, researchers at OpenAI, which Elon Musk co-founded, are working on making robot hands more dextrous.

According to a blog post, the team has trained a human-like robot hand, the Shadow Dexterous Hand, to manipulate real-world objects like a child's block. Three cameras watch the robot hand while a computer tracks the position of the fingertips in real time. The system uses the same algorithms and code as the OpenAI Five project, which has been training bots to play Dota 2. The resulting hand-centric system is called Dactyl, and it learned to manipulate the block using a training technique called domain randomization. This approach exposes the system to many varied simulated experiences rather than having it repeat the same task in the real world, letting the team scale up faster than other training methods.
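To make the idea of domain randomization more concrete, here is a minimal Python sketch. It only illustrates the general principle of giving every simulated training episode slightly different physical and visual conditions, so that the real world looks like just one more variation. The parameter names, the ranges, and the helper functions are all hypothetical and chosen for illustration; they are not taken from OpenAI's code.

```python
import random
from dataclasses import dataclass


@dataclass
class SimParams:
    """One randomized simulation setup (all fields are illustrative assumptions)."""
    friction: float
    block_mass_kg: float
    block_size_m: float
    light_intensity: float


# Hypothetical ranges, not values reported by OpenAI.
RANGES = {
    "friction": (0.5, 1.5),
    "block_mass_kg": (0.03, 0.3),
    "block_size_m": (0.045, 0.055),
    "light_intensity": (0.3, 1.0),
}


def sample_params(rng: random.Random) -> SimParams:
    """Draw each parameter uniformly from its range."""
    return SimParams(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})


def randomized_episodes(n: int, seed: int = 0) -> list:
    """Return n independently randomized setups, one per training episode."""
    rng = random.Random(seed)
    return [sample_params(rng) for _ in range(n)]


if __name__ == "__main__":
    for params in randomized_episodes(3):
        print(params)
```

In a real training loop, each sampled setup would configure the simulator before an episode starts, so the policy never sees exactly the same world twice.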

Further, the researchers noted that when they deployed their system on a real-world robot hand, Dactyl used a human-like set of strategies to achieve the desired result, such as moving a specific block face to the top. These strategies weren't taught to Dactyl, but rather emerged as behaviors from the training. In one case, Dactyl chose a grasp that favors the thumb and little finger (possibly because its pinky is more flexible), while humans tend to prefer using their thumb and index or middle finger. This suggests that robot hands, and by extension robots in general, can discover human behaviors and adapt them to their own specific body types.