Facebook is fact-checking photos and videos to fight fake news

It's the latest effort to stop misinformation from spreading on its site.

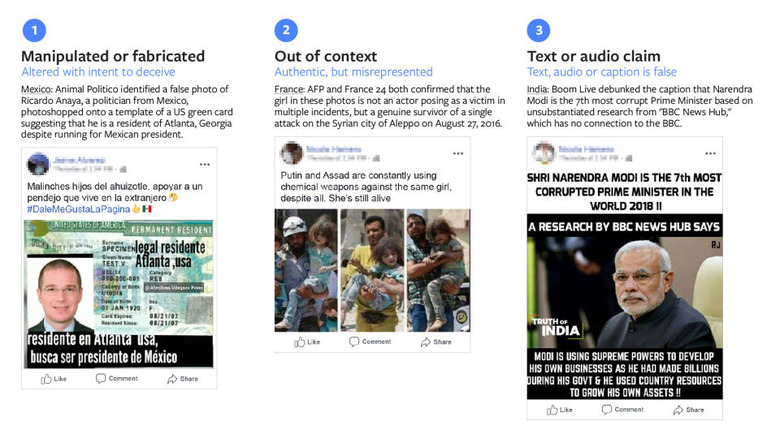

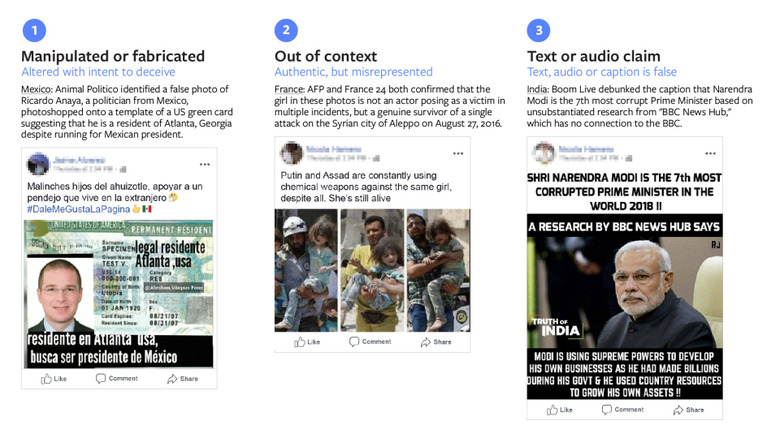

It's no secret that Facebook has been struggling to stop fake news from spreading on its site, though it has made progress since the 2016 US presidential election. Now, as part of its ongoing efforts to fight misinformation, Facebook has announced that its 27 fact-checking partners around the world have access to a new tool that analyzes photos and videos. According to Facebook, the feature is powered by machine learning and is designed to help reviewers identify and act on false content faster.

Facebook says the technology proactively tracks down posts containing media that may be false, using a combination of engagement signals such as feedback from users themselves. That's similar to the work it already does with article links. Once the system flags an image or video it suspects has been altered, third-party fact-checkers try to verify whether the content is real. For example, during Mexico's 2018 presidential election, a photoshopped image of candidate Ricardo Anaya circulated that suggested he held a US green card. Not surprisingly, the picture turned out to be fabricated, and that's the type of content Facebook says this new tool can help contain.

It's going to be challenging, without a doubt, but at least the company is taking steps in the right direction.