Microsoft and MIT can detect AI 'blind spots' in self-driving cars

Your autonomous car might avoid dangerous mistakes.

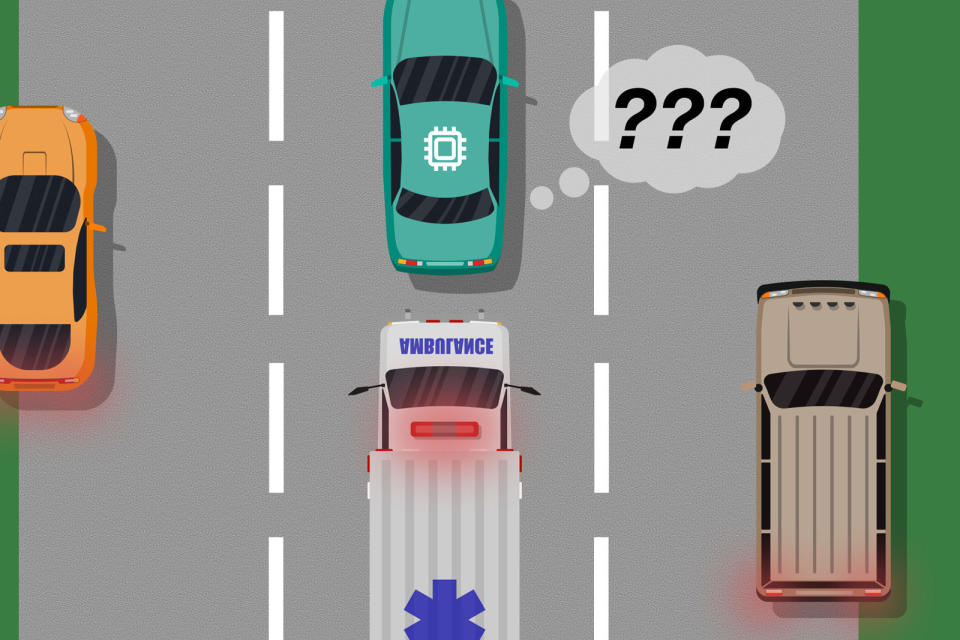

Self-driving cars are still prone to making mistakes, in part because AI training can only account for so many situations. Microsoft and MIT might just fill in those gaps in knowledge -- they've developed a model that can catch these virtual "blind spots," as MIT describes them. The approach has the AI compare a human's actions in a given situation to what it would have done, then alter its behavior based on how closely the two match. If an autonomous car doesn't know how to pull over when an ambulance is racing down the road, it could learn by watching a flesh-and-blood driver move to the side of the road.

The model would also work with real-time corrections. If the AI stepped out of line, a human driver could take over and indicate that something went wrong.
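As a rough illustration of the two feedback sources described above (watching a human drive, and a human taking over), the sketch below records whether the AI's action was acceptable in each situation. The names `Feedback`, `label_from_demonstration`, and `label_from_correction`, along with the string-valued situations and actions, are hypothetical and are not taken from the researchers' work.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feedback:
    situation: str    # hypothetical coarse description of what the car perceives
    acceptable: bool  # True if the AI's action was judged acceptable


def label_from_demonstration(situation, ai_action, human_action):
    """Demonstration: the human drives, and the AI checks what it would have done."""
    return Feedback(situation, acceptable=(ai_action == human_action))


def label_from_correction(situation, human_took_over):
    """Correction: the AI drives, and a human takeover signals a mistake."""
    return Feedback(situation, acceptable=not human_took_over)


# Assumed example data: the AI would have stayed in its lane for an ambulance.
log = [
    label_from_demonstration("ambulance_behind", "keep_lane", "pull_over"),
    label_from_demonstration("clear_road", "keep_lane", "keep_lane"),
    label_from_correction("clear_road", human_took_over=False),
]
```

In this toy log, only the ambulance situation is marked unacceptable, which makes it a candidate blind spot.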

Researchers even have a way to prevent the driverless vehicle from becoming overconfident and marking all instances of a given response as safe. A machine learning algorithm not only identifies acceptable and unacceptable responses, but also uses probability calculations to spot patterns and determine whether something is truly safe or still leaves the potential for problems. Even if an action is right 90 percent of the time, the system might still see a weakness that it needs to address.
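To make the overconfidence point concrete, here is a minimal sketch that contrasts a simple majority vote with a probability estimate. It uses a Beta-Bernoulli posterior mean as a stand-in; the article does not say which probability model the researchers use, so the function names, the uniform prior, and the 5 percent `tolerance` are all assumptions chosen for illustration.

```python
def majority_safe(acceptable, unacceptable):
    """Naive rule: call the situation safe if most labels were acceptable."""
    return acceptable > unacceptable


def blind_spot_probability(acceptable, unacceptable, prior_ok=1.0, prior_bad=1.0):
    """Posterior mean chance that the action is unacceptable (Beta-Bernoulli).

    The prior counts are an assumption; 1.0 and 1.0 give a uniform prior.
    """
    total = acceptable + unacceptable + prior_ok + prior_bad
    return (unacceptable + prior_bad) / total


def is_blind_spot(acceptable, unacceptable, tolerance=0.05):
    """Flag the situation if the estimated failure chance exceeds the tolerance."""
    return blind_spot_probability(acceptable, unacceptable) > tolerance


# An action judged right 9 times out of 10 passes the majority vote...
vote = majority_safe(9, 1)
# ...but is still flagged, because the estimated failure chance is too high.
flagged = is_blind_spot(9, 1)
# With many clean observations, the same rule stops flagging the situation.
cleared = not is_blind_spot(200, 0)
```

With 9 acceptable labels and 1 unacceptable one, the majority vote says "safe" while the estimated failure chance is roughly 17 percent, well above the assumed tolerance, so the situation stays marked as a potential blind spot.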

This technology isn't ready for the field yet. Scientists have tested their model only with video games, where there are limited parameters and relatively ideal conditions. Microsoft and MIT still need to test with real cars. If this works, though, it could go a long way toward making self-driving cars practical. Early vehicles still have problems dealing with things as simple as snow, let alone fast-paced traffic where a mistake can lead to a crash. This could help them take on tricky situations without requiring carefully crafted custom solutions or putting passengers at risk.