Steam mods will filter 'off-topic review bombs' from ratings

But the reviews will stay up on its site.

One of the issues with relying on user reviews to rate content is the possibility that some of those reviews may not be written in entirely good faith. Recently Rotten Tomatoes took new steps to manage the impact of fake reviews submitted for Captain Marvel, while Netflix responded to several instances of "review bombing" by removing written reviews from its service entirely. Over the years Steam has taken a few different steps to deal with the issue, and its latest response combines automated scanning with human moderation teams.

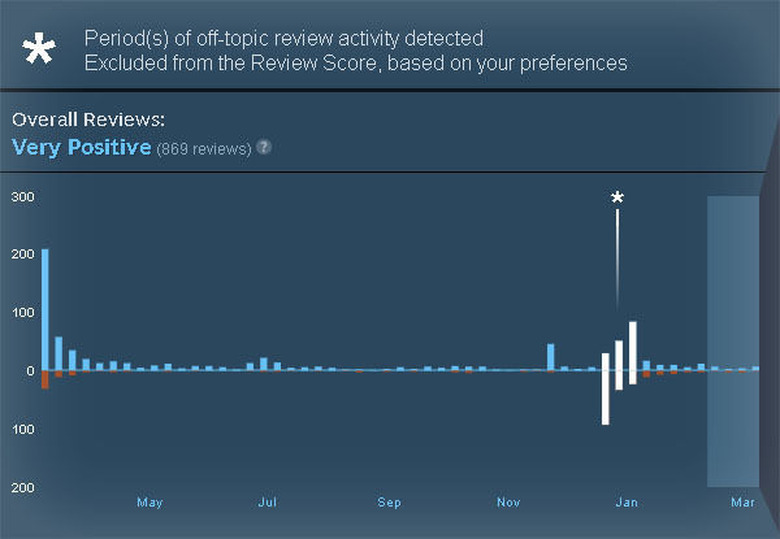

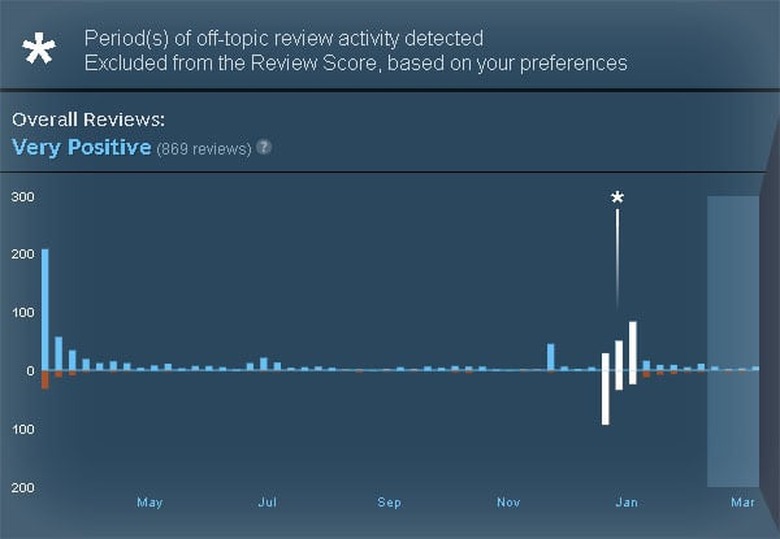

In a blog post Steam explained the plan: "we're going to identify off-topic review bombs, and remove them from the Review Score." In practice, what it has is a tool that monitors reviews in real time to detect "anomalous" activity that suggests something is happening. The tool alerts a team of moderators, who then investigate the reviews. If they find a spate of "off-topic reviews," they'll alert the developer and remove those reviews from the way the game's score is calculated, although the reviews themselves will stay up.

Interestingly, gamers can opt out of the filtered scores, in case they prefer the results calculated with bomb activity included. The post also explained that complaints about issues like DRM could be considered off-topic, and that while the automated tool has been trained on all of the store's previous reviews, Steam will not apply this approach to existing scores and reviews.
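
The score filtering and opt-out described above can be sketched roughly as follows. This is only an illustration, not Steam's actual implementation: the `Review` class, the `flagged_off_topic` field, the `review_score` function and its `include_flagged` parameter are all hypothetical names, and the score is assumed to be a simple fraction of positive reviews.

```python
from dataclasses import dataclass


@dataclass
class Review:
    positive: bool
    # Hypothetical field: set once moderators confirm an off-topic review bomb.
    flagged_off_topic: bool = False


def review_score(reviews, include_flagged=False):
    """Return the fraction of positive reviews, or None if nothing is counted.

    Flagged reviews stay in the list (they remain visible on the store);
    they are only skipped when the score is calculated, unless the user
    has opted out of filtering via include_flagged=True.
    """
    counted = [r for r in reviews if include_flagged or not r.flagged_off_topic]
    if not counted:
        return None
    return sum(r.positive for r in counted) / len(counted)


# Three ordinary positive reviews plus two negative reviews flagged as off-topic.
reviews = [
    Review(positive=True),
    Review(positive=True),
    Review(positive=True),
    Review(positive=False, flagged_off_topic=True),
    Review(positive=False, flagged_off_topic=True),
]

filtered = review_score(reviews)                          # default: bomb excluded
unfiltered = review_score(reviews, include_flagged=True)  # user opted out
```

Both calls read the same list of reviews, which mirrors the point that nothing is deleted: only the calculation changes.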

The last time we heard about significant changes on Steam to fight bombing and spam was in 2017, but the team says this isn't the only adjustment coming.