Watch super slow-mo video from a camera with human-like vision

Researchers used AI to make an "event camera" do amazing tricks.

Conventional video cameras that capture scenes frame by frame have little in common with our eyes, which see the world continuously. A new type of device called an "event camera" works much more like the eye, capturing movement as a constant stream of information. Now, scientists from ETH Zurich are showing the true potential of the sensors by capturing super slow-motion video at up to 5,400 frames per second. The research could lead to inexpensive high-speed cameras and much more accurate machine vision.

Rather than capturing individual frames, event cameras continually register changes in light intensity in a scene. That's not only more economical in terms of data, since static parts of a scene take up no space, but it also means nothing is lost between frames, as happens with a regular camera. That allows for tricks like high-speed photography, improved HDR performance and machine vision that's incredibly fast.
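To make the idea concrete, here is a minimal sketch of how a single pixel might turn brightness changes into events. It assumes the commonly described model in which a pixel fires whenever its log brightness moves by a fixed amount; the function name, the sample data and the `threshold` value are all hypothetical, not taken from any real sensor.

```python
import math

def events_from_samples(samples, threshold=0.2):
    """Turn brightness samples for ONE pixel into (time, polarity) events.

    samples: list of (time, intensity) pairs, intensity > 0.
    threshold: hypothetical contrast threshold on log intensity.
    """
    events = []
    _, first_intensity = samples[0]
    ref = math.log(first_intensity)  # log brightness at the last emitted event
    for t, intensity in samples[1:]:
        diff = math.log(intensity) - ref
        # One event per threshold crossing, so a large jump emits several.
        while abs(diff) >= threshold:
            polarity = 1 if diff > 0 else -1
            events.append((t, polarity))
            ref += polarity * threshold
            diff = math.log(intensity) - ref
    return events

# A pixel looking at a static patch produces no data at all.
static_pixel = [(0, 100.0), (1, 100.0), (2, 100.0)]
# A pixel that brightens and then darkens produces a burst of events.
moving_pixel = [(0, 100.0), (1, 150.0), (2, 60.0)]

print(events_from_samples(static_pixel))
print(events_from_samples(moving_pixel))
```

The static pixel yields an empty list, which is the data saving described above, while the changing pixel yields positive events followed by negative ones.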

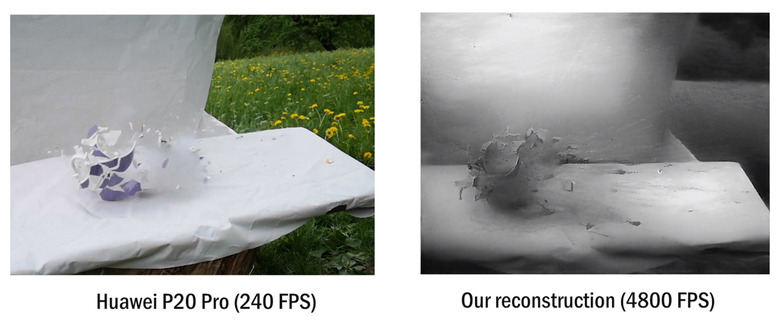

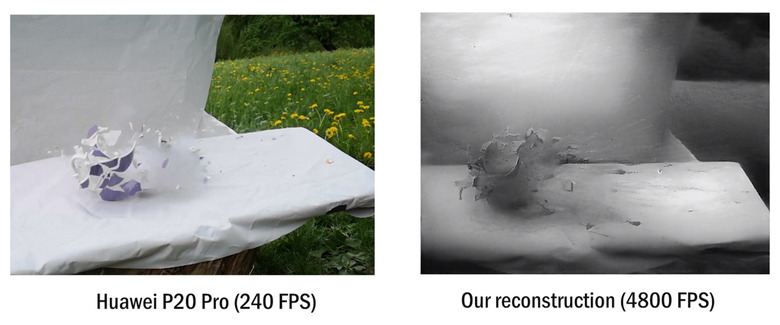

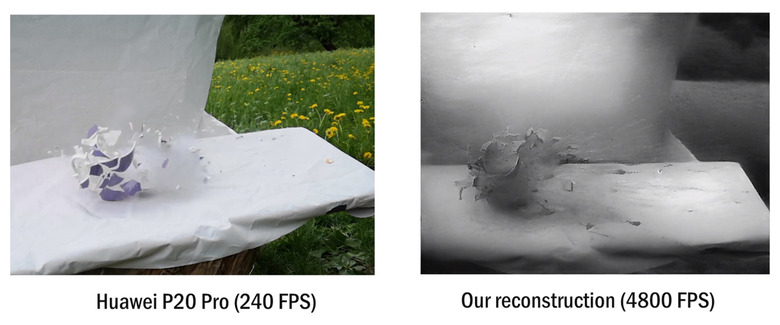

The irony of event cameras is that while they work like our eyes, it's impossible to display the raw data on a screen. First, it needs to be "reconstructed" into a viewable video, which is where the new research comes in. The ETH Zurich team first trained a neural network using simulated event data, rather than trying to extract frames manually. Then, they used the results to create a high-speed network to process event camera data in real time.
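For a sense of what "reconstruction" means, the sketch below shows the simplest possible approach: add up every event at each pixel to recover a brightness image at any chosen moment. This is not the ETH Zurich method, which uses a trained neural network; it is a naive baseline under assumed conditions (a known starting brightness and a fixed contrast `threshold`, both hypothetical), and direct summing like this tends to accumulate sensor noise in practice.

```python
import math

def frame_at(events, t_end, width, height,
             start_intensity=100.0, threshold=0.2):
    """Naively rebuild a brightness image from events up to time t_end.

    events: list of (time, x, y, polarity) with polarity +1 or -1.
    start_intensity and threshold are hypothetical, assumed-known values.
    """
    start_log = math.log(start_intensity)
    log_img = [[start_log] * width for _ in range(height)]
    for t, x, y, polarity in events:
        if t <= t_end:
            # Each event nudges that pixel's log brightness up or down.
            log_img[y][x] += polarity * threshold
    return [[math.exp(v) for v in row] for row in log_img]

# Two brightening events at pixel (0, 0), one darkening event at (1, 0).
sample_events = [(0.001, 0, 0, 1), (0.002, 0, 0, 1), (0.003, 1, 0, -1)]

# Because events carry timestamps, a frame can be rendered at any instant.
early = frame_at(sample_events, t_end=0.0015, width=2, height=1)
late = frame_at(sample_events, t_end=0.0030, width=2, height=1)
print(early)
print(late)
```

Since `t_end` can be any value, the output frame rate is a free choice rather than a property of the sensor, which is what makes very high-speed playback possible in principle.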

The result is 20 percent better image quality than earlier reconstruction methods, all while running in real time. Moreover, the team was able to generate high-speed video at up to 5,400 fps, capturing a bullet hitting a ceramic gnome, for instance. In another example, the algorithm produced high dynamic range video in difficult lighting conditions.

The team showed a few practical applications that were not part of their paper, like object recognition (stop signs and traffic lights) and depth estimation without binocular vision. The research looks particularly promising for autonomous driving and machine vision, allowing vehicles to see and detect objects without processing reams of data. The technology is still in the early stages, but the researchers have released their reconstruction code and a pretrained model so others can give it a try.