The Dreamcast predicted everything about modern consoles

Sega's last console left a powerful legacy.

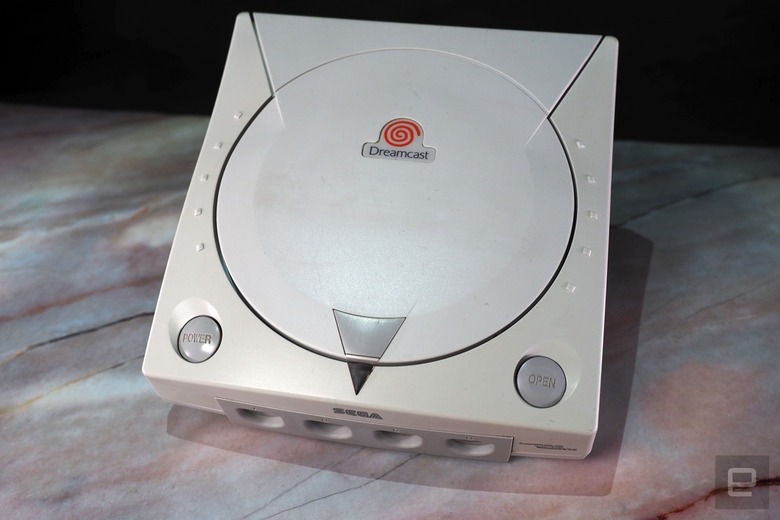

9/9/99. 20 years ago today, the Dreamcast landed in America. And even though it was ultimately an absolute failure, it changed the face of console gaming forever. It brought the power of Sega's arcade hardware into your living room (at a price more palatable than the Neo-Geo). It banked on network connectivity by including a modem. And it even ran Windows! You can draw a clear line from the Dreamcast to today's systems, making it seem even more remarkable in retrospect. Now that we're gearing up for another generation of home gaming systems, it's worth taking a look back at exactly how it foretold our current gaming landscape.

The hardware and software

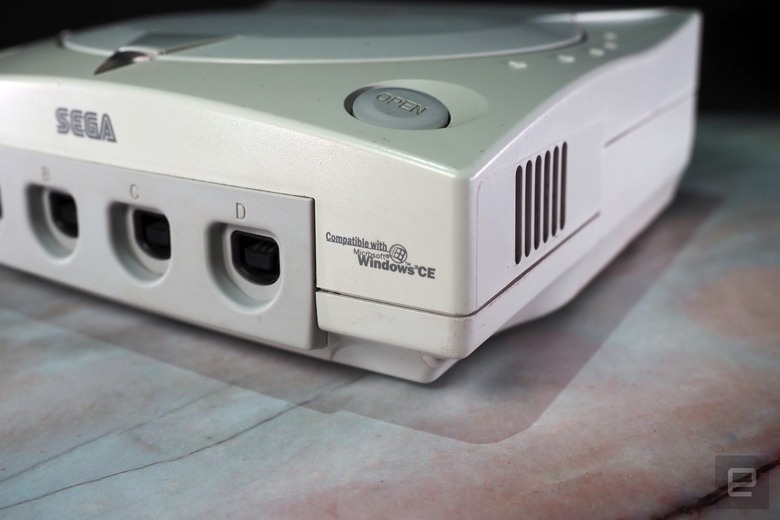

The Dreamcast was powered by a Hitachi SH-4 processor and a PowerVR2 GPU from NEC. Notably, Sega didn't attempt to craft any custom hardware, like it did with the Saturn. Instead, by using standard components, it was able to both reduce costs and make the system easier for the developers to handle. Simpler development was also a reason Sega opted for the Windows CE platform. (Microsoft would later bring Windows 2000 to the Xbox and Windows 10 to the Xbox One.)

While no other console maker has taken the same approach, the Dreamcast was the first mainstream system to use a component you'd typically find in computers: a DirectX-compatible GPU. The original Xbox wasn't far off from a typical PC, with a custom Pentium III CPU and NVIDIA NV2A graphics. With the PlayStation 3 and Xbox 360, Sony and Microsoft opted for custom PowerPC processors and NVIDIA and ATI graphics, respectively. The current generation consoles, meanwhile, are practically running PC gaming hardware, with custom AMD CPUs and GPUs.

Even Nintendo has followed suit. While the Wii U ran a PowerPC processor and AMD graphics, the Switch is powered entirely by NVIDIA's Tegra X1 system on a chip, an ARM-based processor built with set-top boxes and portable hardware in mind. The strategy makes sense: Why should console makers go through the trouble of crafting a complex CPU and GPU of their own, when they can just take advantage of the existing world of PC hardware? Even if they have to tweak things a bit, it's still more efficient to start with something proven, like AMD's oh-so-malleable architecture. Based on what we know about the upcoming systems from Microsoft and Sony, they're not messing with that strategy.

Built-in networking

I'm not sure how I spent hundreds of hours playing Phantasy Star Online on a 56k modem, but I did. And I loved every second of it (okay, not so much the slow dial-in times and random logoffs). Not all games took advantage of the Dreamcast's modem, but the few that did gave us a glimpse of a world where console connectivity would be standard. There was even a version of Quake 3 that offered multiplayer cross-play with PC gamers (those matches went as poorly as you'd expect, though).

Kris Naudus/Engadget

Thinking back on Phantasy Star Online today, a game where you'd venture out with three other people to complete missions and take on enormous boss fights, it was like a preview of the co-op experience we'd get with games like Destiny, Anthem and The Division. The Dreamcast arrived years before I had a PC that could actually play games decently, so for me, and many other gamers, it offered a formative online experience. Nowadays, the only way for me to relive that PSO high is to hop on a private server.

In comparison, online support was practically an afterthought for the PlayStation 2. Sony sold modem and broadband adapter add-ons, but few games took advantage of them. Microsoft, which worked with Sega to implement Windows CE on the Dreamcast, made broadband gaming a key feature of the original Xbox. Ethernet connectivity was built into every console, and with the launch of Xbox Live in 2002, there was finally an easy way for console gamers to play online like their PC brethren. And even without that service, the Xbox was a solid LAN gaming machine (at least, based on the many hours of Halo multiplayer I clocked in college).

While the Dreamcast was certainly prescient about the importance of online gaming, it came a bit too soon. It took a few years after its 1999 launch for broadband to become mainstream in homes, and to take advantage of it on the Dreamcast, you had to buy a separate broadband adapter. Who, but crazed PSO fans like myself, would do that?

It's basically the Xbox 0.5

In a broader sense, it's easy to see how Microsoft learned a few lessons from the Dreamcast. It was a console that failed due to a lack of third-party support, extreme marketing competition from Sony, and, unfortunately, bad timing. While the original Xbox repeated some of those mistakes, come the Xbox 360, it was clear Microsoft finally figured out how to wrestle with Sony.

If you squint a bit, the Xbox 360 even looks like a Dreamcast sequel, with its sleek white case and circular logo. (This 2005 1UP article lays out some truly eerie similarities, including the fact that executive Peter Moore was involved with both consoles' launches.) Xbox Live also evolved into a much more useful service on the Xbox 360, something that was integrated throughout the entire console.

Other noteworthy tidbits:

- The Dreamcast's controller accessory, the VMU, predicted remote app support for games like Destiny and the Wii U's dual-screen play.
- The basic layout of the Dreamcast's controller would also be replicated on Xbox systems and other pads, like Nintendo's Switch Pro Controller.
- It was also the first console to use an optical disc with a higher capacity than CDs, the 1.2 GB GD-ROM.
- With a cable accessory, you could also get 480p output from many games. It wasn't HD, but it was the closest we could get until HDTVs went mainstream around 2005.

If you can't tell by now, the Dreamcast holds a special place in my heart. It was the first console I bought for myself, after toiling away for months as an electronics sales associate at OfficeMax. I also lucked into a pile of excellent games early on, thanks to affiliate sales from my anime fansites. Mostly though, it was the rare gadget that made me feel like I was actually seeing the future first-hand. Twenty years later, turns out I was right.