Disney's new AI is facial recognition for animation

The system could revolutionize how we search and discover streaming content.

Disney's massive archive spans the course of nearly a century of content, which can turn any search for specific characters, scenes or on-screen objects within it into a significant undertaking. However, a team of researchers from Disney's Direct-to-Consumer & International Organization (DTCI) have built a machine learning platform to help automate the digital archival of all that content. They call it the Content Genome.

The CG platform is built to populate knowledge graphs with content metadata, akin to what you see in Google results if you search for Steve Jobs (below). From there, AI applications can then leverage that data to enhance search, discovery and personalization features or as Anthony Accardo, Director of Research and Development at DTCI, told Engadget, help animators find specific shots and sequences from within Disney's archive.

"So if an animator working on a new season of Clone Wars wants to find a specific type of explosion that happened three seasons ago or as a reference to make something for this current season, that person had to spend hours on YouTube going through video because you can't find that by just looking at episode titles." But with the help of this platform, the animator will be able to simply search for the requisite metadata.

The project began in earnest 2016 after a few years of investigation, Accardo said. "It was really about preparing a company like Disney, [which was] operating in a traditional sense for broadcast and home video distribution, for what would we need to take advantage of the differences between a digital video platform with direct access to consumers and the traditional distribution methods."

But building such a system from the ground up is no easy feat. Developing a functional and robust taxonomy is vital, Accardo continued, "especially if you're going to generate a lot of different metadata for a lot of different attributes. You need to start thinking about how you are going to manage those terms and those labels. If you let those taxonomies get out of control, then the resulting data that you generate is going to be hard to take advantage of in any sort of sophisticated, scaled way."

The team then created what it describes as "first automated tagging pipeline," according to a Medium post published Thursday. "Tagging content is an important component of DTCI's use of supervised learning, which is regularly employed in custom use cases that require specific detection," the DTCI team wrote. "Tagging is also the only way to identify a lot of highly contextual story and character information from structured data, like storylines, character archetypes or motivations."

The pipeline leveraged existing facial recognition software, which the DTCI team then applied to its catalog of movies and TV shows. The module was able to successfully detect and recognize human faces from the onscreen action. Following that initial success, the team was able to train the system to detect specific locations as well.

But recognizing a human's face from live video is a far different task than teaching an AI to spot animated faces. "The face of a character in Cars has human properties but it doesn't look like a human face," Miquel Àngel Farré, DTCI's Manager of Research and Development, said. "Therefore, we need something that can learn the abstract concept of 'face,' and with traditional machine learning, it was very complicated. But thanks to deep learning we could achieve that."

The team tried to apply the live-action facial recognition model to animated content but with mixed results. Turns out that the machine learning methods they employed, such as HOG+SVM, work well in picking out color, brightness and texture changes, the team wrote in its Medium post, but it could only pick out human features — two eyes, a nose, and a mouth — if they were within general human proportions. As such, using this system to tag Monsters Inc. was right out.

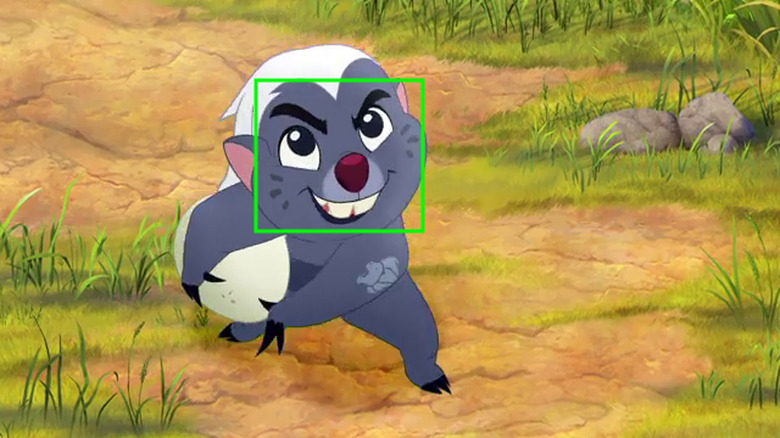

They then annotated a few hundred frames from two Disney Junior animated shows, Elena of Avalor and The Lion Guard, and attempted to train the system using those small samples but that returned disappointing results as well. The team had little other choice than to turn to deep learning methods to train the animated facial recognition system. "For animated characters, it was really one of those things that there's no other way to do it, Farré explained. "It's really what works well."

The problem with that, however, is that deep learning training data sets are massive by nature. So instead, the team used the samples it already had to fine tune a Faster-R CNN Object Detection architecture that had already been trained to detect animated faces using a different, non-Disney dataset. Basically, instead of training up a brand new architecture using huge amounts of Disney content, the team employed the speedier method of taking an existing, already-trained architecture and adapting it to their specific content.

After adjusting the data set slightly to correct for false positive results, the team combined their animated facial recognition detector with other algorithms such as bounding box trackers to shorten the processing time and improve efficiency. "This allowed us to accelerate the processing, as fewer detections are required, and we can propagate the detected faces to all the frames," the team wrote.

The tagging process isn't entirely automated, humans do have oversight over the system's generated results, depending on how that data is being used. "If this is something that's going to power a consumer-facing feature, or a consumer-facing search," Accardo said, "then we would want to make sure that the classifier is trained, highly accurate, and custom to that content. We run those results through our QA platform and have humans QA them."

This technology could prove revolutionary for consumers as well. Since the system can be applied to "all of [Disney's] studios, all of the broadcast networks, everything from ESPN to the feature films to TV networks," as Accardo points out, you would, in theory, be able to search for all episodes in a series containing a specific minor recurring character or prop, or were shot in a specific location, or feature a specific action sequence. Recommendation and discovery engines could become more accurate and efficient in sussing out the sorts of content viewers are looking for without the hamfisted results we see from today's streaming services.

Moving forward, Accardo and the team hope to further expand the system's ability to understand generalized concepts by leveraging multimodal machine learning techniques such as the framework that PyTorch recently released and which the team utilized in its work. "Way back in 2014, 2015, we had this water cooler conversation about automatically identifying an arrest," Accardo explained. "We would do that by using natural language processing against the script, using logo recognition to identify like a badge of a police officer, using all of these different things to identify a concept that is not clearly visible or audible."

But before that can happen, more research and development is necessary. "The thing about machine learning and AI is, the things that are based on understanding all the context, those are more difficult," Accardo said. "You have to start with the clearly identifiable things and then you can move into multimodal machine learning."

"The use of inferencing, the use of knowledge graphs, the use of semantics, to really enrich your ability to automate capturing human context and understanding," he concluded, "that to me is super exciting."