Facebook is using bots to figure out how to stop harassment

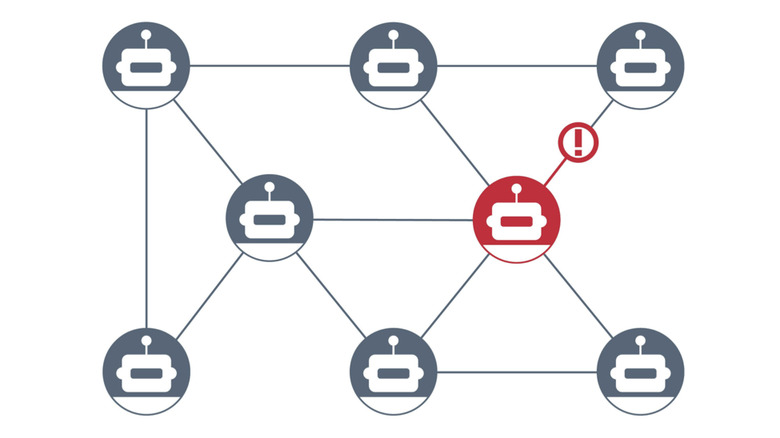

The bots interact in a test Facebook environment.

Facebook says its researchers are developing new technology that they hope will help the platform's AI snuff out harassment. In the Web-Enabled Simulation (WES), an army of bots programmed to mimic bad human behavior is let loose in a test environment, and Facebook engineers then work out the best countermeasures.

WES has three key aspects, Facebook researcher Mark Harman said in a statement. First, it uses machine learning to train bots to simulate the real behavior of people on Facebook. Second, WES can automate bot interactions at a large scale, from thousands to millions. Finally, WES deploys the bots on Facebook's actual production code base, which lets the bots interact with each other and with real content on Facebook while keeping them separate from real users.

In the testing environment, known as WW, the bots take actions such as trying to buy and sell forbidden items like guns and drugs. A bot can use Facebook as a normal person would, conducting searches and visiting pages. Engineers can then test whether the bot can bypass safeguards and violate Community Standards, according to the statement. The plan is for engineers to find patterns in the results of these tests and use that data to test ways to make it harder for users to violate Community Standards.
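To make the idea concrete, here is a toy sketch in Python of the kind of loop described above: simulated seller bots try to post listings for forbidden items, a safeguard tries to block them, and the results are tallied so patterns can be spotted. This is not Facebook's code. The names `SellerBot`, `keyword_filter`, `run_simulation` and the `BANNED_TERMS` list are all hypothetical, and the safeguard is a deliberately naive keyword match chosen only for illustration.

```python
import random
from dataclasses import dataclass

# Hypothetical banned terms; a real safeguard would be far more elaborate.
BANNED_TERMS = {"gun", "drugs"}


def keyword_filter(listing: str) -> bool:
    """Toy safeguard: return True if the listing should be blocked."""
    words = listing.lower().split()
    return any(term in words for term in BANNED_TERMS)


@dataclass
class SellerBot:
    """Toy bot that tries to post a listing for a forbidden item."""
    item: str
    obfuscate: bool

    def make_listing(self) -> str:
        # An obfuscating bot swaps a letter to try to dodge the keyword match.
        name = self.item.replace("u", "v") if self.obfuscate else self.item
        return f"selling {name} cheap"


def run_simulation(num_bots: int, seed: int = 0) -> dict:
    """Run many bots against the safeguard and count the outcomes."""
    rng = random.Random(seed)
    results = {"blocked": 0, "bypassed": 0}
    for _ in range(num_bots):
        bot = SellerBot(
            item=rng.choice(sorted(BANNED_TERMS)),
            obfuscate=rng.random() < 0.5,
        )
        if keyword_filter(bot.make_listing()):
            results["blocked"] += 1
        else:
            results["bypassed"] += 1
    return results


if __name__ == "__main__":
    print(run_simulation(1000))
```

In this sketch, every bot that obfuscates its listing slips past the filter, so the "bypassed" count points directly at a weakness that a stronger safeguard would need to cover. That is the general pattern the article describes: run the bots, see what gets through, then test a fix.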

Facebook has long said it's been developing methods to thwart harassment, criminal activity, misinformation and other types of wrongdoing on the platform. At the 2018 Facebook F8 conference, Chief Technology Officer Mike Schroepfer said the company was investing heavily in artificial intelligence research and finding ways to make it work at a large scale with little to no human supervision. To Facebook's credit, WES appears to be evidence of that.