Facebook shut down 10 fake-account networks over the last month

As usual, every network used different methods to shape opinions.

Between September 22nd and today, Facebook shut down ten networks that violated its policies against coordinated inauthentic behavior, the company announced. Six of the networks were previously disclosed and dismantled, while four were shuttered in the last week alone.

"In each case, the people behind this activity coordinated with one another and used fictitious accounts and personas as a central part of their operations to mislead people about who they are and what they are doing, and that was the basis for our action," said Facebook head of security policy Nathaniel Gleicher in a blog post. "When we investigate and remove these operations, we focus on behavior rather than content, whether they're foreign or domestic, and regardless of who's behind them or what they post."

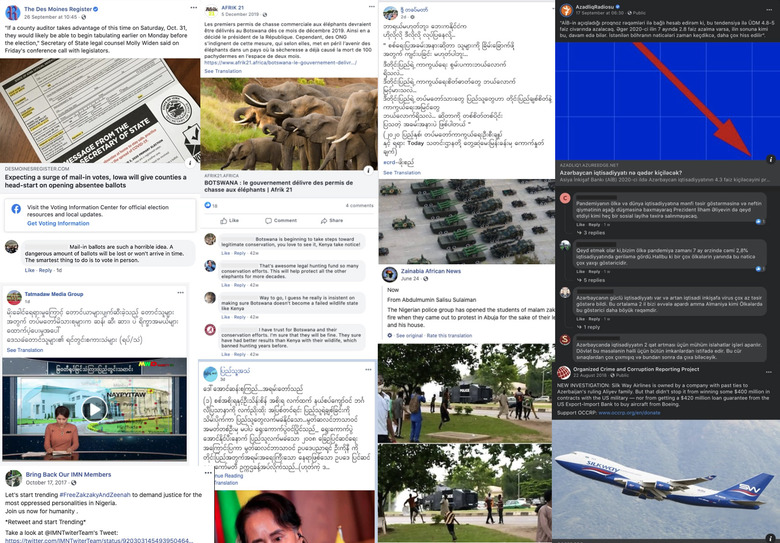

Perhaps the most notable takedown was of a misinformation network made up of 200 Facebook accounts, 55 Facebook pages and 76 Instagram accounts that focused on audiences in the United States, Kenya and Botswana. Rather than posting inflammatory or misleading content of their own, the actors involved used accounts with stock images as profile pictures to comment on stories shared by news organizations and public figures.

"These comments included topics like trophy or sport hunting in the US and Kenya, the midterm elections in 2018, the 2020 presidential election, COVID-19, criticism of the Democratic party and presidential candidate Joe Biden, and praise of President Trump and the Republican party," Gleicher writes. Of the four misinformation networks the company shut down this month, this one spent by far the most on ads. All told, $973,000 was spent across Facebook and Instagram, though Facebook was quick to note that at least some of that money came from "authentic" accounts. Still, a sum that large suggests a bigger backer at work, and Facebook identified it: The network was linked to a US marketing firm called Rally Forge, working on behalf of the Inclusive Conservation Group and the conservative non-profit Turning Point USA.

Update (10/8, 2:46PM): Engadget has received a statement from Turning Point Action, a 501(c)(4) advocacy group, asserting that it, not Turning Point USA, was responsible for the comments in question. (Please note that, while the two organizations are technically distinct, they share a founder and have similar conservative agendas.)

"Facebook's blog post in question was in reference to a project for Turning Point ACTION, a 501c4 and an entirely separate entity. The mistake has been flagged with Facebook's communication team and should be rectified soon. Turning Point ACTION works hard to operate within social platforms' TOS on all of its projects and communications and we hope to work closely with FB to rectify any misunderstanding. All questions regarding the activities of TP ACTION's vendor, Rally Forge, should be directed to that company."

The original story continues below.

Today's report also puts into sharp relief the extent to which misinformation networks are operating outside the United States. Gleicher notes that more than half of the campaigns Facebook shut down between September and this morning focused on domestic audiences in their own countries, and many of them were linked to "groups and individuals associated with politically affiliated actors" in Myanmar, Russia, Nigeria, the Philippines and Azerbaijan, in addition to the United States.

In one such case, a cluster of 79 Facebook accounts, 47 Pages, 93 Groups and 48 Instagram accounts was used in Nigeria to, among other things, signal boost a hashtag supporting Ibrahim Zakzaky, the imprisoned leader of the IMN, an Islamic movement that the Nigerian government has banned and denounced as a terrorist organization. In addition to posting comments and items about protests across Nigeria and the IMN ban, some of these fake accounts posed as news organizations and linked users to their own sites, beyond the reach of Facebook's investigators.

How effective these specific misinformation campaigns were can't be known for sure, but there is little evidence to suggest that inauthentic actors, whether state-sponsored or not, will discontinue their efforts. Facebook continues to be a platform for these campaigns, and since its own staff cannot police the entire platform, the company today laid out a list of principles meant to guide legislation to curtail misinformation. In it, the company calls for greater transparency in political advertising spending, support for the research needed to combat manipulated media like deepfakes, and better sharing of intelligence on potential threats between platforms, the public and the government. You can see the full series of suggestions here.