Google's LaMDA AI can have a 'natural' conversation while pretending to be Pluto

The research could have major implications for Google search and Assistant.

Whether it's talking AI or smarter chatbots, Google has spent the last several years teaching AI how to communicate better with humans. Now, the company is showing off its latest research that could take these efforts to the next level.

The company previewed LaMDA ("Language Model for Dialogue Applications"), research it says represents a "breakthrough conversation technology" that will one day enable people to have natural, open-ended conversations about any topic with Google's AI. The technology is still in a research phase, but it could have huge implications for existing Google products like search and Assistant.

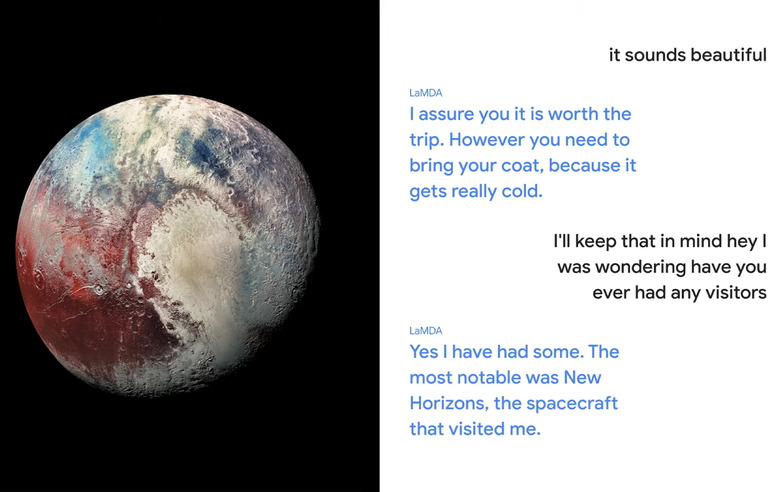

While existing chatbots are often trained on a specific topic or programmed to give canned responses, LaMDA "can engage in a free-flowing way about a seemingly endless number of topics," according to Google. Onstage at Google I/O, Sundar Pichai showed off how LaMDA could take on the persona of Pluto or a paper airplane. In each example, LaMDA was able to respond to questions or comments that don't have a clear answer.

Of course, these types of open-ended experiments don't always produce the desired result. Pichai noted onstage that Google is still fine-tuning LaMDA, as it doesn't always give a coherent or relevant answer. Separately, Google said that it's also working on ways to ensure LaMDA provides accurate information, another potential problem area for the technology.

"We're also exploring dimensions like 'interestingness,' by assessing whether responses are insightful, unexpected or witty," Google explains in a blog post. "Being Google, we also care a lot about factuality (that is, whether LaMDA sticks to facts, something language models often struggle with), and are investigating ways to ensure LaMDA's responses aren't just compelling but correct."

For now, LaMDA is built around text conversations, but Pichai said it could eventually expand to other elements like video and audio. The research could also be incorporated into existing features like search or Google Assistant. For example, it could let users ask Google for directions along a route with mountain views, or ask Google to skip to a specific part of a video, such as "show me the part where the lion roars at sunset."