How AI will change the way we search, for better or worse

Why surf the web when Google will answer most any question directly?

Great news everyone, we're pivoting to chatbots! Little did OpenAI realize when it released ChatGPT last November that the advanced LLM (large language model), designed to uncannily mimic human writing, would become the fastest-growing app to date, with more than 100 million users signing up over the past three months. Its success, helped along by a $10 billion, multi-year investment from Microsoft, largely caught the company's competition flat-footed, in turn spurring a frantic response from Google, Baidu and Alibaba. But as these enhanced search engines come online in the coming days, the ways and whys of how we search are sure to evolve alongside them.

"I'm pretty excited about the technology. You know, we've been building NLP systems for a while and we've been looking every year at incremental growth," Dr. Sameer Singh, Associate Professor of Computer Science at the University of California, Irvine (UCI), told Engadget. "For the public, it seems like suddenly out of the blue, that's where we are. I've seen things getting better over the years and it's good for all of this stuff to be available everywhere and for people to be using it."

As to the recent public success of large language models, "I think it's partly that technology has gotten to a place where it's not completely embarrassing to put the output of these models in front of people — and it does look really good most of the time," Singh continued. "I think that that's good enough."

"I think it has less to do with technology but more to do with the public perception," he continued. "If GPT hadn't been released publicly... Once something like that is out there and it's really resonating with so many people, the usage is off the charts."

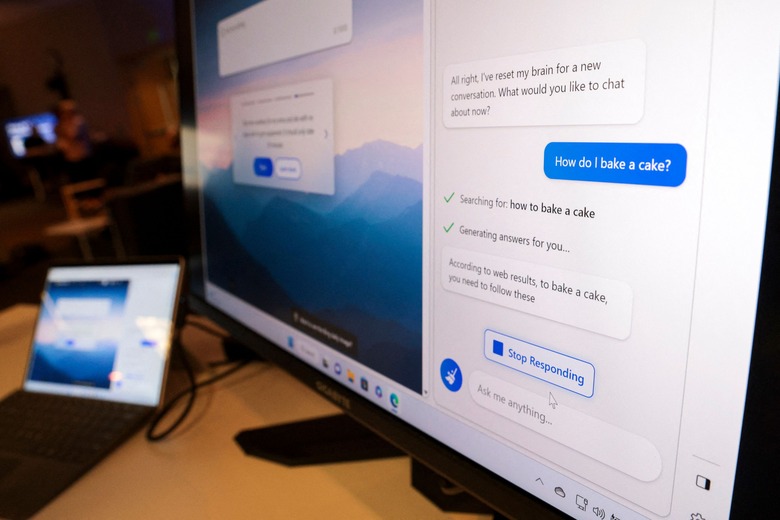

Search providers have big, big ideas for how artificial intelligence-enhanced web crawlers and search engines might work, and damned if they aren't going to move fast and break stuff to get there. Microsoft envisions its Bing AI serving as the user's "copilot" in their web browsing, following them from page to page, answering questions and even writing social media posts on their behalf.

This is a fundamental change from the process we use today. Depending on the complexity of the question, users may have to visit multiple websites, then sift through the collected information and stitch it together into a cohesive idea before evaluating it.

"That's more work than having a model that hopefully has read these pages already and can synthesize this into something that doesn't currently exist on the web," Brendan Dolan-Gavitt, Assistant Professor in the Computer Science and Engineering Department at NYU Tandon, told Engadget. "The information is still out there. It's still verifiable, and hopefully correct. But it's not all in place."

For its part, Google's vision of the AI-powered future has users hanging around its search page rather than clicking through to destination sites. Information relevant to the user's query would be collected from the web, stitched together by the language model, then regurgitated as an answer, with references to the originating websites displayed as footnotes.

This all sounds great, and was all going great, right up to the very first opportunity for something to go wrong, when it did. In its inaugural Twitter ad (less than 24 hours after debuting), Bard, Google's answer to ChatGPT, confidently declared, "JWST took the very first pictures of a planet outside of our own solar system." You will be shocked to learn that the James Webb Space Telescope did not, in fact, take the first picture of an exoplanet. The ESO's Very Large Telescope has held that honor since 2004. Bard just sorta made it up. Hallucinated it out of the digital ether.

Bard is an experimental conversational AI service, powered by LaMDA. Built using our large language models and drawing on information from the web, it's a launchpad for curiosity and can help simplify complex topics → https://t.co/fSp531xKy3 pic.twitter.com/JecHXVmt8l

— Google (@Google) February 6, 2023

Of course, this isn't the first time that we've been lied to by machines. Search has always been a bit of a crapshoot, ever since the early days of Lycos and AltaVista. "When search was released, we thought it was 'good enough' though it wasn't perfect," Singh recalled. "It would give all kinds of results. Over time, those have improved a lot. We played with it, and we realized when we should trust it and when we shouldn't — when we should go to the second page of results, and when we shouldn't."

The subsequent generation of voice AI assistants evolved through the same base issues that their text-based predecessors did. "When Siri and Google Assistant and all of these came out and Alexa," Singh said, "they were not the assistants that they were being sold to us as."

The performance of today's LLMs, like Bard and ChatGPT, is likely to improve along similar paths through their public use, as well as through further specialization into specific technical and knowledge-based roles such as medicine, business analysis and law. "I think there are definitely reasons it becomes much better once you start specializing it. I don't think Google and Microsoft specifically are going to be specializing it too much — their market is as general as possible," Singh noted.

In many ways, what Google and Microsoft are offering by interposing their services in front of the wider internet, much as AOL did with the America Online service in the '90s, is a logical response to the challenges facing today's internet users.

"Nobody's doing the search as the end goal. We are seeking some information, eventually to act on that information," Singh argues. "If we think about that as the role of search, and not just search in the literal sense of literally searching for something, you can imagine something that actually acts on top of search results can be very useful."

Singh characterizes this centralization of power as "a very valid concern. Simply put, if you have these chat capabilities, you are much less inclined to actually go to the websites where this information resides," he said.

It's bad enough that chatbots have a habit of making broad intellectual leaps in their summarizations, but the practice may also "incentivize users not [to] go to the website, not read the whole source, to just get the version that the chat interface gives you and sort of start relying on it more and more," Singh warned.

In this, Singh and Dolan-Gavitt agree. "If you're cannibalizing from the visits that a site would have gotten, and are no longer directing people there, but using the same information, there's an argument that these sites won't have much incentive to keep posting new content," Dolan-Gavitt told Engadget. "On the other hand, the need for clicks also is one of the reasons we get lots of spam and is one of the reasons why search has sort of become less useful recently. I think [the shortcomings of search are] a big part of why people are responding more positively to these chatbot products."

That demand, combined with a nascent marketplace, is resulting in a scramble among the industry's major players to get their products out yesterday, ready or not, underwhelming or not. That rush for market share is decidedly hazardous for consumers. Microsoft's previous foray into AI chatbots, 2016's Tay, ended poorly (to put it without the white hoods and goose stepping). Today, Redditors are already jailbreaking ChatGPT to generate racist content. These are two of the more innocuous challenges we will face as LLMs expand in use, but even they have proven difficult to stamp out, in part because they require coordination among an industry of vicious competitors.

"The kinds of things that I tend to worry about are, on the software side, whether this puts malicious capabilities into more hands, makes it easier for people to write malware and viruses," Dolan-Gavitt said. "This is not as extreme as things like misinformation but certainly, I think it'll make it a lot easier for people to make spam."

"A lot of the thinking around safety so far has been predicated on this idea that there would be just a couple kinds of central companies that, if you could get them all to agree, we could have some safety standards," Dolan-Gavitt continued. "I think the more competition there is, the more you get this open environment where you can download an unrestricted model, set it up on your server and have it generate whatever you want. The kinds of approaches that relied on this more centralized model will start to fall apart."