Snapchat is hoping lens creators can make augmented reality useful

Just don't call it a metaverse.

While Facebook is still trying to explain what a metaverse is, Snap has been working toward a very different vision for an AR-enabled future. Over the last four years, the company has recruited a small army of artists, developers and other AR enthusiasts to help build out its massive library of in-app AR effects.

Now Snap is tapping lens creators — it has more than 250,000 — to help it make AR more useful. The company is releasing new tools that allow artists and developers to essentially make mini apps inside of their lenses. And it's working on getting its latest AR innovation into more of their hands: its next-generation Spectacles, which have AR displays.

The goal, according to Sophia Dominguez, Snap's head of AR platform partnerships, is to expand the ways AR can be used, while keeping those experiences firmly rooted in the real world — not the metaverse. "You don't want to escape into another world," she says of Snap's philosophy. "To us, the world around us is magical, and there's things to learn from it." Instead, she says, the company is looking to enable experiences that can "bridge" digital spaces and physical ones, and create "more useful applications" for AR.

For now, Snap is leaving it up to creators to figure out exactly what a "useful" augmented reality lens is, but the company is introducing a number of new tools to help them build it. At its annual Lens Fest event, Snap is also introducing a new version of Lens Studio, the software that allows developers to create and publish lenses inside of Snapchat.

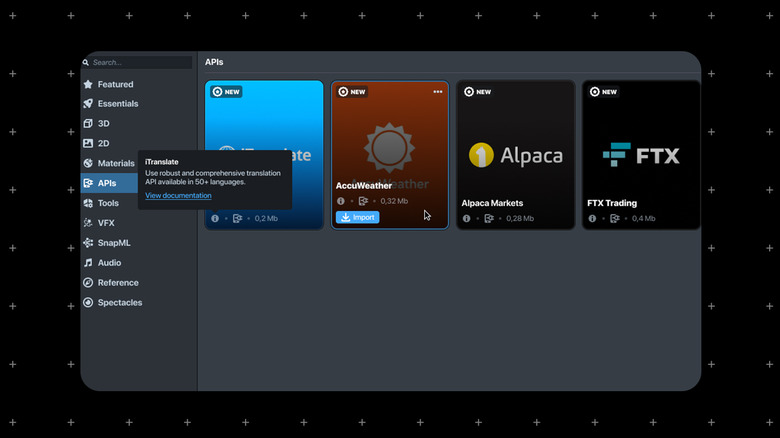

The latest version includes new APIs that allow AR creators to connect their effects to real-time information. The resulting lenses are almost like mini apps inside Snapchat. For example, creators can build lenses with real-time translation capabilities via iTranslate, or lenses that let users check on their preferred cryptocurrency, powered by crypto platform FTX. Weather data (via AccuWeather) and stock market info (from Alpaca) will also be available, and the company is planning to add more partners in the near future.

These kinds of lenses are even more intriguing in the context of Snapchat's augmented reality glasses, its "next-gen" Spectacles. Snap first showed off the glasses earlier this year, saying that the device would only be available to a small number of AR creators and developers. Since then, the company has handed out its latest Specs to hundreds of creators, who have been helping Snap figure out how far they can push the tech, and what kinds of new experiences AR glasses can enable.

That's because with Spectacles, a "lens" doesn't just have to be something that goes on top of a selfie — it can add contextual information to the world around you. For example, one creator experimenting with Spectacles recently showed off a concept where staring out a train window can surface details about where you are and what the weather is.

On a train trip today, I wanted to know what cities I'm passing by, so I created an #AR lens for @Spectacles to tell me where I am. pic.twitter.com/98Wud3i7K3

— Vova Kurbatov (@V_Kurbatov) November 29, 2021

Nike created a Spectacles lens that allows runners to follow an AR pigeon along their route, and view different animations along the way.

Nike + @snapchat AR = no more boring runs.

What do you think @EliudKipchoge? 🏃♀️🏃 pic.twitter.com/dB0Ik91DZB

— Nike.com (@nikestore) November 5, 2021

Creator Brielle Garcia, who has also been experimenting with Spectacles, recently previewed a concept for an AR menu that allows users to view 3D models of meals on a restaurant menu. Other creators are experimenting with interactive shopping and gaming lenses.

"When you think about what's gonna get people to put glasses on their face every single day, those are the things today you're checking your apps for," Dominguez said. "We're really excited to see the different UIs that people can create in augmented reality with this kind of utility."

All that comes with an important caveat: the AR Spectacles aren't for sale, and likely won't be anytime soon, because of some pretty significant hardware limitations. Battery life is extremely limited (the charging case provides four extra charges) and the glasses themselves are, well, ugly.

While previous iterations of Spectacles look and feel like sunglasses, the next-gen Specs are comically large. Every time I see them, all I can think of is the chunky black frames worn by Roddy Piper in They Live. And, after spending some time wearing them, I can confirm that they are even more ridiculous looking when you put them on your face.

That said, Snap has been clear from the get-go that this version isn't intended to look good, or even like something people will want to buy. Rather, the goal is to enable new types of AR development.

And, despite the looks, their capabilities are impressive. The frames are equipped with "3D waveguides," which power the AR displays, as well as dual cameras, speakers and microphones. On the left side is a capture button, so you can snap a photo or video of your surroundings, and on the right is a "scan" button. Much like the feature of the same name in the Snapchat app, scanning can help you find lenses based on your surroundings.

I only got to experiment with a handful of AR lenses while wearing the Specs, but the process was strikingly similar to using lenses in Snapchat's app. I was able to scroll through a selection of lenses by swiping along a touchpad on the outside of the frames, then place a lens into my surroundings to see the AR effects around me. Like other AR headsets, the field of view is narrow enough that it's not fully immersive, but I was impressed by the resolution and brightness of what I saw.

"'I've worked on each generation of Spectacles and this one is by far the most fun," says David Meisenholder, a senior product designer at Snap, pointing to the company's close collaboration with its creator community. "We're also learning how much we need to go to make those perfect for the consumer glasses of the future."