Facebook's face-recognition tech is almost as good at Stallone-spotting as you are

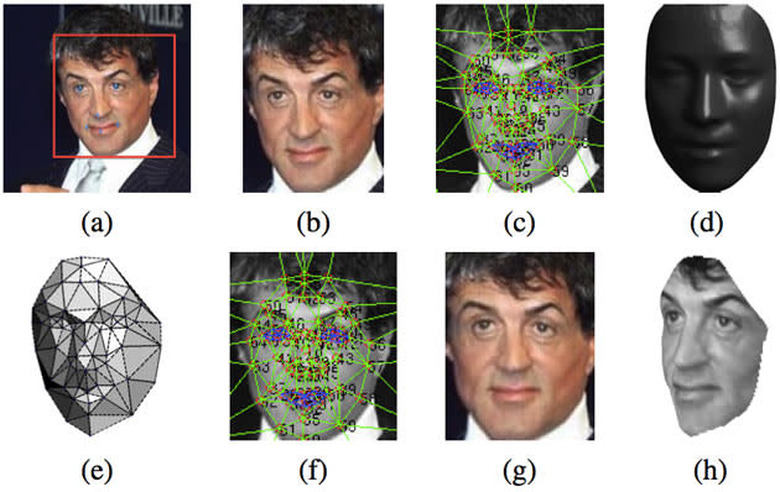

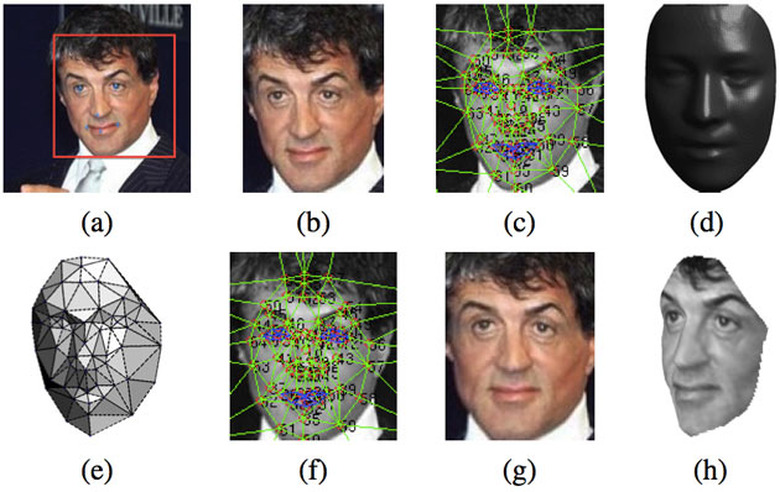

Facebook's long been interested in facial recognition, as the photo tag-suggestion feature that didn't go down too well in Europe shows. The Zuck's social network also gobbled up a face-recognition outfit in 2012, but it's Facebook's AI research team that's made headway recently with technology that's almost as good as us meatsacks at identifying mugs. Known as DeepFace, the system uses a "nine-layer deep neural network" that's been taught to pick up on patterns by looking at over 4 million photos of more than 4,000 people. We're not as up-to-date with complex machine learning techniques as we should be, either, but the main reason DeepFace is so accurate is its method of "frontalization" — or, creating a front-facing portrait from a more dynamic source image.

As in the Sly storyboard above, the tech maps facial features, combines them with a generic 3D mask, and spits out a model that can be rotated so the subject faces the camera head-on. It can then simply compare the output with other images generated in the same way, silencing troublesome differences in lighting, expression, face position and the like as best it can. And it does an extremely good job, with 97.25 percent recognition accuracy on a standardized test where humans score just over 97.5 percent. DeepFace is just an academic endeavor for now, but it would seem like a wasted effort if Facebook didn't make use of all this work in the future. After all, we're all looking forward to being auto-tagged in the background of poorly lit pictures with our best nightclub-faces on, aren't we?
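
For the curious, the align-then-compare idea can be sketched in a few lines of Python. To be clear, this is a flat 2D stand-in and not DeepFace's actual 3D frontalization or its neural network: it assumes each face is already reduced to five landmark points, and the template coordinates and the `align_to_template`, `mean_distance` and `pose` helpers are made up purely for illustration. It shows only why comparing faces after pulling them into a common pose is easier than comparing the raw photos.

```python
import cmath
import math

# Hypothetical "frontal" template: two eyes, nose tip, two mouth corners,
# stored as complex numbers (x + yj). Coordinates are invented for this sketch.
TEMPLATE = [complex(-1, 1), complex(1, 1), complex(0, 0),
            complex(-0.7, -1), complex(0.7, -1)]


def align_to_template(points, template=TEMPLATE):
    """Fit the least-squares rotation, scale and shift that maps points onto the template."""
    n = len(points)
    p_mean = sum(points) / n
    t_mean = sum(template) / n
    # In complex form, rotation plus scale is a single multiplier `a`.
    num = sum((t - t_mean) * (p - p_mean).conjugate()
              for p, t in zip(points, template))
    den = sum(abs(p - p_mean) ** 2 for p in points)
    a = num / den
    b = t_mean - a * p_mean
    return [a * p + b for p in points]


def mean_distance(points_a, points_b):
    """Average distance between corresponding landmarks."""
    return sum(abs(a - b) for a, b in zip(points_a, points_b)) / len(points_a)


def pose(points, angle_deg, scale, shift):
    """Simulate a differently framed photo: rotate, scale and shift the landmarks."""
    rot = cmath.rect(scale, math.radians(angle_deg))
    return [rot * p + shift for p in points]


# Two "photos" of the same face, shot at different angles, sizes and positions.
photo_a = pose(TEMPLATE, 25, 1.8, complex(40, 12))
photo_b = pose(TEMPLATE, -15, 0.6, complex(-5, 30))

raw_gap = mean_distance(photo_a, photo_b)
aligned_gap = mean_distance(align_to_template(photo_a),
                            align_to_template(photo_b))

print(f"gap before alignment: {raw_gap:.3f}")
print(f"gap after alignment:  {aligned_gap:.3e}")
```

Before alignment the two landmark sets sit far apart even though they belong to the same face; after alignment the gap all but vanishes, which is the intuition behind posing every face the same way before comparing.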