Brain-like circuit performs human tasks for the first time

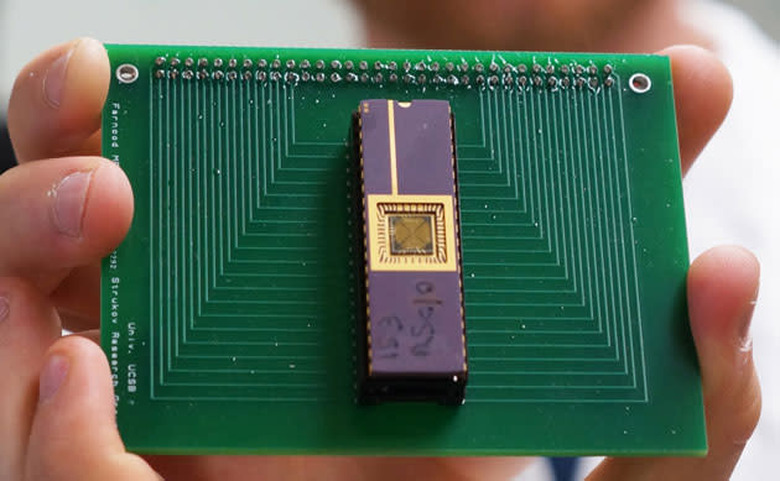

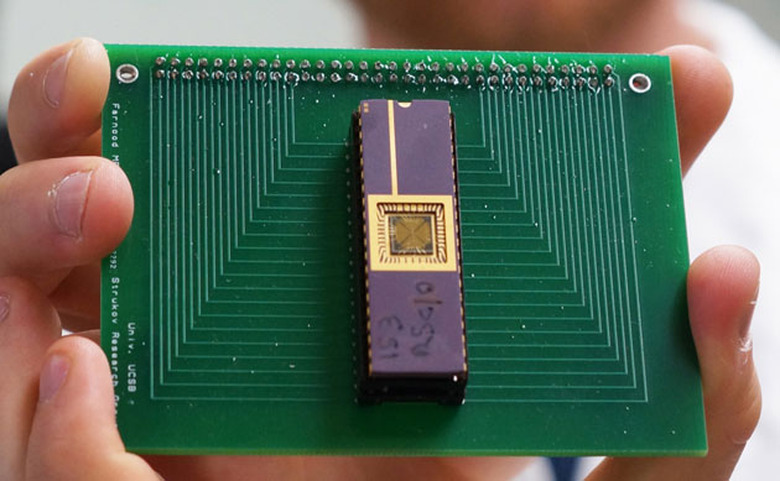

There are already computer chips with brain-like functions, but having them perform brain-like tasks? That's another challenge altogether. Researchers at UC Santa Barbara aren't daunted, however — they've used a basic, 100-synapse neural circuit to perform the typical human task of image classification for the first time. The memristor-based technology (which, by its nature, behaves like an 'analog' brain) managed to identify letters despite visual noise that complicated the task, much as you would spot a friend on a crowded street. Conventional computers can do this, but they'd need a much larger, more power-hungry chip to replicate the same pseudo-organic behavior.
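For readers curious what "image classification" means at this tiny scale, here is a rough software analogy, not the researchers' method. It is a minimal Python sketch of a single-layer network (a multiclass perceptron) that learns to tell three 3x3-pixel letters apart even when one pixel is flipped. The letter shapes, the network size (30 weights rather than the circuit's 100 synapses), the training rule and the function names are all assumptions made for illustration. In a memristor circuit, the weights would typically be stored as device conductances rather than as numbers in memory.

```python
import numpy as np

# Hypothetical 3x3 letter images (1 = dark pixel), flattened row by row.
# These shapes are illustrative, not the patterns used in the UCSB experiment.
LETTERS = {
    "z": [1, 1, 0,
          0, 1, 0,
          0, 1, 1],
    "v": [1, 0, 1,
          1, 0, 1,
          0, 1, 0],
    "n": [0, 1, 0,
          1, 0, 1,
          1, 0, 1],
}
CLASSES = list(LETTERS)


def with_bias(pixels):
    # Append a constant input so each output neuron also learns a threshold.
    return np.append(np.asarray(pixels, dtype=float), 1.0)


def single_flips(pixels):
    # Every copy of the image with exactly one pixel inverted ("visual noise").
    for i in range(len(pixels)):
        noisy = list(pixels)
        noisy[i] = 1 - noisy[i]
        yield noisy


def classify(weights, pixels):
    # One row of weights per output neuron. In a memristor crossbar the same
    # weighted sums would appear as currents: input voltages times conductances.
    return CLASSES[int(np.argmax(weights @ with_bias(pixels)))]


def train(max_epochs=1000):
    # Training set: each clean letter plus all of its single-pixel-noise variants.
    examples = [(p, label) for label, clean in LETTERS.items()
                for p in [clean, *single_flips(clean)]]
    weights = np.zeros((len(CLASSES), 10))  # 3 neurons x (9 pixels + bias) = 30 weights
    for _ in range(max_epochs):
        mistakes = 0
        for pixels, label in examples:
            guess = classify(weights, pixels)
            if guess != label:
                # Multiclass perceptron rule: strengthen the correct neuron's
                # weights for this input and weaken the wrongly winning one.
                x = with_bias(pixels)
                weights[CLASSES.index(label)] += x
                weights[CLASSES.index(guess)] -= x
                mistakes += 1
        if mistakes == 0:  # a full pass with no errors: training has settled
            break
    return weights


if __name__ == "__main__":
    w = train()
    noisy_z = [1, 1, 0,
               0, 1, 1,   # one stray dark pixel
               0, 1, 1]
    print(classify(w, noisy_z))
```

The perceptron rule is used here only because it is simple and is guaranteed to settle on well-separated patterns like these; the article doesn't say how the UCSB circuit was trained.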

This is far from ushering in truly complex neural computers that replicate human intelligence down to a T. You'd need many more artificial synapses to achieve tasks that require a subtler understanding of what's going on, like recognizing what should be in a scene based on what's there. That should only be a matter of time, though, and the scientists believe there's an interim step in the near future where a memristor network pairs with a traditional processor to perform more sophisticated tasks. Don't be surprised if you eventually see phones with genuinely smart navigation apps, or medical imaging systems that identify conditions which would otherwise be hard to find.

[Image credit: Sonia Fernandez]