Super-sharp 3D cameras may come to your smartphone

A breakthrough makes even the tiniest 3D sensor up to 1,000 times sharper.

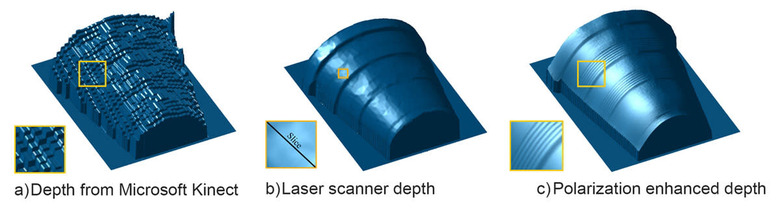

Many 3D cameras and scanners produce rough images, especially as they get smaller and cheaper. You often need a big laser scanner just to get reasonably accurate results. If MIT researchers have their way, though, even your smartphone could capture 3D images you'd be proud of. They've developed a technique that uses polarized light (the same effect that polarized sunglasses rely on) to increase the resolution of 3D imaging by up to 1,000 times. Their approach combines Microsoft's Kinect (or a similar depth camera), a polarizing filter in front of the camera lens and algorithms that build the image from the light intensity measured across multiple shots. The result is an imager that spots details just hundreds of micrometers across, so you'd be hard-pressed to notice any imperfections.
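To give a rough feel for what "light intensity from multiple shots" can tell you, here is a minimal sketch of a textbook polarization calculation. It is not the MIT team's algorithm, which the article doesn't detail. It assumes three shots of the same pixel taken through a linear polarizing filter at 0, 45 and 90 degrees, and the function names are made up for illustration.

```python
import math


def polarization_from_three_shots(i0, i45, i90):
    """Estimate polarization cues at one pixel.

    i0, i45, i90 are intensities measured through a linear polarizer
    rotated to 0, 45 and 90 degrees (an assumed capture setup).
    Returns (degree_of_polarization, angle_in_radians).
    """
    s0 = i0 + i90          # total intensity
    s1 = i0 - i90          # horizontal vs. vertical preference
    s2 = 2.0 * i45 - s0    # diagonal preference
    if s0 <= 0:
        return 0.0, 0.0    # no light, nothing to estimate
    degree = math.hypot(s1, s2) / s0
    angle = 0.5 * math.atan2(s2, s1)
    return degree, angle


def simulate_reading(total, degree, angle, filter_angle):
    """Idealized intensity behind a linear polarizer at filter_angle."""
    return 0.5 * total * (1.0 + degree * math.cos(2.0 * (filter_angle - angle)))


# Round trip: simulate three shots of partially polarized light, then recover it.
true_degree, true_angle = 0.3, math.radians(20)
shots = [simulate_reading(100.0, true_degree, true_angle, math.radians(a))
         for a in (0, 45, 90)]
est_degree, est_angle = polarization_from_three_shots(*shots)
```

How strongly and in which direction reflected light is polarized depends on the tilt of the surface it bounced off, which is why cues like these can add fine detail to a coarse depth map from something like a Kinect.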

The concept would need significant tweaking for a smartphone-sized 3D camera, since it revolves around a mechanically rotated filter. You'd likely have to use grids of polarization filters over the sensor instead, and that would drop the effective resolution because each filter orientation only gets a share of the pixels. You wouldn't get 23-megapixel cameras for a while, folks. However, it would still be good enough that you could snap a photo of an object with your phone and get a high-quality 3D printout. The technology could also be a boon to self-driving cars, which could effectively cut through rain or snow to get an accurate view of where they're going. No matter what, the days of crude 3D pictures might soon come to an end.
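The resolution hit from a filter grid is easy to see in a small sketch. The one below assumes a hypothetical repeating 2x2 tile of filter orientations (0 and 45 degrees on even rows, 135 and 90 degrees on odd rows). The article doesn't say what layout a phone sensor would use, so treat the layout and the function name as illustrative only.

```python
def split_mosaic(raw):
    """Split a raw frame into one sub-image per filter orientation.

    raw is a list of pixel rows. The 2x2 tile layout assumed here is:
        even rows:  0 deg, 45 deg, 0 deg, 45 deg, ...
        odd rows: 135 deg, 90 deg, 135 deg, 90 deg, ...
    """
    return {
        0: [row[0::2] for row in raw[0::2]],
        45: [row[1::2] for row in raw[0::2]],
        135: [row[0::2] for row in raw[1::2]],
        90: [row[1::2] for row in raw[1::2]],
    }


def pixel_count(image):
    """Total number of pixels in a list-of-rows image."""
    return sum(len(row) for row in image)


# A tiny 4x4 "sensor" whose pixel values are just their index.
raw = [[r * 4 + c for c in range(4)] for r in range(4)]
subs = split_mosaic(raw)
counts = {angle: pixel_count(img) for angle, img in subs.items()}
```

Under this assumed layout, each orientation ends up with a quarter of the sensor's pixels, so a 23-megapixel sensor would leave under 6 megapixels per orientation.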