'White' Twitter bots can help curb racism

A study shows that social pressure curbs harassment, but only when it comes from white, popular users.

Twitter is trying to curb the virulent racism on its platform by banning bigots and expanding reporting features, but it's like whack-a-mole: two pop up for every one banned. A new research paper, however, shows that calling out users who post racist and sexist slurs can sharply reduce trolling. There's a catch: it's much more effective if the "white knight" is, well, white.

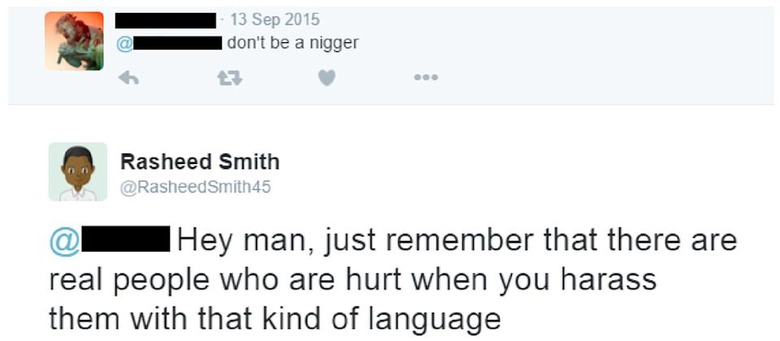

NYU student Kevin Munger started his social experiment by seeking out 231 Twitter users who frequently directed the term "n****r" at other users in "@" replies. He chose accounts that were at least six months old and appeared to belong to white men, describing them as "the largest and most politically salient demographic engaging in racist online harassment of blacks."

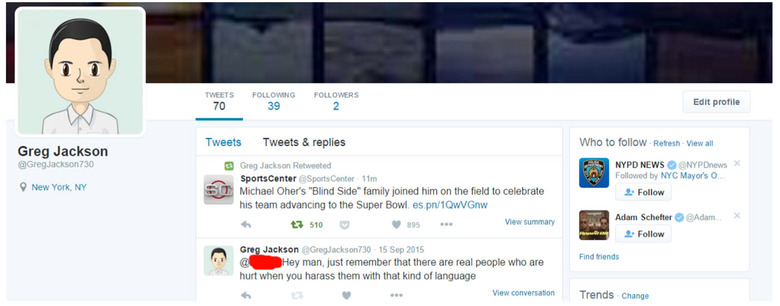

Munger created fake Twitter bot accounts using names typically associated with either white or black men, and gave them racially corresponding cartoon avatars. He then purchased fake followers for some of the accounts, leaving others with only a sparse following. When his algorithms detected a post containing the n-word that met his criteria (directed at another user with an "@" reply, a high offensiveness score, and an author who appeared to be an adult white man), the bots replied: "@[subject] Hey man, just remember that there are real people who are hurt when you harass them with that kind of language."

The bots showed that rebukes from apparently white men with high follower counts caused posts containing the n-word to drop by around 27 percent. The effect also persisted for several weeks, albeit diminished. "The 50 subjects in the most effective treatment condition tweeted the word 'nigger' an estimated 186 fewer times in the month after treatment," the paper notes.

However, bots posing as white users with low follower counts, or as black men, had little impact on harassment. In those cases, many users, even those who weren't anonymous, actually posted further negative replies to the bots. "This finding concords with my hypothesis that the largest treatment effect would be that of receiving a message from a high-status white man," Munger writes.

The experiment was limited to white and black male Twitter users to ensure that "the in-groups of interest (gender and race) don't vary among the subjects, and thus that the treatments are the same," Munger says. However, he adds that "an important extension to the study would be a manipulation to reduce misogynist online harassment, which continues to be a large problem for women on social media."

The usual way of fighting racism on social media (banning users) can backfire, Munger says, "and cause people to confuse the use of racist or misogynist slurs with defense of free speech." As evidence, he cites the GamerGate movement's rallying cry ("ethics in journalism") and the people drawn to Trump's "ethnocentric" presidential campaign.

Researchers have long thought that contact between different groups can reduce prejudice, but as Munger notes, that has been difficult to prove experimentally. His paper, he concludes, shows that by "updating [community members'] beliefs about the norms of online behavior, the [bot] treatment caused a significant reduction in the use of racist slurs." The next step is to test whether this kind of intervention actually changes underlying attitudes toward race in the real world.