New MIT tech lets you mess with objects in pre-recorded video

Yes, they’ve already successfully tested it in ‘Pokémon Go.’

Despite everything going on within the frame, watching video is still a passive experience. You can't reach in and mess with the objects you're watching, at least until now. An MIT researcher has pioneered new technology that lets you "touch" recorded things, which are simulated to respond as if you'd fiddled with them in the real world.

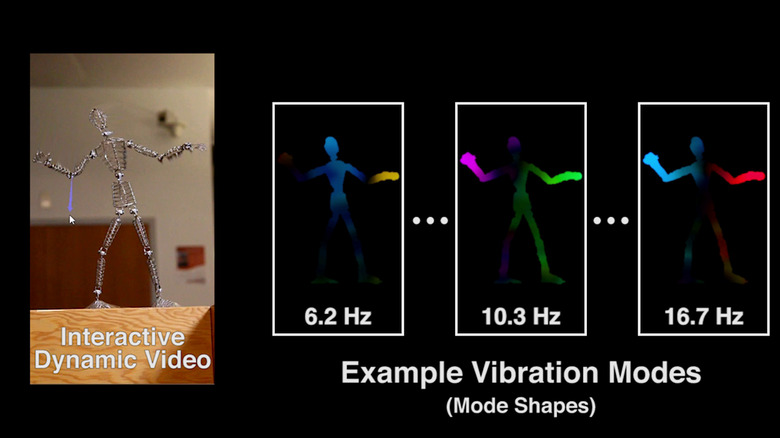

So how do you predict which ways an already-recorded object will move when tweaked? The system, called "Interactive Dynamic Video" (IDV), needs less than a minute of footage to track movement possibilities. It does so by analyzing how the object shifts when intentionally jostled: In the video example below, a researcher slams the table on which a humanoid figure is resting, which lets the system see how the figure vibrates across different frequencies. Then it extrapolates how the item should behave when viewers reach in with their cursors and jostle the object in the video.
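For the curious, the two steps described above (find the frequencies at which an object vibrates, then predict how it responds to a new push) can be sketched in a few lines of Python. This is a toy illustration, not the researchers' code: it assumes a single tracked point whose per-frame displacement has already been measured, it keeps only one vibration frequency, and the function names, the synthetic trace and the damping value are all made up for the example.

```python
import cmath
import math


def dominant_frequency(samples, fps):
    """Return the strongest non-zero frequency (in Hz) in a displacement trace."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove the resting position
    best_k, best_mag = 1, 0.0
    # Naive discrete Fourier transform over the positive frequency bins.
    for k in range(1, n // 2 + 1):
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * t / n)
            for t, x in enumerate(centered)
        )
        if abs(coeff) > best_mag:
            best_k, best_mag = k, abs(coeff)
    return best_k * fps / n


def simulate_poke(freq_hz, damping, impulse, fps, n_frames):
    """Per-frame displacement of a damped oscillator after a push at frame 0."""
    omega = 2 * math.pi * freq_hz
    omega_d = omega * math.sqrt(1 - damping ** 2)  # damped angular frequency
    return [
        (impulse / omega_d)
        * math.exp(-damping * omega * (i / fps))
        * math.sin(omega_d * (i / fps))
        for i in range(n_frames)
    ]


# Synthetic stand-in for a tracked point: two seconds of 60 fps footage
# with a strong 3 Hz wobble and a weaker 7 Hz one (assumed values).
fps = 60
trace = [
    1.0 * math.sin(2 * math.pi * 3 * t / fps)
    + 0.3 * math.sin(2 * math.pi * 7 * t / fps)
    for t in range(120)
]

freq = dominant_frequency(trace, fps)
# Assumed damping ratio of 0.05; a real system would have to estimate it.
response = simulate_poke(freq, damping=0.05, impulse=1.0, fps=fps, n_frames=120)
```

Here `freq` is the wobble the footage reveals, and `response` is the predicted motion when a viewer "pokes" the object: a swing at that frequency that gradually dies away. A real system would have to do this for many points and many frequencies at once.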

Obviously this could have many applications in entertainment and education, but the researchers wanted a more topical example — so they inserted the system into Pokémon Go's augmented reality. With IDV, the environment automatically reacts to the pocket monsters' movements, making it seem like the wee beasts are actually bending leaves and grass as they bounce around.

The simulation isn't perfect, admits MIT PhD student Abe Davis in the first video, but more information will boost the movement accuracy of the IDV-produced models. With sufficient precision, it could be used to evaluate the structural integrity of buildings. But it also has uses in movie special effects, tweaking real-world objects that a computer-generated character interacts with on screen.