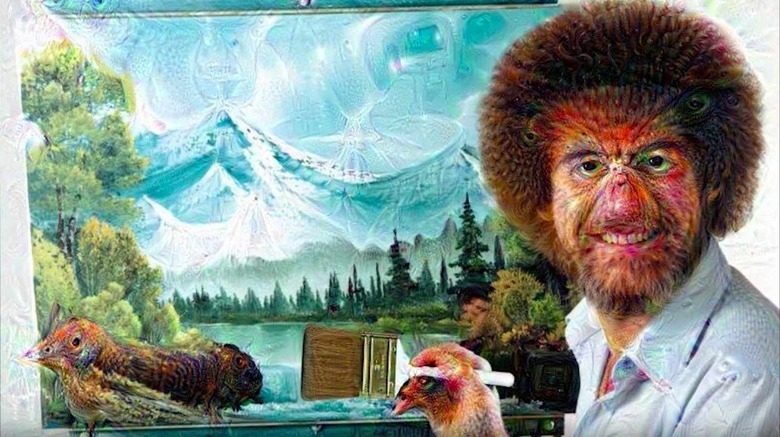

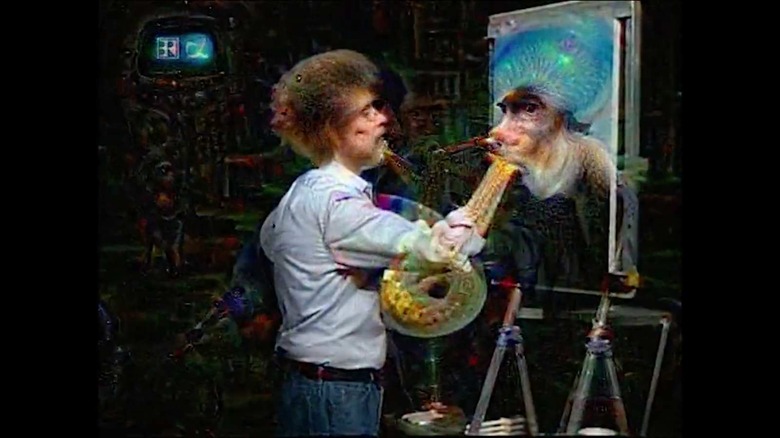

This is what AI sees and hears when it watches 'The Joy of Painting'

It's like someone spiked your computer's drink with "ASCIID"

Computers don't dream of electric sheep, they imagine the dulcet tones of legendary public access painter, Bob Ross. Bay Area artist and engineer Alexander Reben has produced an incredible feat of machine learning in honor of the late Ross, creating a mashup video that applies Deep Dream-like algorithms to both the video and audio tracks. The result is an utterly surreal experience that will leave you pinching yourself.

Deeply Artificial Trees from artBoffin on Vimeo.

"A lot of my artwork is about the connection between technology and humanity, whether it be things that we're symbiotic with or things that are coming in the future," Reben told me during a recent interview. "I try to eek a bit more understanding from what technology is actually doing." For his latest work, dubbed "Deeply Artificial Trees", Reben sought to represent "what it would be like for an AI to watch Bob Ross on LSD."

To do so, he spent a month feeding a season's worth of audio into the WaveNet machine learning algorithm to teach the system how Ross spoke. Wavenet was originally developed to improve the quality and accuracy of sounds generated for text-to-speech systems by directly modelling the original waveform using each sample point (up to 16,000 every second for 16KHz audio), rather than rely on less effective concatenative or parametric methods.

Essentially, it's designed to take in audio, make a model around what it's heard, and then produce new audio based on its model, Reben explained. That is, the system didn't study the painter's grammar or vernacular idiosyncrasies but rather his pacing, tone and inflection. The result is eerily similar to how Ross talks when he focuses on the painting and speaks in hushed tones. The system even spontaneously generated various breath and sigh noises based on what it had learned.

Reben has been refining this technique for a while. His previous efforts with Wavenet first trained the neural network to mimic the styles of various celebrities based off of each person's voice. Their words are jumbled and indecipherable but the cadence and inflections are spot on. You can hear that it's President Obama, Ellen DeGeneres or Stephen Colbert speaking, even if you can't understand what they're saying. He also trained the system to generate its best guess as to what an average English-language speaker would sound like based on the input from a 100-person sample.

For the video component, Reben leveraged a pair of machine learning algorithms, Google Deep Dream models on TensorFlow and a VGG model on Keras. Both of these operate on the already familiar Deep Dream system wherein the computer is "taught" to recognize what it's looking at after being fed series of training images that have been pre-categorized. The larger the training set, the more accurate the resulting neural network will be. But, unlike systems such as Microsoft's Captionbot, which only report on at what they're seeing, Deep Dream will overlay the image of what it thinks it sees (be it a dog or an eyeball) on top of the original image — hence the LSD trip-like effects. The result is an intensely disconcerting experience that, honestly, is not far from what you'd experience on an actual acid trip.

Interestingly, both components of this short movie — the audio and video — were produced independently. The audio portion required a full season's worth of speech in order to generate its output. The video portion, conversely, only needed a handful of episodes to stitch together. "Really, it's what a computer perceives Bob Ross to sound like along with a computer 'hallucinating' what it sees in each frame of the video," Reben explained.