UC Berkeley researchers teach computers to be curious

It's an AI that understands exploration.

When you played through Super Mario Bros. or Doom for the very first time, chances are you didn't try to speedrun the entire game but instead started exploring, despite not really knowing what to expect around the next corner. It's that same sense of curiosity, the desire to screw around in a digital landscape just to see what happens, that a team of researchers at UC Berkeley has built into its computer algorithm. And the work could drastically advance the field of artificial intelligence.

Google DeepMind's AlphaGo AI, the one that recently beat the world's top Go players again and again, uses a technique called Monte Carlo tree search to decide its next move. Each "branch", or decision, in that tree has a weighted value that's determined from previous experiences and the relative rewards associated with them. Learning those values from rewards is known as "reinforcement learning" and is basically the same way you train a dog: rewarding effective behavior and discouraging the ineffective.

This obviously works well for dogs (all of whom are good), but it does present a significant shortcoming when training neural networks: the AI will only pursue high-reward actions no matter what, even to the detriment of its overall efficiency. It will run into the same wall forever rather than take a moment and think to jump over it.

The UC Berkeley team's AI, however, has been imbued with the ability to make decisions and take action even when there isn't an immediate payoff. Technically, the researchers define curiosity as "the error in an agent's ability to predict the consequence of its own actions in a visual feature space learned by a self-supervised inverse dynamics model."
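
To make that definition a little more concrete, here is a minimal Python sketch of curiosity as prediction error. It is not the researchers' code. It assumes the agent has already predicted a feature vector for the next frame and then observed the real one; the function name `curiosity_reward`, the `scale` parameter and the toy vectors are all illustrative assumptions, and the learned feature encoder from the paper is not modeled at all.

```python
# Hypothetical sketch: curiosity as the error between what the agent
# predicted it would see and what it actually saw. Feature vectors are
# plain lists of floats; in the paper they come from a learned encoder,
# which is not modeled here.

def curiosity_reward(predicted_features, actual_features, scale=0.5):
    """Return scale * squared distance between predicted and actual features."""
    if len(predicted_features) != len(actual_features):
        raise ValueError("feature vectors must have the same length")
    squared_error = sum(
        (p - a) ** 2 for p, a in zip(predicted_features, actual_features)
    )
    return scale * squared_error


# A transition the agent predicted perfectly earns no curiosity reward...
familiar = curiosity_reward([1.0, 0.0, 2.0], [1.0, 0.0, 2.0])
# ...while a surprising one earns a larger reward.
surprising = curiosity_reward([1.0, 0.0, 2.0], [0.0, 1.0, 0.0])
print(familiar, surprising)
```

The point of the toy example is the ordering: the worse the agent's prediction, the bigger the reward, which nudges it toward parts of the game it doesn't yet understand.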

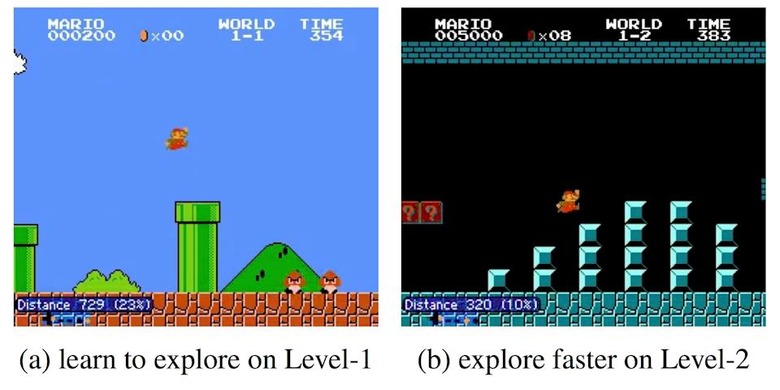

To train the AI, the researchers taught it to play Super Mario Bros. and VizDoom. As the accompanying video shows, rather than blindly repeating the same high-value action over and over again, the system plays more like a person would, with the same basic understanding that there's more to the game than the wall immediately in front of it.

"In many real-world scenarios, rewards extrinsic to the agent are extremely sparse, or absent altogether," the study's authors wrote. "In such cases, curiosity can serve as an intrinsic reward signal to enable the agent to explore its environment and learn skills that might be useful later in its life."

The implications of this are immense. We've already got Google training neural networks to design and generate baby neural nets, researchers at Brigham Young University teaching them to cooperate, and now this advancement enabling AI to teach itself. The pace at which artificial intelligence is getting smarter and more human-like is accelerating. Best of all, it shows no signs of slowing down.