Scientists made an AI that can read minds

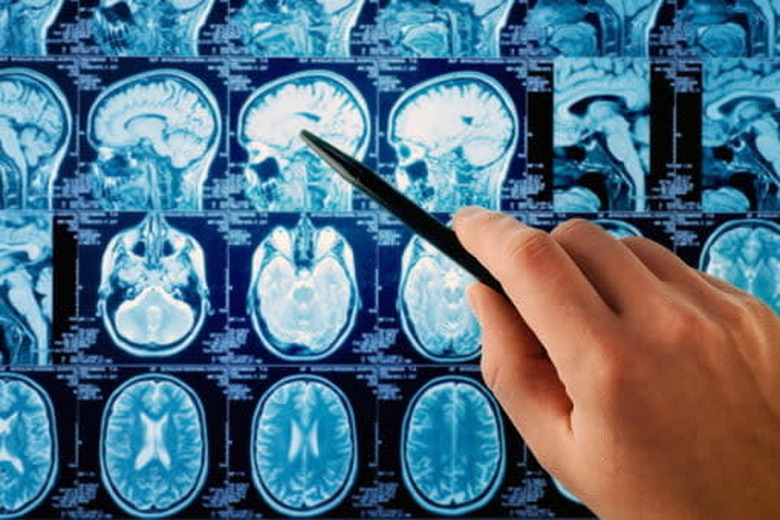

This new deep learning algorithm can analyze brain scans to predict thoughts.

Whether it's using AI to help organize a Lego collection or relying on an algorithm to protect our cities, deep learning neural networks seemingly become more impressive and complex each day. Now, however, some scientists are pushing the capabilities of these algorithms to a whole new level – they're trying to use them to read minds.

By reverse-engineering signals sent by the brain, researchers at Carnegie Mellon University have been working on an AI that can read complex thoughts simply by looking at brain scans. The CMU scientists feed data collected from a functional magnetic resonance imaging (fMRI) machine into their machine learning algorithms, which then locate the building blocks that the brain uses to create complex thoughts.

Impressively, the team demonstrated where and how the brain was activated while processing 240 complex events, covering everything from individuals to places and even various physical actions or aspects of social interaction. By understanding these activation patterns, the algorithm can use brain scans to predict what is being thought about at the time, connecting these thoughts into a coherent sentence.

After being fed the brain scans for 239 of these complex sentences, the algorithm managed to predict the remaining sentence with an astounding 87 percent accuracy. It could also do the reverse, taking a sentence and outputting a prediction of how that thought would be mapped inside a human brain.

The astonishing research shows just how far deep learning has come. If you weren't worried about the rise of super-powered machines before, now that they can read minds, it's probably time to start preparing for the inevitable robot apocalypse.