The outcome of this virtual riot depends on your emotions

Control your fear, control the story.

Consider what your first reaction would be if, during a protest turned violent, you came face-to-face with a riot cop barking at you from behind his clear shield. Not the words that come out of your mouth nor the moves you could make to escape. Before any of that, your emotions would already be fired up and broadcasting to the policeman what you might do next. In RIOT 2, an interactive film by Karen Palmer, controlling these emotions is the key to your escape.

Inspired by protests from Baton Rouge and Ferguson to Turkey and Venezuela, RIOT sets a goal that is essentially to keep calm, in an experience designed to make you fearful.

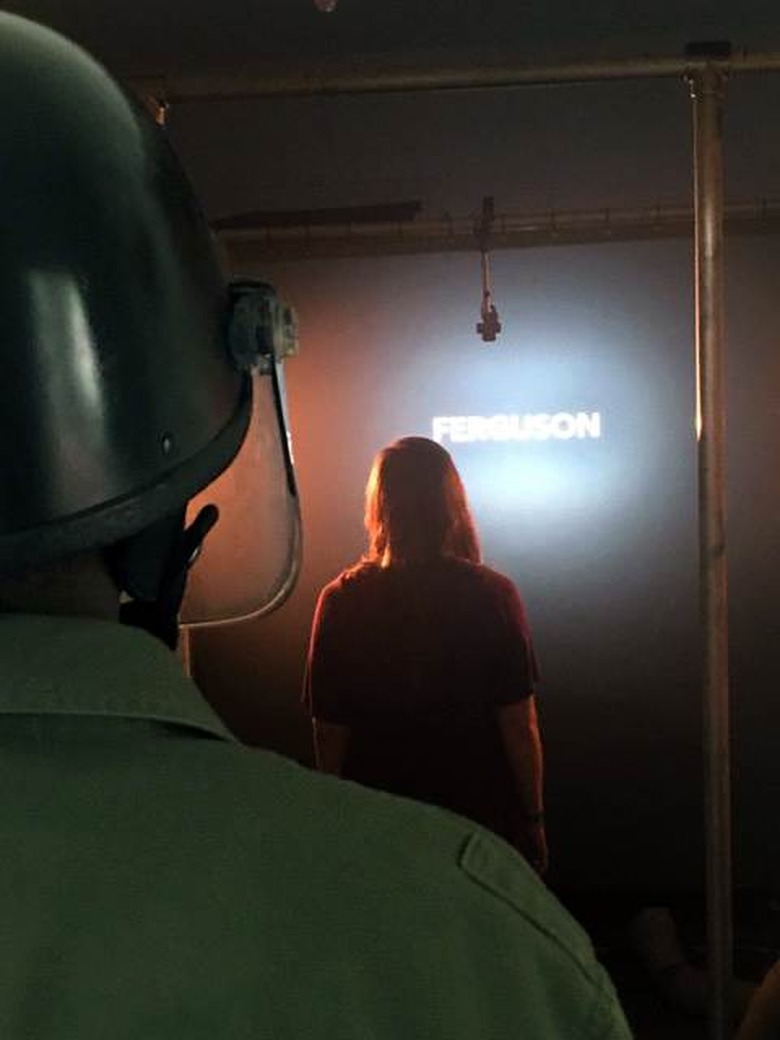

At a recent demo at the Future of Storytelling (FoST) Festival in New York, RIOT was installed in a stand-alone house where a man in a gas mask, helmet and olive-green outfit (actually Palmer's fiancé, Gary Franklin) directed participants into position in front of a screen. A wrecked trash can, traffic cone and black-and-yellow safety tape littered the dark area — set-design elements added by co-producers from the British National Theatre's Immersive Storytelling Studio. By the time participants watched real-life intro footage of a protest in Washington, DC, and absorbed the sirens wailing and jarring jungle-influenced soundtrack, their adrenaline was rising.

What follows is an encounter with the riot police and then, if players keep their cool, a cinematic urban chase around London's South Bank, interspersed with more narrative forks in the road. These are like quick-time events in video games, except that instead of participants hitting a button, a camera trained on their faces reads their emotions.

Developed by collaborator Hongying Meng at Brunel University London, the software detects calm, fear and anger according to factors like eye width, frowns and mouth shape. The challenge is to stay calm at critical moments or the experience ends. It's emotional conditioning through gamification.
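For readers curious how an emotion-gated fork might work in principle, here is a minimal sketch. It is not the Brunel software, whose internals the demo did not reveal. It assumes a hypothetical classifier that has already labeled each camera frame as calm, fear or anger (the three states named above), and it uses an invented rule: the story continues only if calm is the most common label in the decision window. The function names and the "continue"/"end" outcomes are illustrative assumptions.

```python
from collections import Counter
from typing import Iterable

# The three states the article names; everything else here is hypothetical.
EMOTIONS = ("calm", "fear", "anger")


def dominant_emotion(frame_labels: Iterable[str]) -> str:
    """Return the most frequent emotion label in a decision window."""
    labels = list(frame_labels)
    if not labels:
        raise ValueError("decision window is empty")
    unknown = set(labels) - set(EMOTIONS)
    if unknown:
        raise ValueError(f"unknown emotion labels: {sorted(unknown)}")
    return Counter(labels).most_common(1)[0][0]


def resolve_fork(frame_labels: Iterable[str]) -> str:
    """Assumed branching rule: the story goes on only if the viewer reads as calm."""
    return "continue" if dominant_emotion(frame_labels) == "calm" else "end"
```

Under these assumptions, a window of mostly calm frames keeps the film running, while a window dominated by fear or anger ends it. A real system would presumably work with confidence scores and smoothing rather than a simple vote.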

Software that recognizes emotions — part of the field of affective computing — has made strides in recent years. Fueled by machine learning, companies like Affectiva are able to distinguish genuine from forced expressions and pick up micro-gestures that the human making them isn't even aware of. While the opportunities for advertisers and political campaigners to understand a target audience are myriad, standout artistic experiences using these capabilities have been scarcer.

Yet the ongoing melding of games and film into interactive narratives raises the question of how we should control these new experiences naturally. Motion sensors, natural language processing, haptics and perhaps even mind control have all been used. Emotions are another, less explored interface.

"Ultimately we are the original joystick. Our bodies are the way we already interact and process the world," said Charles Melcher, director of FoST. "Conversation, facial expression, intonation of our voice, physical gesture — all of those are the natural language of human interaction. Technology is finally discovering what we are as a species and enabling it in the purest way."

RIOT is part of a wave of narrative experiences that use technology to activate specific emotions. At FoST, there were projects meant to make you feel intimacy or even, purportedly, fall in love. If storytelling is about eliciting human emotions, Melcher said, his goal is to find examples of technology augmenting that process to create "sensual media."

"It's a kind of storytelling that reminds us of the sensory joy of being alive," he said. "And I think that's a direct response to a lot of technology which has for many years been doing the opposite, disconnecting us from ourselves."

In RIOT, the primary emotion Palmer plays with is fear. "In my opinion, fear is the most powerful emotion," Palmer, originally from London, said. "If you're calm, your narrative will play out because I believe that if you're fearful or angry often your narrative in life doesn't get to reach a conclusion."

It's a recurrent theme in her work — how to face fear head on, and move through it — that comes in part from practicing freerunning for the past 13 years with international group Parkour Generations. An earlier project, Syncself, was a parkour simulator using an EEG headset to measure participants' focus. If their brainwaves didn't show concentration, they'd fail to make their leaps.

In the same way Palmer has managed her relationship with fear through parkour, she wants RIOT to help people understand their emotions. "I would like them to get an insight or confirmation into who they are," she said. Though RIOT is filmed from a first-person point of view, it's less about connecting with another character than about learning how you react to pressure. You have to confront not only how you feel but also, crucially, how your emotions are projected to others. Research suggests that much of communication is nonverbal, and feeling calm won't help you if the cop thinks you're angry and brings out his nightstick. RIOT is about understanding your subconscious, involuntary emotional expression and learning how to regulate it.

Palmer likens RIOT to a "gym of the mind" where you're "programming yourself," and it's easy to see its pragmatic uses in conflict training or any other preparation for a fight-or-flight scenario. It's a contrast to much storytelling, where your emotional openness to wherever the experience leads you — say, to be frightened in It or warmed in The Big Sick — enhances the enjoyment. In RIOT, you have to steel yourself. Like a traditional movie director, Palmer is still trying to manipulate your emotions. But you have to push back or you don't get to see the ending.