'Reverse Prisma' AI turns Monet paintings into photos

It can also change horses into zebras or winter into summer.

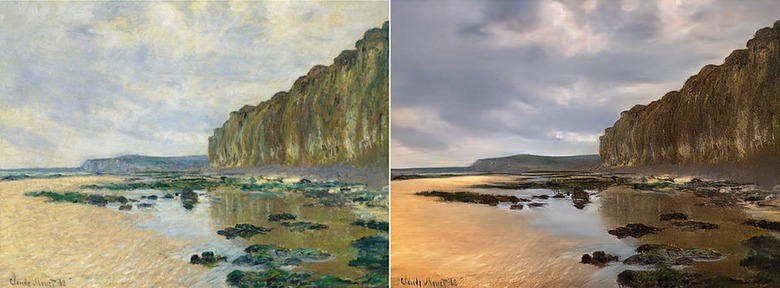

Impressionist art is more about feelings than realism, but have you ever wondered what Monet actually saw when he created pieces like Low Tide at Varengeville (above)? Thanks to researchers from UC Berkeley, you don't need to go to Normandy and wait for the perfect light. Using "image style transfer," they converted his impressionist paintings into a more realistic photo style, the exact opposite of what apps like Prisma do. The team also used the same AI to transform a drab landscape photo into a pastel-inflected painting that Monet himself might have made.

Style transfer has suddenly become a hot topic: Adobe recently showed off an experimental app that lets you apply the style of one photo ('90s stoner landscapes) to another (your crappy smartphone photo).

UC Berkeley researchers have taken that idea in another direction. You can take, for instance, a regular photo and transform it into a Monet, Van Gogh, Cézanne or Ukiyo-e painting. The team was also able to use the technique to change winter Yosemite photos into summer ones, apples into (really weird) oranges and even horses into zebras. The technique also allowed them to pull off photo tricks like creating a shallow depth of field behind flowers and other objects.

The most interesting aspect of the research is that the team used what's called "unpaired data." In other words, they don't have a photo taken at the scene at the exact moment Monet painted it. As the researchers put it, "Instead, we have knowledge of the set of Monet paintings and of the set of landscape photographs. We can reason about the stylistic differences between those two sets, and thereby imagine what a scene might look like if we were to translate it from one set into another."

That's easier said than done, though. First, they needed to describe the relationships between similar styles in a way that a machine can understand. Then they trained so-called "generative adversarial networks" on a large number of photos (from Flickr and other sources) and refined them by having both people and machines check the quality of the results.
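To make the "adversarial" part concrete, here is a minimal Python sketch of a least-squares adversarial objective. It assumes a discriminator that outputs one realism score per image (1 for "real," 0 for "generated"); the scores are plain floats here, and the function names are hypothetical rather than taken from the team's code. The discriminator is penalized for misjudging real and generated images, while the generator is penalized whenever its output fails to fool the discriminator.

```python
# Sketch of least-squares adversarial losses (an assumption for illustration;
# in a real system the scores would come from a neural-network discriminator).

def mean(values):
    return sum(values) / len(values)

def discriminator_loss(scores_real, scores_fake):
    """Push scores for real images toward 1 and for generated images toward 0."""
    real_term = mean([(s - 1.0) ** 2 for s in scores_real])
    fake_term = mean([s ** 2 for s in scores_fake])
    return 0.5 * (real_term + fake_term)

def generator_loss(scores_fake):
    """Penalize the generator unless the discriminator scores its fakes near 1."""
    return mean([(s - 1.0) ** 2 for s in scores_fake])

# A discriminator that separates real from fake perfectly...
print(discriminator_loss([1.0, 1.0], [0.0, 0.0]))  # 0.0
# ...leaves the generator with a high loss, so training pushes it to improve.
print(generator_loss([0.0, 0.0]))  # 1.0
```

The two losses pull in opposite directions, which is what makes the setup "adversarial": each network improves by exploiting the other's mistakes.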

Ideally, the system would be "cycle consistent." Just as you hope to get the original sentence back when you translate English to French and back again, you want roughly the same painting when you translate a Monet to a photo and back again. In many cases the team succeeded in that regard, apart from some loss of resolution (above).
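The "translate and back again" idea maps onto a cycle-consistency loss: translate an image into the other domain and back, then measure how far the result drifted from the original. The minimal Python sketch below assumes an L1 (mean absolute) distance and uses toy lists of pixel values; `G`, `F_exact` and `F_lossy` are hypothetical stand-ins for trained networks, not the researchers' code.

```python
# Sketch of a cycle-consistency loss on toy "images" (lists of pixel values).
# G and the two F variants are hypothetical stand-ins for trained networks.

def G(pixels):
    """Hypothetical photo -> painting translator: a simple tone shift."""
    return [0.8 * p + 0.1 for p in pixels]

def F_exact(pixels):
    """Hypothetical painting -> photo translator that exactly undoes G."""
    return [(p - 0.1) / 0.8 for p in pixels]

def F_lossy(pixels):
    """Same as F_exact, but snaps values to steps of 0.25 (lost detail)."""
    return [round((p - 0.1) / 0.8 * 4) / 4 for p in pixels]

def l1(a, b):
    """Mean absolute difference between two equally sized images."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

def cycle_consistency_loss(photos, paintings, to_painting, to_photo):
    forward = l1(photos, to_photo(to_painting(photos)))         # photo -> painting -> photo
    backward = l1(paintings, to_painting(to_photo(paintings)))  # painting -> photo -> painting
    return forward + backward

photo = [0.0, 0.3, 0.6, 1.0]
painting = [0.2, 0.4, 0.7, 0.9]
print(cycle_consistency_loss(photo, painting, G, F_exact))  # ~0: round trips match
print(cycle_consistency_loss(photo, painting, G, F_lossy))  # > 0: detail was lost
```

In a full system this term would be added to the adversarial losses and minimized during training, so the networks learn translations that can be undone; the lossy variant mimics the loss of resolution described above.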

All is not perfect, of course. Since the algorithms have to deal with many different styles of both paintings and photos, they often fail completely to transfer one to another. As with other systems, one of the main issues is geometric transformation: changing an apple into an orange is one thing, but attempting to transform a cat into a dog instead produces a very disturbing cat.

The team adds that its methods still aren't as good as those trained on paired data, i.e., photos that exactly match paintings. Nevertheless, left to its own devices, the AI is surprisingly good at transferring one image style to another, so you'll no doubt see the results of their work in your Instagram feed soon. If you want to try it yourself and are comfortable with Linux, you can grab the code here.