DeepMind researchers create AI with an ‘imagination’

It can plan future actions and even decide how it wants to imagine.

Being able to reason through potential future events is something humans are pretty good at doing, but that kind of ability is a real challenge when it comes to training AI. Taking those reasoning skills and using them to create a plan is even more difficult, but the Google DeepMind team has begun to tackle this problem. In a recent blog post, researchers describe new approaches they've developed for introducing "imagination-based planning" to AI.

Other programs have incorporated planning abilities, but only within limited environments. AlphaGo, for example, can do this well, as the researchers note in the blog post. However, they add that "environments like Go are 'perfect' - they have clearly defined rules which allow outcomes to be predicted very accurately in almost every circumstance." Facebook also created a bot that could reason through dialogue before engaging in conversation, but again, that was in a fairly restricted environment. "But the real world is complex, rules are not so clearly defined and unpredictable problems often arise. Even for the most intelligent agents, imagining in these complex environments is a long and costly process," said the blog post.

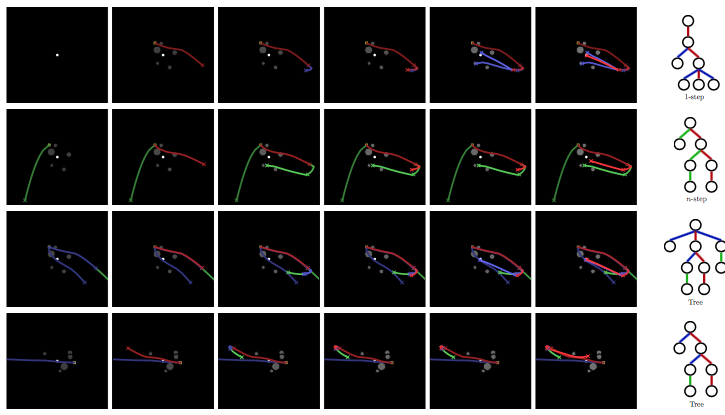

DeepMind researchers created what they're calling "imagination-augmented agents," or I2As, which use a neural network trained to extract any information from the environment that could be useful in making decisions later on. These agents can create, evaluate and follow through on plans. To construct and evaluate future plans, the I2As "imagine" actions and outcomes in sequence before deciding which plan to execute. They can also choose how they want to imagine: they can try out different possible actions separately, or chain actions together in a sequence. A third option allows the I2As to create an "imagination tree," in which the agent can continue imagining from any imaginary situation created since the last action it took. Because an imagined action can be proposed from any of those previously imagined states, the imagined paths branch out into a tree.
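To make the three imagination strategies concrete, here is a minimal Python sketch. It is not DeepMind's implementation: the number-line world, the `toy_model` function, the reward values and the "expand the most promising node" rule are all assumptions made up for illustration. A real I2A learns its environment model and its imagination strategy with neural networks.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Sequence, Tuple

GOAL = 5            # hypothetical goal position on a number line
ACTIONS = (-1, 1)   # hypothetical actions: step left or step right

# A model maps (state, action) to a predicted (next_state, reward).
Model = Callable[[int, int], Tuple[int, float]]


def toy_model(state: int, action: int) -> Tuple[int, float]:
    """Stand-in for a learned environment model (an assumption, not I2A's)."""
    next_state = state + action
    reward = 1.0 if next_state == GOAL else -0.1
    return next_state, reward


def imagine_one_step(model: Model, state: int,
                     actions: Sequence[int]) -> Dict[int, Tuple[int, float]]:
    """Strategy 1: try each possible action separately from the real state."""
    return {a: model(state, a) for a in actions}


def imagine_chain(model: Model, state: int,
                  plan: Sequence[int]) -> Tuple[int, float]:
    """Strategy 2: chain actions in sequence, feeding each imagined state forward."""
    total = 0.0
    for action in plan:
        state, reward = model(state, action)
        total += reward
    return state, total


@dataclass
class Node:
    state: int
    total_reward: float
    plan: Tuple[int, ...]


def imagine_tree(model: Model, root: int, actions: Sequence[int],
                 budget: int) -> Node:
    """Strategy 3: keep imagining from any previously imagined state.

    Which state to continue from is chosen here by a simple heuristic
    (highest imagined reward so far); this choice is an assumption.
    """
    frontier = [Node(root, 0.0, ())]
    imagined = []
    for _ in range(budget):
        if not frontier:
            break
        node = max(frontier, key=lambda n: n.total_reward)
        frontier.remove(node)
        for action in actions:
            next_state, reward = model(node.state, action)
            child = Node(next_state, node.total_reward + reward,
                         node.plan + (action,))
            frontier.append(child)
            imagined.append(child)
    # Return the best imagined plan found within the budget.
    return max(imagined, key=lambda n: n.total_reward)


best = imagine_tree(toy_model, root=3, actions=ACTIONS, budget=3)
print(best.plan)
```

Starting from position 3 with a budget of three expansions, the tree search in this toy world settles on stepping right twice. The point of the sketch is only the difference in shape: one-step imagination fans out from the real state, chaining follows a single path, and the tree can branch from anything imagined so far.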

The researchers tested the I2As on the puzzle game Sokoban and a spaceship navigation game, both of which require planning and reasoning. You can watch the agent playing Sokoban in the video below. For both tasks, the I2As performed better than agents without future reasoning abilities, learned with less experience and were able to handle imperfect environments.

DeepMind AI has been taught how to navigate a parkour course and recall past knowledge, and researchers have used it to explore how AI agents might cooperate or conflict with each other. When it comes to planning ability and future reasoning, there's still a lot of work to be done, but this first look is a promising step towards imaginative AI.