Let’s stop pretending Facebook cares

It’s time to flip the script.

The really great thing to come out of the Cambridge Analytica scandal is that Facebook will now start doing that thing we were previously assured at every turn they were doing all along. And all it took was everyone finding out about the harvesting and sale of everyone's data to right-wing zealots like Steve Bannon for political power. Not Facebook finding out, because they already knew. For years. In fact, Facebook knew it so well, the company legally threatened The Observer and the NYT to prevent their reporting on it, to keep everyone else from finding out.

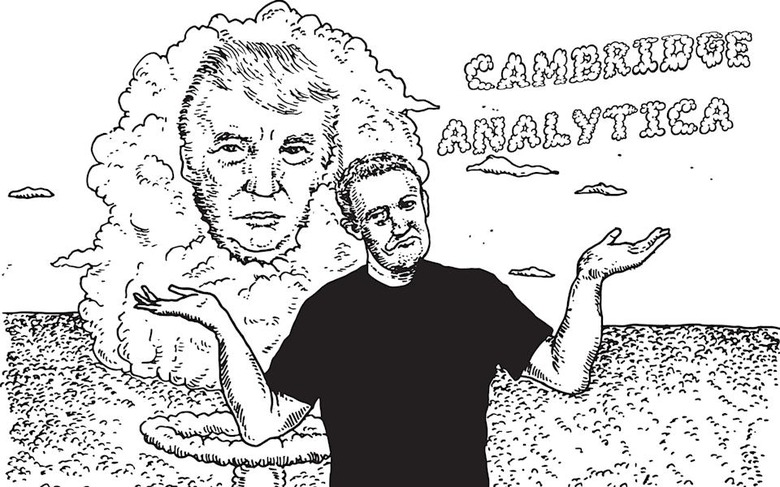

For the past seven days the internet has exploded in anger, betrayal, and disgust over the actions of Facebook and Cambridge Analytica, a data-dealing propaganda firm that wins elections for paying clients and helped put Donald Trump in the White House. Actions that, despite Facebook's pearl-clutching in the press, are starting to look more and more in concert.

To be clear, Facebook knew what Cambridge Analytica did, but only (partly) acted on it when the press made it public knowledge. It was more than two years ago — December 2015 — when The Guardian published its first report about Cambridge Analytica using Facebook data to target US voters. Facebook didn't suspend them until last Friday. Cambridge Analytica maintained its access for years, while one of its chief officers worked at Facebook — and while Facebook employees were embedded in the Trump campaign's digital media operation.

Keep in mind that Trump won by well-placed Electoral College votes: 77,744 to be precise. Before Cambridge Analytica came along, Trump had "no unifying data, digital and tech strategy," according to leaks made public today by The Guardian. With its Facebook data, the firm ran Facebook ads disguised as news stories, linked to fake news sites, and targeted Facebook users it deemed especially susceptible. Then Cambridge Analytica tracked those Facebook users across the internet and re-targeted them. Russia's trolls did the rest, providing all the confirmation and social proof needed.

So, long after Facebook knew that Cambridge Analytica had mined data from millions of unwitting users, a Facebook board member (Thiel) gave $1 million to a PAC that was paying the company that covertly harvested user data.

... from Facebook. https://t.co/osOJDj3Wx7

— Caroline O. (@RVAwonk) March 22, 2018

When The Guardian's 2015 article came out, Facebook pretended to care.

"And then," former Cambridge Analytica employee Christopher Wylie told The Observer, "all they did was write a letter.

"But literally all I had to do was tick a box and sign it and send it back, and that was it," says Wylie. "Facebook made zero effort to get the data back."

Over the weekend, press characterized Wylie as a whistleblower. Which I suppose is easy to do if we're going to forget where all of this data dealing and vote-influencing has led — the indisputable rise of hate groups in the US since the 2016 election, Facebook's churning cauldron of racist communities and deadly Nazi rallies, among others. Writer Joseph Guthrie tweeted, "If I ever meet Chris Wylie, there will be no pleasantries. This isn't something you can apologise for and we all just move on. The price paid is too great a cost to ignore and again: assuming he's a queer man like I am, his involvement in this thing is a catastrophic betrayal."

It wasn't until the NYT and The Observer prepared to publish their articles last Friday that Facebook decided to suspend Cambridge Analytica and Christopher Wylie from the platform — in a weak attempt to get ahead of the story. Even then, it was after Facebook made legal threats against both NYT and The Observer in an effort to silence both publications.

So it was well-known internally at Facebook years ago that Cambridge Analytica extracted a lot of seriously sensitive user data through Facebook APIs, then used it for precision ad targeting (which Facebook could've vetted). It speaks volumes about Facebook — and all its efforts to distract from this issue — that it allowed a huge amount of sensitive data to get into the hands of unauthorized third parties ... and took years to take any action other than pinky swears.

It almost goes without saying that this whole sickening affair is more proof we didn't need that Facebook only cares when it is forced to: When the company decides it has a reputation problem. Which is the only problem they actually care about fixing. Other than that, it's all about creating more data dealer WMDs, like Facebook's impending patent to determine social class, which we can all assume will be abused until press who can afford to stand up to Facebook write an article about it.

But when you're Facebook, victimhood is performative.

A Facebook spokesperson released a statement Tuesday saying "the entire company is outraged we were deceived." Obviously. They were so paralyzed by rage that they couldn't even bring themselves to do anything about it for over two years. During which time they rolled out several "upgrades" to their privacy systems and terms, and rolled out "enhanced" features to improve the experience. They were so mad they even hired the Global Science Research officer who sold users' data to Cambridge Analytica.

Bogglingly, the official Facebook response on Wednesday began with the sentence, "Protecting people's information is the most important thing we do at Facebook. What happened with Cambridge Analytica was a breach of Facebook's trust."

Wednesday was also the day of Mark Zuckerberg's carefully crafted mea culpa press tour. It was an afternoon masterclass on softball interviews. No questions about knowingly hiring Cambridge Analytica's Joseph Chancellor. No queries about threatening to sue The Observer to prevent the information from getting out. You know, the whole reason he's sitting there being interviewed. It was almost like everyone at CNN, WIRED, Re/Code and NYT wanted Zuckerberg to feel safe. Comfortable. Far away from accepting anything like liability. Giving him a voice with which to explain his feelings of betrayal and all about his determination to do better while effectively changing nothing.

When the only thing that actually changed is people found out about it.

I particularly loved WIRED's feel of a chat over coffee, asking Zuckerberg what "philosophical changes" have been going through his mind lately. He got to say how, gosh, he really wishes he didn't have to deal with making decisions about content that promotes opposition to gender and racial equality. Which he shamelessly believes is a diverse and underserved point of view. As if fairness means incorporating, if not humoring and tolerating, ruinous disinformation campaigns, the beliefs of white nationalists, neo-Nazis, and fascists working for the most disastrous president in US history.

"A lot of the most sensitive issues that we face today are conflicts between real values, right? Freedom of speech, and hate speech and offensive content. Where is the line?" he said to Re/Code. "What I would really like to do is find a way to get our policies set in a way that reflects the values of the community so I am not the one making those decisions."

Yep. While his company is the world leader in content censorship of art, human sexuality, and black activists, Mark Zuckerberg literally never imagined he'd someday be in the position to do the right thing about hate speech. And wishes he didn't have to.

That Facebook only pretends to care, in the ways least affecting its business model, isn't new. Nor is it new that the company is having yet another (temporary) "come to Jesus" moment about honesty when its hand is forced. Apology tours are its speciality.

Look, Zuckerberg did say sorry — that they "let the community down." It felt just as real as all of Facebook's regular apologies over the past ten years or so. I can't wait for the next one.

Zuckerberg also shored up his assurances to press this week by stating that Facebook would be beefing up its security team. He said, "We're going to have 20,000 people working on security and content review in this company by the end of this year."

Facebook can hire all the security it wants. It won't do anything to protect us from Facebook.