With Squadbox, friends moderate harassing messages in your email

MIT researchers developed it as a way to mitigate online harassment.

Researchers at MIT's Computer Science and Artificial Intelligence Laboratory have developed a tool aimed at fighting online harassment. Called Squadbox, it lets your friends or coworkers moderate your incoming messages for you. The research team interviewed a number of people who had experienced online harassment and then designed Squadbox's features based on those conversations.

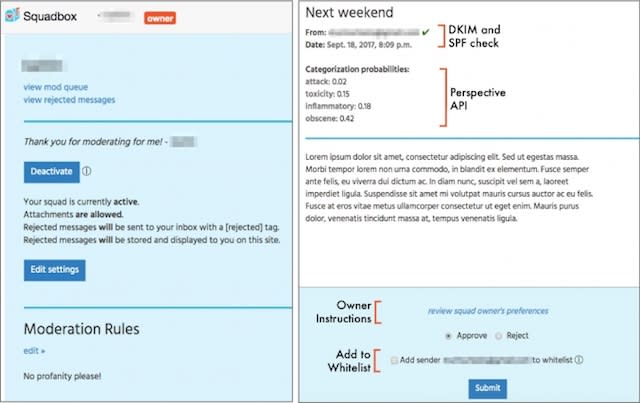

Currently, Squadbox only works with email, but the team says they're looking to expand it to other messaging and social media platforms. Squadbox owners can set up filters, and any email that matches one is routed to their moderators. Moderators can then decide whether to pass that email along to the owner or reject it, based on what the owner has declared they don't want to see. Email senders can also be white- and blacklisted so that their messages are automatically approved or rejected. Squads can also be deactivated when there's a lull in harassing messages and reactivated when moderation is needed again.
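To make that flow concrete, here is a rough Python sketch of how such routing could work. It is not Squadbox's actual code: the `Squad`, `Email` and `Route` names are invented for illustration, and the order of the checks (an inactive squad delivers everything, then sender lists, then filters) is an assumption rather than something the researchers have described.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, List, Set


class Route(Enum):
    DELIVER = "deliver"    # goes straight to the owner
    REJECT = "reject"      # dropped without moderator review
    MODERATE = "moderate"  # held for a moderator's decision


@dataclass
class Email:
    sender: str
    subject: str
    body: str


@dataclass
class Squad:
    # Hypothetical model: sender addresses are stored in lowercase.
    whitelist: Set[str] = field(default_factory=set)
    blacklist: Set[str] = field(default_factory=set)
    # Each filter is a predicate; a match sends the email to moderators.
    filters: List[Callable[[Email], bool]] = field(default_factory=list)
    active: bool = True

    def route(self, email: Email) -> Route:
        # Assumption: a deactivated squad lets all mail through.
        if not self.active:
            return Route.DELIVER
        sender = email.sender.lower()
        if sender in self.whitelist:
            return Route.DELIVER
        if sender in self.blacklist:
            return Route.REJECT
        if any(matches(email) for matches in self.filters):
            return Route.MODERATE
        return Route.DELIVER


squad = Squad(
    whitelist={"friend@example.com"},
    blacklist={"troll@example.com"},
    filters=[lambda e: "idiot" in e.body.lower()],
)
print(squad.route(Email("stranger@example.com", "hi", "You idiot")))
```

In this sketch the sender lists take priority over the filters, so a whitelisted friend is never held up in a moderator's queue, and flipping `active` to `False` covers the lull between bouts of harassment.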

The team demoed the system and tested it out with a handful of individuals. Those observing the demo expressed interest in Squadbox, with one calling it a "very strong pragmatic tool." However, some issues arose during the field test. Moderators became slower to respond to emails over time and started to feel less confident in their abilities. One moderator noted how much work it was. Owners also began to feel guilty about having their friends moderate their email. The researchers said that, ultimately, the system "presents challenges for relationship maintenance."

Squadbox is still in development, but friendsourced moderation, in which people you trust screen messages on your behalf, is an interesting strategy for combating harassing messages. The researchers are presenting a paper on their work at the Association for Computing Machinery's CHI Conference on Human Factors in Computing Systems this month.