Google's News app is a tool for gaining perspective, not an arbiter of facts

AI, not human curation, may help us better understand the world.

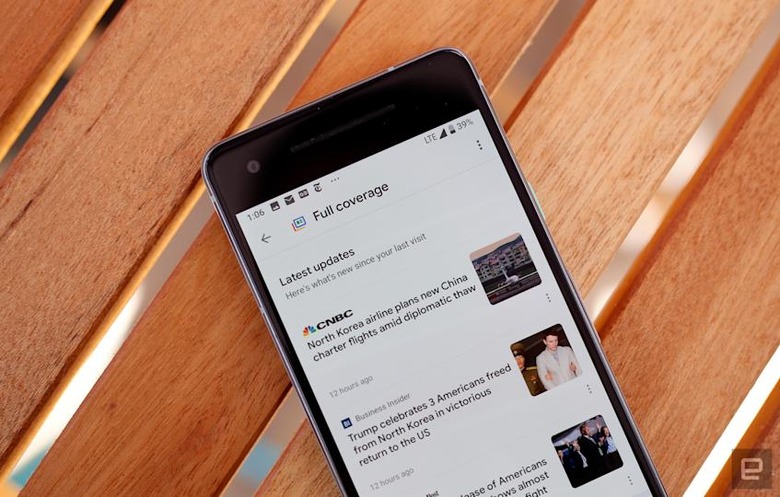

It may have been overshadowed by Android P or a slightly terrifying AI phone call, but Google's new News app was one of the most important things the search giant announced at I/O this year. It relies completely on artificial intelligence to bring you breaking news, but the most fascinating — and potentially most valuable — addition is what Google calls Full Coverage. Fire up the News app on your Android phone and you'll see a tiny icon in the corner of certain stories — tap that and you're taken to a dedicated page that surfaces related stories Google's AI deems trustworthy.

What stands out about Full Coverage is its consistency. Every person who taps into a Full Coverage package gets the exact same blend of stories, every time. These packages are built around newsworthy events and include (among other things) clearly marked opinion, analysis and fact-checked articles. By building broad, informative recaps around events in the news, Google is conceding that every newsworthy event carries a range of viewpoints. Since the company refuses to consider itself an arbiter of facts, though, its play with Full Coverage is to give people what they need to make up their own minds.

"We don't feel like we're in the business of establishing what the fact is and is not," Google News product lead Trystan Upstill told Engadget. "One of the key challenges we see is that people look at a single document that has been shared with them or is framed by their friends on some platform, and it's impossible to understand what the full context of that story is. We wanted to build something that takes you out of your local echo chamber and kind of lets you blow the doors off."

Upstill wouldn't name the platforms where this sort of virulent insular thinking is common; he noted that he "wouldn't lay the blame at the feet of any provider because it's complicated." Even so, it's not hard to figure out where the problem gets especially troubling. By dint of its mission to deliver content that's more personally relatable, Facebook's algorithmic ranking tends to reinforce existing viewpoints and biases in a way that allows echo chambers to flourish. Once you're in one of those bubbles, it takes time to convince Facebook's underlying algorithms that you're open to new perspectives.

That's where Full Coverage shines: You might not like or agree with every article or video it surfaces, but they come from trusted news sources across the ideological spectrum, so that everyone has a common baseline of understanding to work from. Many of Google's products are valuable because they tailor their experiences to each user, but by insisting that everyone gets the same Full Coverage, the company hopes to make people's worlds just a little bigger.

Chris Velazco/Engadget

"When people are fundamentally stuck in these small echo chambers, it can be hard for them to ask questions," Upstill pointed out. Those people can ask questions at any time, but let's face it — we all know people who wouldn't go to the trouble of seeking out viewpoints different from their own, and Upstill believes that the "distance" between wanting to understand opposing mindsets and actually searching for them can be problematic. It's especially tricky on a smartphone, which Upstill referred to as "a great screen for one article." Google's News app, and Full Coverage more specifically, is meant to close that distance with just a few taps.

Google's embrace of artificial intelligence to help people make sense of the news also comes with a tacit admission that humans aren't ideal for this kind of work. As the time Facebook employed humans to flesh out its trending topics showed, subjective biases (or even the appearance of bias) can influence how users receive and perceive the news. Upstill says Google relies on a variety of signals to ensure that there's no ideological slant to the way it ranks news (though knowing that probably won't convince everyone).

Humans also can't handle this kind of news curation at scale. That stands in stark contrast to Apple's approach to its own news products: The Cupertino company employs an editorial staff to curate news and build packages around major stories. Google has shied away from building up a workforce dedicated to managing the flow of news for its users because no team of people could do that work as efficiently as AI can.

"I can't sit down and within 30 seconds figure out that the North Korean detainees have been suddenly released in the US and that Donald Trump is tweeting about it and that there's a perspective coming in from the Washington Post," Upstill said. "I don't think we could hire enough people to do that just for the US, and this is a product we're launching in 127 countries."

It might seem odd that the future of news could be shaped by precisely zero humans, but reducing the need for human work is one of the big themes here at Google I/O 2018. People can do most things just fine, but machines can do some of those things more efficiently and with surprising levels of thoughtfulness. The human side of news (the journalism, the reactions, the sharing) will endure. Still, if we're going to let machines drive us around and make phone calls for us, why not let them try to make us more intellectually well-rounded, too?
