Unpaid and abused: Moderators speak out against Reddit

Keeping Reddit free of racism, sexism and spam comes with a mental health risk.

Somewhere out there, a man wants to rape Emily. She knows this because he was painfully clear in typing out his threat. In fact, he's just one of a group of people who wish her harm.

For the past four years, Emily has volunteered to moderate the content on several sizable subreddits — large online discussion forums — including r/news, with 16.3 million subscribers, and r/london, with 114,000 subscribers. But Reddit users don't like to be moderated.

In a joint investigation, Engadget and Point spoke to 10 Reddit moderators, and all of them complained that Reddit is systematically failing to tackle the abuse they suffer. Keeping the front page of the internet clean has become a thankless and abusive task, and yet Reddit's administration has repeatedly neglected to respond to moderators who report offenses.

"I've had a few death threats," said Emily, who asked to be referred to by her first name and her Reddit username, lolihull, to prevent the online harassment from spilling over into her real life. "And when people find out you're a woman, you get rape threats." That's why Emily tries not to mention she's a woman on Reddit: The torrent of abuse is far less likely to turn sexual.

"People are willing to dedicate their time to make you feel threatened for the hell of it," she said.

Some messages are direct and succinct. One sent to Emily reads, "I hope you get shot in the face with a bullet."

But others are profoundly disturbing and more creative. In one rambling attack, a Redditor told Emily, "I want you to get cancer because I wanna see your own body killing you. I want you to get cancer, your mother, sister, brother, father, grandparents I want them all to get cancer and when they open their mouths I wanna piss in them. I fucking hate you. You nasty disgusting slut fuckmeat."

Further down in the diatribe he adds, "I want you to come across a mean STD nigger and he tears your shit hole up so bad you'll never ever have to push your shit out."

Emily's experience is far from unique.

"I had three death threats this past month," said abrownn, who moderates r/Futurology, with more than 13 million subscribers, and r/technology, with more than 6 million subscribers. abrownn asked only to be known by their username.

All the moderators interviewed confirmed they had received death threats, which they said can take a toll.

"Even if you're having the best day, reading a message like 'I'm going to kill you' or 'walk in front of a bus,' is shitty," said Robert Allam, Chief Marketing Officer at Supload, an online sharing platform. Known by his username, GallowBoob, Allam moderates r/tifu ("today I fucked up"), where more than 13 million subscribers share their schadenfreude anecdotes, and r/oddlysatisfying, where 1.8 million subscribers post content that is inexplicably satisfying to watch.

Reddit overtook Facebook this year to become the third most popular website in the US, behind Google and YouTube. At its best, Reddit is a place where people discuss topics with other like-minded individuals; there are subreddits devoted to just about any topic imaginable — there's even one for people who like to Photoshop human arms onto pictures of birds.

At its worst, Reddit is awash with sexist threads, spam and threats. It's down to an army of dedicated volunteer moderators to keep Reddit clean — and they too often get caught in the crossfire.

It's hard to pin down how many moderators there are: Even the moderators themselves don't know, but most estimate their numbers are into the tens of thousands. Some spend hours each day working for free on the site. Whatever the actual figure, they far outnumber the higher-ranking and paid administrators, whose job it is to respond to the evidence that the moderators collect.

Most moderators guess there are roughly 50 paid administrators for Reddit, the sixth-most-popular website in the world. While moderators can remove offensive posts and prevent users from posting on their subreddit if they continue to break the rules, only administrators can ban users from the site entirely.

Subreddits can have their own bylaws, but racism, sexism and hate speech are targeted by moderators on pretty much any thread. The clear majority of abuse is in response to moderators calling users out when they break the rules.

In trying to keep the conversation clean, they can become targets.

"Let's say someone is calling someone the N-word and I ban him," said Allam. "They're going to fixate on you as a moderator."

One message Allam received even mentioned his employer: "I will fucking murder you in your London flat when you walk back from your work at Supload, you might want to take this shit seriously for once."

These threats can be persistent. Moderators complained that banned abusers will sometimes spring up under a slight variation of their previous username and start their verbal abuse all over again.

"By doing your volunteer job as a mod you're putting yourself at risk, because, apparently, [Reddit's] tools are not enough to contain these psychos," said Allam. "Reddit just keeps allowing these fresh accounts to be made in batches. Most of them are suspended accounts that broke the rules, but they come back for more."

Other moderators also say it's far too easy for abusers to get back on the site. "You can enter in any fake email you want and then just not verify the account," said William, who moderates r/worldnews, with more than 19 million subscribers, and r/PartyParrot, where more than 100,000 subscribers share their love of the bird. "I think an email address verification should be 100 percent necessary, and it would help curb the problem."

Velo, who moderates r/nottheonion, with almost 14 million subscribers, and r/Games, with close to 1.3 million subscribers, under the username IAMAVelociraptorAMA, said the abuse is kicked up a notch when there are major news events. "With politics where people are constantly angry, you're talking daily abuse to the point you couldn't even filter through all of it.

"It's volunteer work, but you kind of feel obligated to do it, because if you don't then the other people you volunteer with have to take up your share," he said.

William agreed the abuse can cause anxiety. He also finds it distressing when Redditors publicly accuse him of being politically biased in his moderation.

In a feature known as mod mail, users can message all the moderators of a subreddit, which has become a natural forum for abuse. Two years ago, Reddit introduced a function where the moderators can mute an offensive Redditor for 72 hours. But that doesn't solve the problem.

"Sometimes you still get people who after the 72 hours just send you another message and you mute them again and then another message, and it goes on for ages. The longest one I've seen is about a year and a half now," said Emily.

Experts say this kind of regular online abuse is known to create mental health problems.

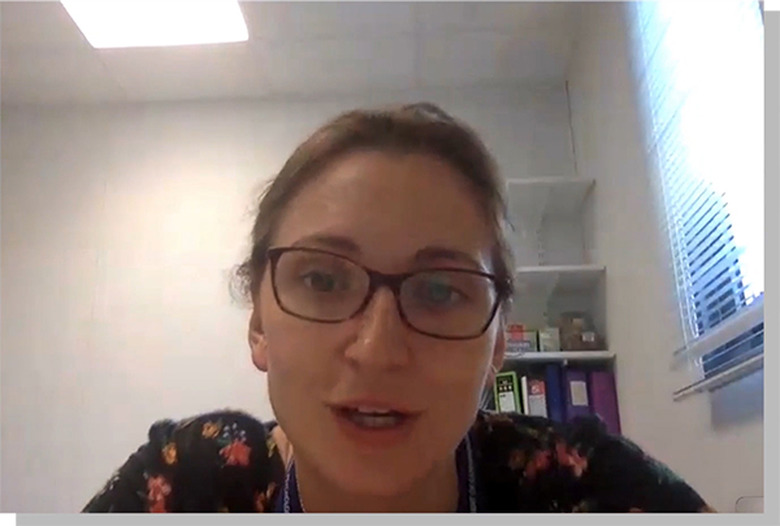

"It absolutely is a major risk factor for things like depression, post-traumatic stress disorder and anxiety," said Lucy Bowes, an associate professor of experimental psychology at the University of Oxford who specializes in cyberbullying.

"I suspect the people who are vulnerable drop out quite early because it hurts so much," said Bowes. "Clearly it's not OK for anyone, but I imagine it's quite self-selecting."

She warns that the longer a moderator works, the greater the risk of developing psychological problems. While many people will be resilient enough to cope with the abuse up to a point, others will crack at a certain threshold. Bowes said that moderators with pre-existing mental health issues would be especially at risk.

"This will build up and build up, and you'll start seeing things like missing work, difficulty sleeping, weight gain or weight loss. You'd start to see those precursors to poor mental health," she said.

Many of the moderators interviewed said they worried about their fellow moderators burning out under the pressure caused by their online harassers, but they all claimed not to be affected themselves.

Bowes said Reddit has an obligation to inform new moderators of the kind of abuse they can expect to receive before they begin moderating and to give advice on how to handle it. There also needs to be a clear explanation of how the company intends to handle and react to the abuse when moderators report it, said Bowes.

"Reddit has a responsibility in making sure that people working for them know what they need to do. So taking screenshots, for example, and recording so they have as much information as possible should it go to prosecution."

That's something that Emily also called for. "It would be good to have a country-specific resource, because the laws on cyberbullying in the UK are different to [the US], which would be different to other countries."

William agreed. "It's so informal. We could do with a more robust system."

Some moderators have attended Mod Roadshow events, where administrators travel across the US and UK to meet moderators. These events were supposed to find ways to make moderation easier, but the moderators were lukewarm in their response. Some said the events simply highlighted the gulf between the moderators and the administrators and that they felt patronized by the administrators, who explained the basics of moderation.

Moderators also complained of feeling ignored when they report abusive messages. They said they almost never hear back from administrators and aren't told whether the account was removed from the site or not.

"Reddit has gone far out of its way to be as little involved with moderation as possible," said Velo. "Most people don't even try to send [abusive] users to the admins anymore, because it seems like they never respond and if they do it's so vague and unsatisfactory."

Additionally, Bowes said Reddit should be checking in regularly to make sure the moderators are OK. It should also offer resilience training to help the moderators cope, she said.

"This is a large company. It should have the resources to be able to do this," said Bowes.

Some moderators would like to see Reddit act on Bowes' suggestions.

"I would absolutely welcome that," said Velo.

"If you're a moderator on Reddit for certain subreddits that are large enough, you should get moral support — actual psychological support to talk to a specialist after you get absolutely abused," said Allam.

However, other moderators expressed a degree of sympathy for Reddit: The site is enormous, and it would be expensive to offer counseling services to moderators, they admitted.

But Reddit's size may not be a defense for failing to tackle the issue of abuse in general. According to a research project at Stanford University, just one percent of Redditors are responsible for 74 percent of all conflicts on the site.

It's a relatively small but troublingly devoted community of users who type the endless violent death and rape threats.

When asked for comment, Reddit said it is "constantly reviewing and evolving" its policies, enforcement tools and support resources. It also noted that it has doubled its admin team in the past two years to ensure it has "the teams in place to make the improvements needed," and is building solutions to detect and address bad behavior to ensure "it doesn't become a burden on moderators."

Update 1:30PM ET:

Reddit has provided an expanded comment on this story. It also pointed to its r/ModSupport community, Mod Help Center and updated report flow for making Reddit aware of infractions such as harassment. Its full, updated statement follows:

"We look into all specific allegations that are reported to us. Harassment and persistent abuse toward moderators are not acceptable behaviors on the site. We are constantly reviewing and evolving our site-wide policies, enforcement tools, and community support resources. Reddit has also doubled in size in the past two years in order to ensure we have the teams in place to make the improvements needed. This includes expanding our Trust and Safety team and our Community team, as well as building engineering solutions for detecting and addressing bad behavior so it doesn't become a burden on moderators. We are also particularly focused on improving the tools available for moderators, and we are grateful for the feedback we have received from them both online and through our efforts to meet them in person. Since 2017, we've hosted 13 events around the United States and in London to give the moderators an opportunity to share their views with us in person, which we always appreciate. We know there is more to do, and we will continue to evolve the human and technological resources available to ensure that Reddit is a welcoming place."

Video by Point

Narrator: Jay McGregor

Producers: Jay McGregor, Aaron Souppouris

Editor: Anton Novoselov