Facebook has a three-part plan for making AI more 'inclusive'

It's teaching machines to work the same for everyone, regardless of skin tone.

Facebook kicked off the second day of F8 2019, its annual developers conference, with a keynote about the technologies it uses to combat abuse on its platform. As the company detailed last year, artificial intelligence is key to keeping its apps and services safe. Facebook says AI is now proactively taking down more than 99 percent of spam, fake accounts and terrorist propaganda, though it's still struggling with hate speech (51.6 percent) and harassment (14.9 percent). Another area where Facebook is looking to improve the technology is inclusivity. What that means, essentially, is that it wants to teach its machines to work the same for everyone, regardless of skin color or other physical attributes.

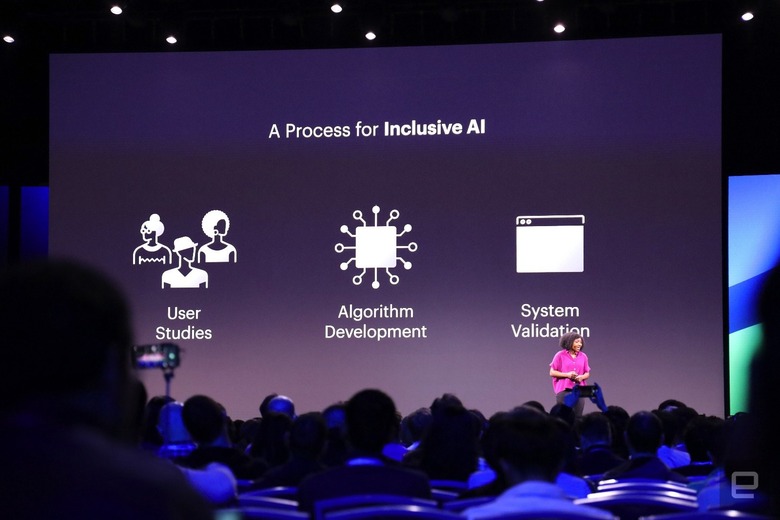

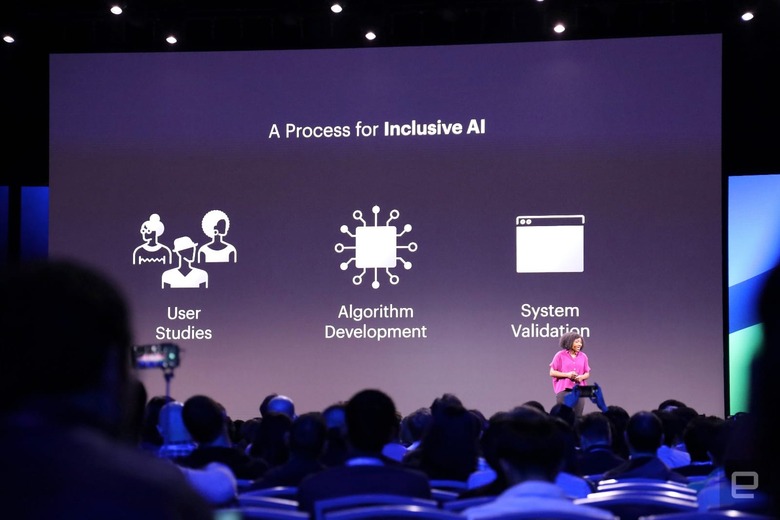

To build a more "inclusive" AI, Facebook says it is focusing on three major aspects: user studies, algorithm development and system validation. Lade Obamehinti, who leads technical strategy for Facebook's AR/VR team, said on stage that she noticed flaws in the system when she was using a pre-production version of the Portal smart camera. During a video call where she was telling a story, "It zoomed in on my white, male colleague instead of me," she said.

Obamehinti, who is Nigerian-American, said that after looking into the issue she uncovered gaps in representation in the Portal's AI-powered smart camera that could've led to the subpar experience. And if it happened to her, there was a good chance it could happen to someone else who would go on to own the product. While humans can easily distinguish skin tones, machines need to be taught. That's why Facebook is doing things like testing skin tones under different lighting conditions and then feeding that information to the AI powering its services, including augmented reality experiences and the Portal camera.
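To make the idea of testing across skin tones and lighting conditions concrete, here is a minimal sketch of what a per-group check could look like. It is not Facebook's code or method: the function names, the group labels and the sample data are all hypothetical, invented purely for illustration.

```python
from collections import defaultdict


def detection_rate_by_group(results):
    """Return the share of successful detections for each (skin_tone, lighting) pair.

    `results` is an iterable of (skin_tone, lighting, detected) tuples.
    The labels are illustrative assumptions, not a real taxonomy.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for skin_tone, lighting, detected in results:
        key = (skin_tone, lighting)
        totals[key] += 1
        hits[key] += int(detected)
    return {key: hits[key] / totals[key] for key in totals}


def largest_gap(rates):
    """Difference between the best-served and worst-served groups."""
    return max(rates.values()) - min(rates.values())


# Made-up sample data, for illustration only.
sample = [
    ("darker", "dim", True),
    ("darker", "dim", False),
    ("darker", "bright", True),
    ("darker", "bright", True),
    ("lighter", "dim", True),
    ("lighter", "dim", True),
    ("lighter", "bright", True),
    ("lighter", "bright", True),
]

rates = detection_rate_by_group(sample)
gap = largest_gap(rates)
```

A large gap between groups would be a signal that the system does not yet "work the same for everyone" and that more representative data or further tuning is needed.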

Facebook says the goal is to ensure that devices relying on AI perform the same for everyone and, most importantly, to build a framework strong enough to remove biases from its systems and offer the best personal experience possible. "We have to really understand our diverse product community and the most critical user problems when working with AI," Obamehinti said. "Inclusive means not excluding anyone."