Killing comments won't cure our toxic internet culture

But moderating them more aggressively might.

2014 was a year of reckoning for online news media. Following increasingly fractious and aggressive behavior by users, a number of marquee organizations threw their collective hands up and shut down their comments sections. Within weeks of each other, Recode, The Week, USA Today and Reuters joined with Popular Science and The Chicago Sun-Times in announcing that they would be shuttering their public forums in favor of holding those discussions on other social channels.

"If I was painting a picture of a site we were gonna have, and then at the end I said, 'Oh, by the way, at the bottom of all our articles we're going to prominently let any pseudonymous avatar do and say whatever they want with no moderation'... if there was no convention of internet commenting, if it wasn't this thing that was accepted, you would think that was a crazy idea," Ben Frumin, editor-in-chief of The Week, told Nieman Lab in 2015. In the half-decade since, comments sections have somehow persisted. But in an age of omnipresent social media, does the internet still need a comments section at all?

The comments section is, by definition, driven by the readers who use it to express their reactions to, and opinions about, news articles, often via pseudonymous or fully anonymous accounts. This format works both to the advantage and to the detriment of news sites.

On one hand, it provides a forum for people who might feel uncomfortable sharing their opinions offline due to either social or legal repercussions, Dr. T Frank Waddell, an assistant professor in the College of Journalism and Communications at the University of Florida, explained to Engadget.

On the other hand, "the online comments section sort of turned into the Wild West of people sharing their opinions," he continued. "And when these conversations turn negative, there can be detrimental consequences for the way that the news is being perceived, even though the comments section is totally separated from it."

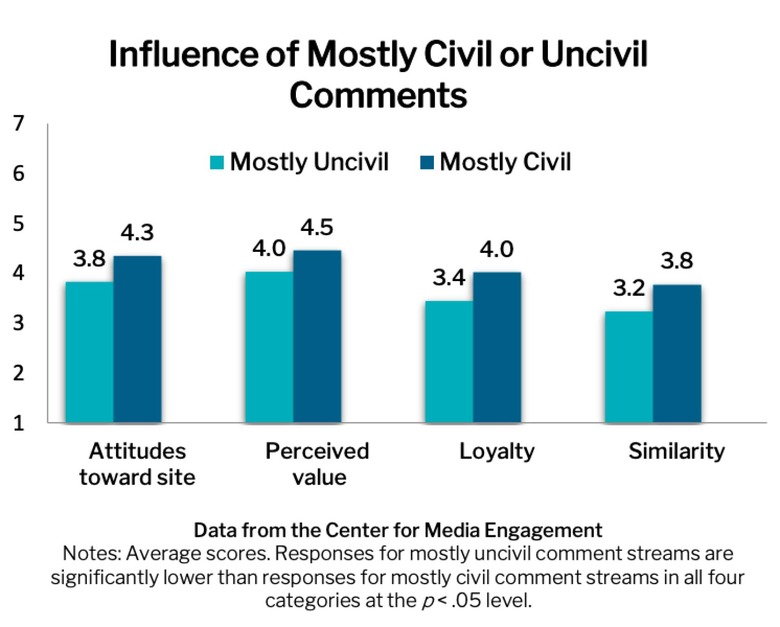

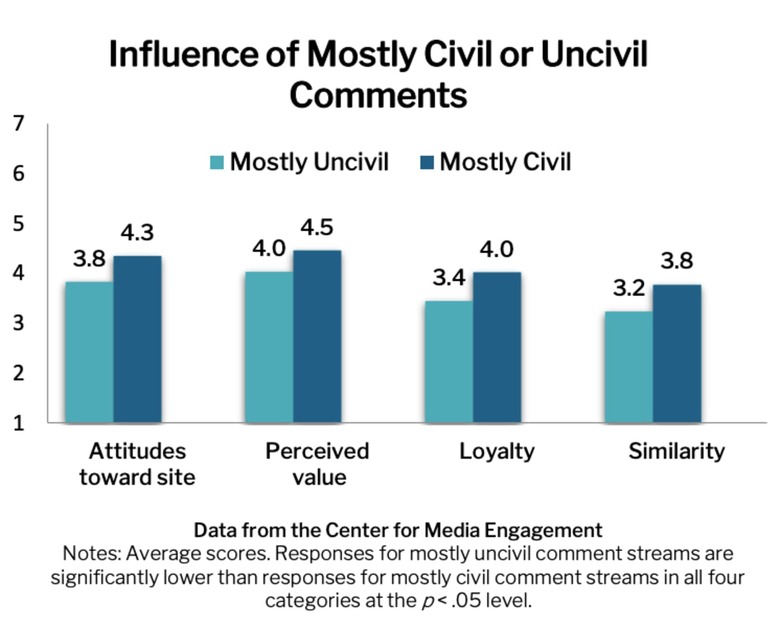

A recent study from the University of Texas at Austin found that incivility in the comments can have an outsized negative impact on readers' perceptions, not only of the article itself but also of the news outlet as a whole, a phenomenon known as the "nasty effect." Specifically, researchers found that people who read stories accompanied by nothing but negative comments "had less-positive attitudes toward the site and saw it as less valuable" and also "felt less loyal to the site and less similar to the commenters." What's more, the order of the comments (whether you read a positive or a negative one first) makes little difference; instead, there appears to be a threshold of negativity that must be reached before the effect takes hold.

"The argument used to be that it was anonymous comments [that caused problems] and people felt emboldened to say the worst things they possibly could because there were no repercussions," Craig Newman, Chicago Sun-Times managing editor, told Digiday in 2014. "Then, at some point in the last couple of years, a switch got flipped and anonymity was no longer a need when it came to spewing awfulness. It's almost like there's no shame anymore."

"Toxicity and instability feels like it's everywhere," Dr. Gina Masullo Chen, assistant professor in the School of Journalism at the University of Texas at Austin and co-researcher of the January study, told Engadget. "I mean, the research really shows it — one in five comments, 20 percent."

This is due in part to humans' cognitive bias toward negative information, Waddell said. "This is the central tendency for negative information to be more memorable, and more relevant to our decision-making and information." The fact that this toxicity feels more prevalent than it actually is can be attributed to the bandwagon effect.

"In the context of news, we mostly see [the bandwagon effect] when the crowd or online readers are negative," Waddell continued. Positive comments don't seem to have much impact on readers' opinions. However, "when you have comments sections, where people are criticizing the reporting or the quality of the news, we very much do see effects that are negative in nature."

But despite the negative impact that comments sections have on our perception of the news, neither Waddell nor Chen foresees them going away in the near future. "I'm not in favor of getting rid of comments," Chen said. "First of all, because I don't think we can ever get to that place." She points out that even if every news site on the internet were to shut down its forums, those conversations would still occur, just on social media like Twitter or Facebook. "I would rather use techniques that will improve the comment stream," she explained, "and some of the things that improve them are really strong moderation and pre-moderation."

It isn't as easy as simply turning off the comments, either. Doing so could also have direct financial consequences for news sites. "In a phenomenon known as shared reality," Maria Konnikova argued in The New Yorker in 2013, "our experience of something is affected by whether or not we will share it socially. Take away comments entirely, and you take away some of that shared reality, which is why we often want to share or comment in the first place. We want to believe that others will read and react to our ideas."

In short, comments sections are designed to drive repeat traffic to a page (you post a comment and check back occasionally to see whether it has drawn any replies or reactions) and to encourage users to share that content with others. Turning that feature off would likely lead to a decline in both traffic and advertising revenue for the site.

"Unfortunately, online digital media's reliance on ad revenue does lead to a lot of these concessions — like the need for a comment section to encourage click-throughs and more time spent on page," Waddell said. "If there was less reliance on that, it could possibly be a big positive for journalism."

"If we shut down the comments section," he said, "I suspect that those conversations will just simply occur through other vehicles, like Twitter or Facebook or perhaps even Instagram."

Dr. Chen's research has come to similar conclusions. She pointed out that when the shuttering of comments sections began to cascade in 2014, industry analysts expected the conversations to simply be shunted over to social media. The assumption was that, on Facebook at least, conversations would improve and become more civil due to the site's "real name" policy, which effectively eliminates user anonymity. "But there's not tremendous evidence that that has happened," she said.

Chen also described her own research comparing conversations on Twitter and Facebook about a pre-Trump White House administration. "We found that Twitter was more uncivil than Facebook and that Facebook people were having more reasoned conversations," she said. "But some of that was just because you have more space on Facebook; you're not limited to, at that time, 140 characters. It's hard to make a reasoned argument in a very short space."

So instead of shutting down the comments wholesale, Waddell suggests journalists take a more hands-on role in the discussion following a story's publication. "Research shows that when journalists are involved in the comment-production process, the negative comments that might have been shared tend to be a little bit less influential than when it's unmoderated and the audience is allowed to fully shape the conversation."

"We've also found that if you have journalist engaging comments in-stream, it improves the overall score, even if they're just in there saying, 'Hey, here's a follow up on my story,' or, 'Oh, great comment,' that reinforces good behavior and it does improve the tenor of the conversation," Chen said.

However, moderating comments can take a severe emotional toll on the humans tasked with doing it. Earlier this year, Facebook came under fire for the abysmal working conditions its content moderators were forced to endure and the poor mental-health support they received. The problem got so out of hand that a number of moderators began to believe the conspiracy theories they had been hired to filter out.

As a result, a number of companies are looking to hand off the drudgery of comment moderation to algorithms and AI, though those systems are still likely years away from being competent enough to handle the rigors of the job. "There has been mixed success in training algorithms to detect incivility," Chen said. "It's complicated. You know, people say things in a sarcastic way that an algorithm is not as good at picking up. But in time, we can train them."

"My research shows that people can tolerate some incivility," Chen said. "It's not like one uncivil comment is going to be the tipping point, right? People can handle imperfect, they just can't handle it being really awful."