AI can automatically rewrite outdated text in Wikipedia articles

You wouldn't have to wait for a human editor to handle a trivial task.

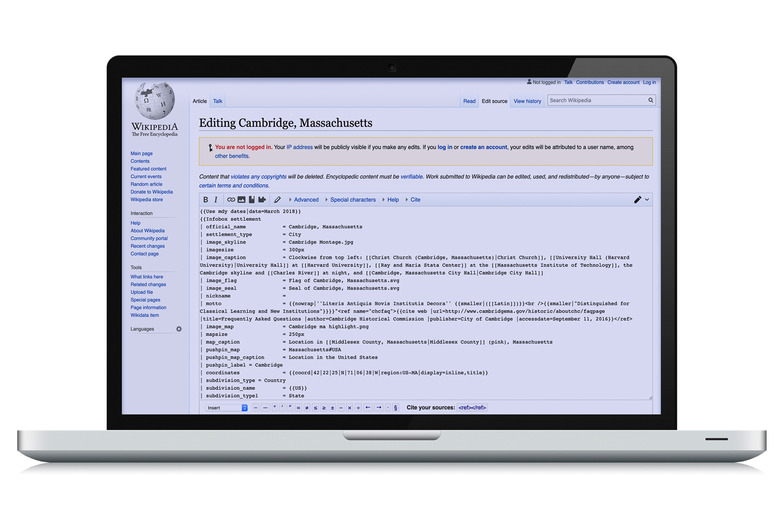

It's good to be skeptical of Wikipedia articles for a number of reasons, not the least of which is the possibility of outdated info. Human editors can only do so much. And while there are bots that can edit Wikipedia, they're usually limited to updating canned templates or fighting vandalism. MIT might have a more useful (not to mention more elegant) solution. Its researchers have developed an AI system that automatically rewrites outdated sentences in Wikipedia articles while maintaining a human tone. The result shouldn't look out of place in a carefully crafted paragraph, then.

The machine learning-based system is trained to recognize the differences between a Wikipedia article sentence and a claim sentence with updated facts. If it sees any contradictions between the two sentences, it uses a "neutrality masker" to pinpoint both the contradictory words that need deleting and the ones it absolutely has to keep. After that, an encoder-decoder framework determines how to rewrite the Wikipedia sentence using simplified representations of both that sentence and the new claim.
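To make the mask-then-rewrite idea above a bit more concrete, here's a deliberately tiny Python sketch. It is not MIT's model: there is no machine learning in it, and every function name is made up for illustration. It assumes the only "contradictions" are numbers that differ between the article sentence and the claim, masks those, then fills the blanks from the claim while leaving the rest of the sentence alone.

```python
import re

MASK = "[MASK]"  # hypothetical placeholder token, chosen for this sketch


def tokenize(text):
    # Split into numbers (keeping "23,000" whole), words, and punctuation marks.
    return re.findall(r"\d[\d,.]*\d|\d|\w+|[^\w\s]", text)


def is_number(token):
    return token[0].isdigit()


def mask_outdated(sentence_tokens, claim_tokens):
    # Toy stand-in for the "neutrality masker": flag numbers in the
    # article sentence that the claim does not repeat; keep everything else.
    claim_numbers = {t for t in claim_tokens if is_number(t)}
    return [
        MASK if is_number(t) and t not in claim_numbers else t
        for t in sentence_tokens
    ]


def fill_masks(masked_tokens, sentence_tokens, claim_tokens):
    # Toy stand-in for the encoder-decoder step: drop the claim's new
    # numbers into the masked slots, in order of appearance.
    old_numbers = {t for t in sentence_tokens if is_number(t)}
    new_numbers = iter(
        t for t in claim_tokens if is_number(t) and t not in old_numbers
    )
    return [next(new_numbers, MASK) if t == MASK else t for t in masked_tokens]


def detokenize(tokens):
    # Rejoin tokens and remove the space left before punctuation.
    return re.sub(r"\s+([.,;:!?])", r"\1", " ".join(tokens))


def update_sentence(sentence, claim):
    sentence_tokens = tokenize(sentence)
    claim_tokens = tokenize(claim)
    masked = mask_outdated(sentence_tokens, claim_tokens)
    return detokenize(fill_masks(masked, sentence_tokens, claim_tokens))


outdated = "The town has a population of 23,000 residents."
claim = "The town now has 25,400 residents."
print(update_sentence(outdated, claim))
```

The sentence keeps its original wording and only the stale figure is swapped out. That's the general shape of the approach described above, though the real system learns what to mask and how to rewrite rather than relying on a hard-coded rule like this one.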

The system can also be used to supplement datasets meant to train fake news detectors, potentially reducing bias and improving accuracy.

As-is, the technology isn't quite ready for prime time. Humans rating the AI's output gave it average scores of 4 out of 5 for the accuracy of its factual updates and 3.85 out of 5 for grammar. That's better than other systems for generating text, but it still suggests you might notice the difference. If researchers can refine the AI, though, this might be useful for making minor edits to Wikipedia, news articles (hello!) or other documents in those moments when a human editor isn't practical.