The secret behind Amazon Echo's alert sounds

Every ding, every chime contains more information than you think.

"Alexa?"

If you own an Amazon Echo, there's a chance that just reading that word triggered a Pavlovian "bimm" in your mind. Or, if you have the wake sound disabled, maybe it's the timer alarm that makes you twitch if you hear it on a TV show (or someone else's speaker). Whatever you think of the sounds a smart speaker makes, none of them are accidental. They have all been meticulously designed to pull your attention or provide reassurance, depending on their goal. And the Echo could have sounded very different from how we know it today.

The Echo series, in particular, has been instrumental in defining the smart speaker and the sounds we expect and (to avoid burned pizza) need it to make. Maybe you don't think about these transient acoustic signposts much – the beeps and boops that bookend Alexa's verbal responses – and that's okay, that's by design too. In fact, Chris Seifert, Senior Design Manager at Amazon, wouldn't mind if you didn't notice these sounds at all.

"One of the things that people often say about sound is you only hear about it when it's wrong," Seifert told Engadget. "People don't go to a play and say how great the sound was, [it] probably wasn't a good play, if that's what your focus is."

This is why, when you do say the magic wake word, Alexa responds precisely as it does. That "bimm" might seem like a neat, generic alert sound, but it was purposefully crafted that way. Seifert, the first person Amazon employed to build Echo's sound design, reckoned that for users to be comfortable with a smart speaker, all the interactions – not just the spoken answers from Alexa – needed to feel natural and in context. "If we just use skeuomorphism, and just real-world sounds, that's kind of strange, in a purely digital experience," he said.

This is a concept Seifert refers to as "abstract familiarity." You know it, but you don't know why you know it. And there's no better example of this than Alexa's wake sound, which is based on the ubiquitous and very human "uh-huh." A sound we can hear dozens of times a day. A sound that lets us know someone is listening without us feeling interrupted.

"We took recordings of people saying that and we analyzed it and looked at the frequency it's used and how long they typically took and how loud they were in comparison to their actual normal speaking responses," Seifert said. "And then we recreated that, tried lots of different instruments. Some of them, real-world instruments, some of them completely synthetic, and found this combination of all of the above to create what we call our wake sound."

If you have an Alexa device nearby, go ahead and try it. That short sound is also curiously practical. You might find that you respond to it differently than someone else does. At first, I always waited for the sound and then issued my command. Turns out, I might have been a little too polite. Seifert informs me it was designed so that you don't need to wait; you can happily talk right over it. Again, this is not a coincidence.

"I love this topic, because the whole point of the technology is it should work for you, right?" Seifert said. "Even you know, during the day, I might say, 'Alexa, what time is it?' all in one phrase, right? Because I'm right by the device, just rattle it off. If I'm thinking of a larger question, before I start this long thing, I might actually pause to make sure I'm heard because I'm halfway down the hall. And I don't want to have to repeat the whole thing if it never heard me."

If you ever wondered what that wake noise could have sounded like in an alternative universe or with some of the real instruments, then you can hear them in the audio embedded below.

Good sound design is an exercise in Occam's razor: How does one create informative, appropriate feedback in a tone that's maybe less than half a second long? We're all familiar with the dreaded Windows error alert or maybe the satisfying iPhone message swoosh. The terse, off-key alerts ignite frustration while the crisp chime of a task completed just feels right. But is it merely a case of choosing something that sounds positive or negative?

Not if the Echo wake sound is any indication. But sometimes, Seifert and his team have the luxury of a little more sonic breathing room. Take the Echo's boot-up sound, which runs a full nine seconds: the audio equivalent of War and Peace in UI sound design terms. It's also the very first sound that any Echo owner is going to hear, so it has to count. Even more so back in 2014, when these things were new.

"We were making a speaker that you spoke to which now we totally take for granted," Seifert said. "At the time, that was really a novel thing, like you're just going to speak to this, this device, you literally aren't having to touch it in any way, shape or form. So how do we encourage people to feel comfortable doing something that's so new, that that's just not expected?"

Seifert went on to explain how they needed to create expectations for everything to come. They needed a sound that would indicate this screenless device was powering up for the first time and that right at the end of those vital nine seconds Alexa was listening, waiting for you. The result is a pad sound that creates a little bit of suspense before breaking itself apart into three ascending notes that lead us right into Alexa's first word: Hello.

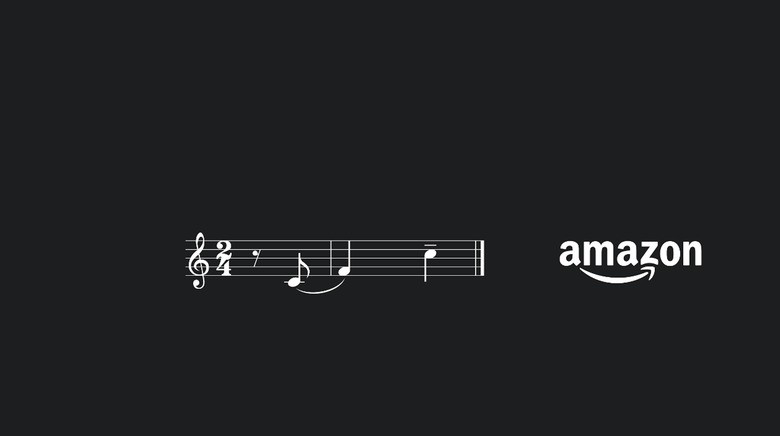

These three notes also make up the wake sound (two at wake, the third comes later in something called an "end pointing" tone). Oh, and the incoming call ringtone uses these three notes and adds in two more for fun. In fact, pretty much all of the Echo's non-verbal signals boil down to just these three notes at some level. They're just placed in different orders, pitches and lengths depending on whether they want your attention, already have it or no longer need it.

"From the moment you boot up your device, you hear these three notes. When you speak to it, you hear two before you speak and one at the end, when you get an incoming call, you get a five-note version of that melody," Seifert explained. "That's all happening before the logic part of the brain kicks in, and you start interpreting what you're hearing and thinking of the meaning of it." In short, they are playing with our minds and we're not even mad about it. If you're still not convinced, maybe you will be when you learn that those three recurring tones are meant to sound like someone saying "Am-a-zon."

But there's one small thing that Seifert and his team haven't been able to crack yet: personalization. When it comes to our individual devices, we can change and choose the sounds they make, but an Echo is often communal, part of the shared home. How does one allow for some level of personalization while maintaining the ubiquitous understanding of an "uh-huh?"

According to Seifert, that's the next big challenge in smart-speaker land. "The next step is to make all of these experiences such that people can personalize them when it's just them, but that they're still understood by a collective group." But he also remains tight-lipped, for now, about how that might actually work. "I think we'll see a lot of the future is going to have more personalization and customization [...] and that's the challenge because prior to Alexa and Echo, most sounds were made, you know, one to one."